Data lineage is the record of where data comes from, how it moves through systems, and what happens to it along the way. It works like version control for data flows: every transformation, join, filter, and aggregation is tracked. When something goes wrong downstream (a dashboard shows the wrong number, an ML model produces a strange prediction, a regulator asks how a decision was made), you can trace the problem back to its source.

The concept has been around for decades. What changed is the scope. In the 2026 Gartner Magic Quadrant for Data and Analytics Governance Platforms, lineage is no longer just about tracking database columns through ETL jobs. The scope now extends to ML features, model versions, AI-generated outputs, and unstructured data.

Gartner predicts that by 2027, 60% of data governance teams will prioritize governing unstructured data to support generative AI use cases. For data engineering teams, this means lineage is expanding from a compliance tool into a core piece of AI infrastructure.

This article covers what data lineage is, the different types that matter, how it supports AI governance and EU AI Act compliance, and where off-the-shelf tools stop and custom engineering starts.

Summary

- Data lineage tracks data from origin through every transformation to its final consumption point. It answers three questions: where did this data come from, what happened to it, and what depends on it.

- The scope of lineage is expanding. The 2026 Gartner MQ for D&A Governance now requires platforms to track lineage across structured data, unstructured data, ML models, and AI-generated outputs.

- AI model lineage is becoming a regulatory requirement. The EU AI Act mandates documented traceability for high-risk AI systems. Organizations deploying AI in healthcare, finance, and hiring need lineage from training data through model output.

- Off-the-shelf lineage tools cover standard connectors. Custom engineering is needed for proprietary ETL, SCADA/IoT data flows, and ML pipelines that no catalog natively traces.

What is data lineage?

A complete lineage record connects every upstream source to every downstream consumer, creating a map that teams use for debugging, impact analysis, compliance audits, and root cause investigation.

In practical terms, lineage answers three questions.

First: where did this data come from? When a quarterly revenue number looks off, lineage tells you which source tables, transformations, and aggregation rules produced it.

Second: what happened to it along the way? Every filter, join, type cast, and business logic rule is recorded so you can identify where a value changed.

Third: what depends on it? If a source schema changes, lineage shows every downstream report, model, and application that will be affected, before you break them.
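The three questions map onto three operations on a directed graph. The following is a minimal sketch, assuming lineage is stored as edges annotated with a transformation description; the `LineageGraph` class and every dataset name in it are hypothetical and chosen only for illustration.

```python
from collections import defaultdict, deque


class LineageGraph:
    """Toy in-memory lineage graph: nodes are datasets, edges are transformations."""

    def __init__(self):
        self._downstream = defaultdict(set)
        self._upstream = defaultdict(set)
        self._transforms = {}

    def add_edge(self, source, target, transform):
        self._downstream[source].add(target)
        self._upstream[target].add(source)
        self._transforms[(source, target)] = transform

    def _walk(self, start, neighbors):
        # Breadth-first traversal that collects every reachable node.
        seen, queue = set(), deque([start])
        while queue:
            node = queue.popleft()
            for nxt in neighbors.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def sources_of(self, node):
        """Question 1: where did this data come from?"""
        return self._walk(node, self._upstream)

    def transforms_into(self, node):
        """Question 2: what happened to it on the way in (direct inputs only)?"""
        return {src: self._transforms[(src, node)] for src in self._upstream.get(node, ())}

    def dependents_of(self, node):
        """Question 3: what depends on it?"""
        return self._walk(node, self._downstream)


graph = LineageGraph()
graph.add_edge("postgres.orders", "snowflake.orders_cleaned", "filter out test orders")
graph.add_edge("snowflake.orders_cleaned", "finance.revenue_daily", "sum(amount) by day")
graph.add_edge("snowflake.fx_rates", "finance.revenue_daily", "join on currency")
graph.add_edge("finance.revenue_daily", "dashboard.quarterly_revenue", "sum by quarter")

print(sorted(graph.sources_of("dashboard.quarterly_revenue")))
print(graph.transforms_into("finance.revenue_daily"))
print(sorted(graph.dependents_of("postgres.orders")))
```

A production lineage store would persist this graph and version the edges, but the upstream walk, the edge annotations, and the downstream walk stay the same in spirit.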

Types of data lineage

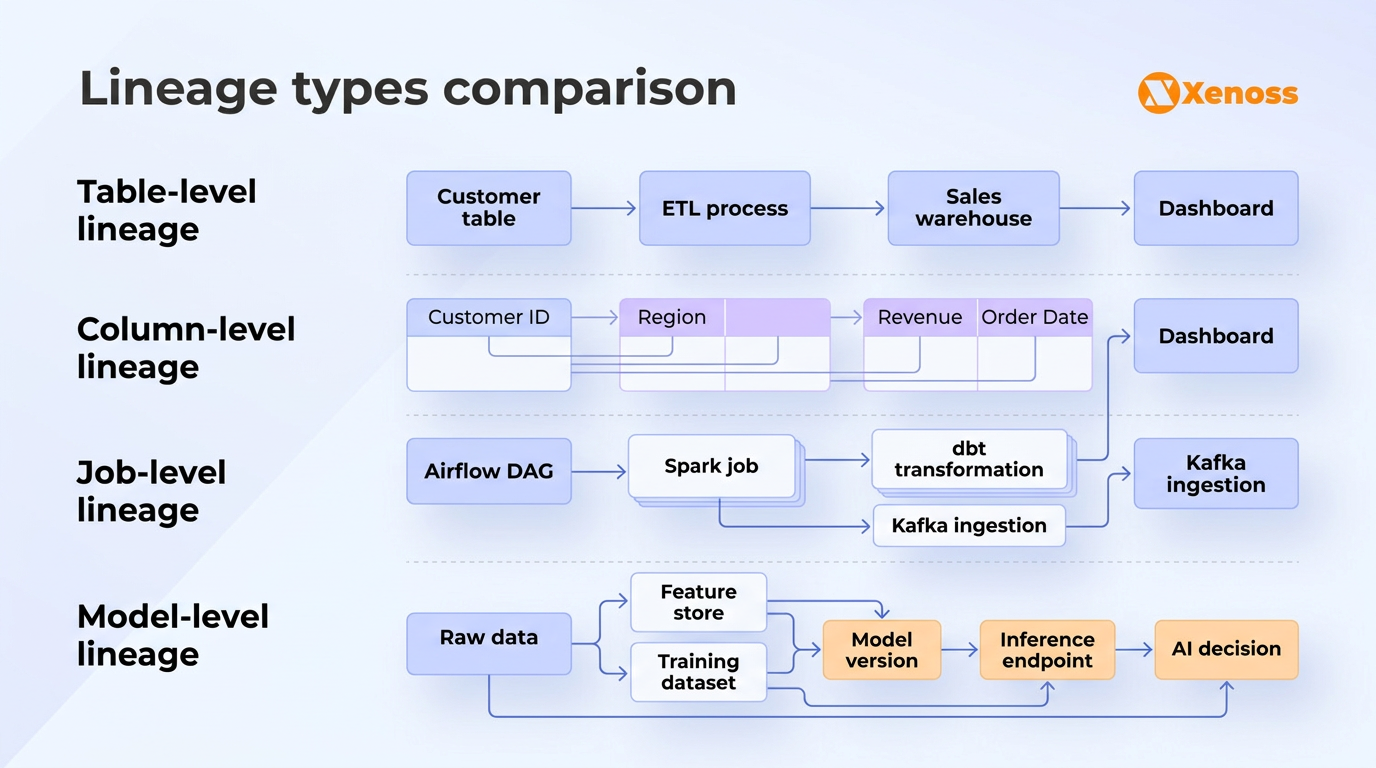

Lineage operates at different levels of granularity, and each level serves different teams and purposes.

| Type | What it tracks | Who uses it | Example |
|---|---|---|---|
| Table-level | Relationships between source and destination tables across systems | Data architects, platform teams | Orders table in Postgres feeds into orders_cleaned in Snowflake |
| Column-level | How individual columns flow through transformations | Data engineers, analysts debugging metric discrepancies | revenue_usd is computed from amount * exchange_rate, where exchange_rate comes from the FX table |
| Job-level | Which pipeline jobs, DAGs, or scripts produce which outputs | DataOps teams, on-call engineers | Airflow DAG daily_revenue_rollup reads from 3 tables and writes to finance.revenue_daily |
| Model-level | Which datasets and features were used to train, validate, and serve ML models | ML engineers, compliance teams | Churn prediction model v2.3 trained on customer_features_v4 snapshot from March 1 |

Most data governance platforms handle table-level and column-level lineage through automated parsing of SQL queries and ETL job definitions.

Job-level lineage requires integration with orchestration tools like Airflow, dbt, or Prefect.

Model-level lineage, the newest and most complex category, requires tracking across feature stores, experiment tracking systems, and model registries. This is the frontier where most off-the-shelf tools are still catching up.
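The granularity levels are related: column-level edges carry enough information to derive table-level edges, but not the other way around. Here is a small sketch of that relationship, loosely following the revenue_usd example from the table. The `ColumnEdge` type, the helper functions, and the exact table names are assumptions made for illustration.

```python
from typing import NamedTuple


class ColumnEdge(NamedTuple):
    source: str      # "table.column" the value is read from
    target: str      # "table.column" the value is written to
    expression: str  # transformation that produced the target column


def table_of(column_ref):
    # "revenue_daily.revenue_usd" -> "revenue_daily"
    return column_ref.rsplit(".", 1)[0]


def roll_up_to_tables(edges):
    """Derive table-level lineage from column-level edges."""
    return {
        (table_of(e.source), table_of(e.target))
        for e in edges
        if table_of(e.source) != table_of(e.target)
    }


def column_sources(edges, target):
    """List the columns that feed one target column."""
    return sorted(e.source for e in edges if e.target == target)


edges = [
    ColumnEdge("orders_cleaned.amount", "revenue_daily.revenue_usd", "amount * exchange_rate"),
    ColumnEdge("fx.exchange_rate", "revenue_daily.revenue_usd", "amount * exchange_rate"),
    ColumnEdge("orders_cleaned.order_date", "revenue_daily.day", "cast(order_date as date)"),
]

print(column_sources(edges, "revenue_daily.revenue_usd"))
print(sorted(roll_up_to_tables(edges)))
```

Storing the finest granularity you can capture and rolling it up on demand is usually cheaper than maintaining each level separately.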

Data lineage for AI: Why model-level tracking changes everything

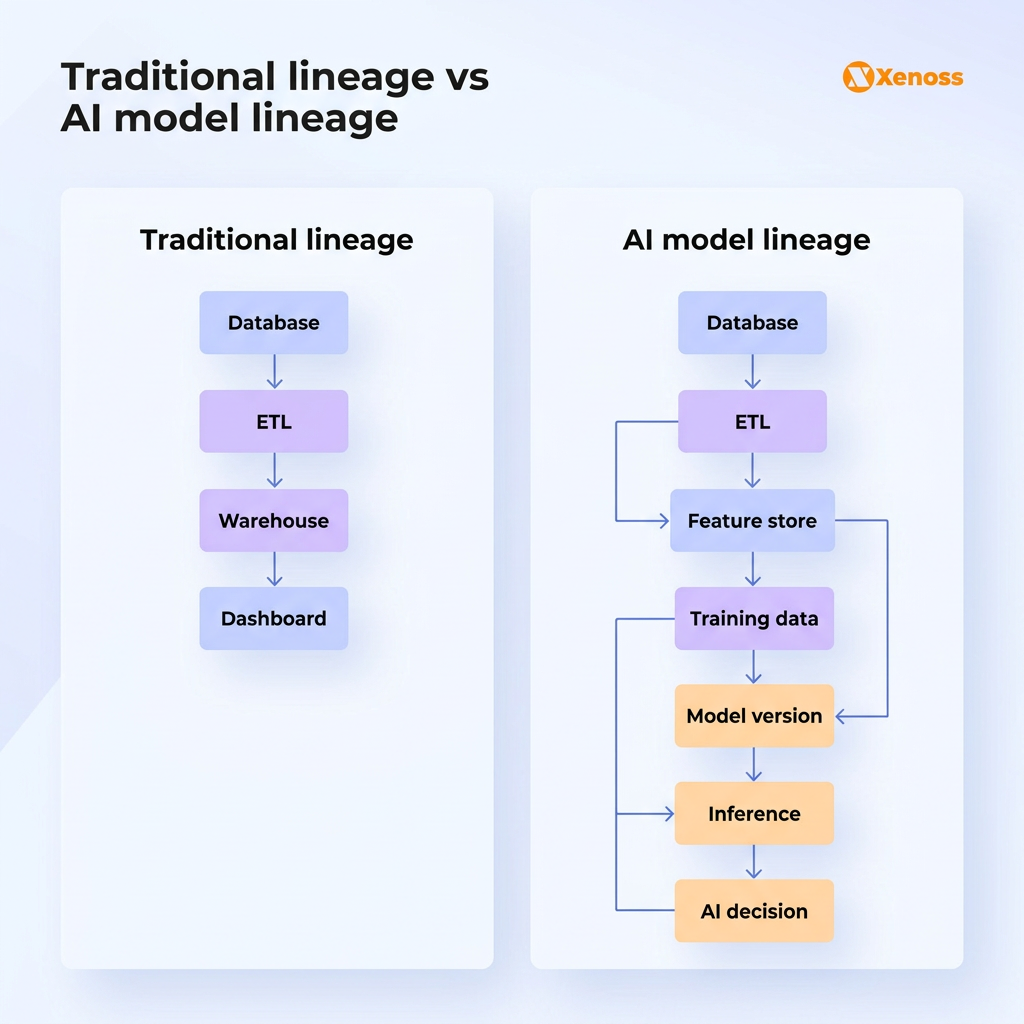

Traditional data lineage tracks data from source to report. AI model lineage extends that chain further: from source data through feature engineering, model training, and inference, all the way to AI-generated decisions.

Gartner’s 2026 D&A Governance MQ makes this explicit. Governance platforms are now expected to support “analytics model governance” and track lineage for AI assets. The scope of lineage has expanded from a database column to an ML feature to an AI-generated recommendation.

This expansion matters for four specific reasons.

- Debugging model drift. When an ML model’s accuracy degrades, the cause is almost always an upstream data problem: a source schema change, a feature pipeline that started producing nulls, or a training dataset contaminated by a data quality issue.

Without model-level lineage connecting the model version back to the specific training data snapshot and feature definitions, debugging becomes guesswork. With lineage, you can trace the degradation to the exact data change that caused it.

- Regulatory compliance. The EU AI Act, whose obligations began applying in phases from February 2025, requires providers of high-risk AI systems to maintain technical documentation that includes traceability of training, validation, and testing datasets.

In banking, healthcare, insurance, and hiring, regulators can ask: “Which data was used to train this model? How was it transformed? Was any PII involved?” Without model-level lineage, answering these questions means weeks of forensic investigation. With it, the answer is a query against the lineage graph.

- Feature store governance. Feature stores (Feast, Tecton, Databricks Feature Store) centralize feature computation for ML workloads. But features derived from features create dependency chains that are invisible without lineage. When a base feature changes (say, the definition of “active user” shifts from 30-day to 14-day activity), every downstream feature and every model consuming it needs to be re-evaluated. Lineage makes these dependency chains visible and auditable.

- Governance of AI-generated outputs. As agentic AI systems scale, lineage needs to cover not just what data a model was trained on, but what outputs it generates and which decisions those outputs inform.

A Gartner survey of 360 organizations found that those with AI governance platforms are 3.4 times more likely to achieve high effectiveness in AI governance. Lineage is the infrastructure that makes governance enforceable rather than aspirational.
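The feature dependency chains described above can be made concrete with a small impact-analysis sketch. It assumes each asset declares its direct inputs; the feature and model names are hypothetical and do not come from any particular feature store.

```python
# Each asset maps to the assets it is computed from (all names are illustrative).
DEPENDS_ON = {
    "active_user_flag": [],
    "session_count": [],
    "active_days_ratio": ["active_user_flag"],
    "engagement_score": ["active_days_ratio", "session_count"],
    "model:churn_v2": ["engagement_score"],
    "model:upsell_v1": ["session_count"],
}


def impacted_by(changed, depends_on):
    """Return every feature or model that transitively consumes `changed`."""
    # Invert the "depends on" map into a "consumed by" map.
    consumers = {}
    for asset, inputs in depends_on.items():
        for inp in inputs:
            consumers.setdefault(inp, []).append(asset)

    impacted, stack = set(), [changed]
    while stack:
        current = stack.pop()
        for consumer in consumers.get(current, []):
            if consumer not in impacted:
                impacted.add(consumer)
                stack.append(consumer)
    return impacted


# If the definition of "active user" changes, what needs re-evaluation?
print(sorted(impacted_by("active_user_flag", DEPENDS_ON)))
```

In this toy graph the change reaches the churn model through two intermediate features, while the upsell model is untouched. That distinction is exactly what is hard to see without lineage.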

Why this matters: Spending on AI governance platforms is projected to reach $492 million in 2026, according to Gartner. AI governance is now a budget line item. Organizations deploying AI in regulated industries without model-level lineage are accumulating compliance risk that will eventually materialize as audit failures, fines, or forced model decommissioning.

Where off-the-shelf lineage tools stop

Modern data governance platforms like Atlan, Collibra, Informatica, and OpenMetadata offer automated lineage through SQL parsing, connector-based metadata extraction, and integration with dbt, Airflow, and Spark. For organizations running standard cloud data stacks (Snowflake + dbt + Airflow, or Databricks + Delta Lake), these tools cover the majority of lineage needs.

They start falling short in specific enterprise scenarios.

Proprietary ETL and transformation logic. Large enterprises run custom ETL frameworks built over years, often with transformation logic embedded in stored procedures, Java applications, or proprietary scripting languages that no lineage tool parses natively. Extracting lineage from these systems requires custom parsers that understand the specific transformation semantics.
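To give a sense of what a custom parser involves, here is a deliberately naive sketch that pulls table-level edges out of `INSERT INTO ... SELECT` statements in a stored procedure body. It relies on regular expressions, which is an assumption made for brevity: a real parser needs a proper grammar for the dialect in question and would have to handle CTEs, subqueries, dynamic SQL, and semicolons inside string literals. The procedure text and table names are made up.

```python
import re

# Naive patterns: a qualified name after INSERT INTO, FROM, or JOIN.
INSERT_RE = re.compile(r"\bINSERT\s+INTO\s+([\w.]+)", re.IGNORECASE)
SOURCE_RE = re.compile(r"\b(?:FROM|JOIN)\s+([\w.]+)", re.IGNORECASE)


def extract_table_lineage(sql_text):
    """Return (source_table, target_table) pairs for INSERT ... SELECT statements."""
    edges = set()
    # Splitting on ";" is a simplification that breaks on literals containing ";".
    for statement in sql_text.split(";"):
        target = INSERT_RE.search(statement)
        if not target:
            continue  # statements that do not write via INSERT are skipped
        for source in SOURCE_RE.findall(statement):
            edges.add((source.lower(), target.group(1).lower()))
    return edges


PROCEDURE_BODY = """
INSERT INTO finance.revenue_daily (day, revenue_usd)
SELECT o.order_date, SUM(o.amount * fx.exchange_rate)
FROM staging.orders_cleaned o
JOIN ref.fx_rates fx ON fx.currency = o.currency
GROUP BY o.order_date;

DELETE FROM staging.tmp_orders;
"""

for edge in sorted(extract_table_lineage(PROCEDURE_BODY)):
    print(edge)
```

Even this toy version shows why the work is system-specific: every shortcut taken here corresponds to a construct that some real ETL framework uses heavily.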

SCADA and IoT data flows. Manufacturing and energy companies ingest sensor data through SCADA systems, industrial protocols (OPC-UA, MQTT, Modbus), and custom data collectors. The lineage from a sensor reading on an oil platform to a predictive maintenance model’s input is invisible to any off-the-shelf governance tool. Tracing it requires custom integration engineering that maps the physical data flow through industrial systems into the lineage graph.

Custom ML pipelines. Teams running custom ML training pipelines outside of managed platforms (SageMaker, Vertex AI, Databricks MLflow) need custom instrumentation to capture which training data was used, which features were computed, and which model version resulted. OpenLineage provides a framework for this, but the integration work is specific to each pipeline architecture.

Cross-system lineage in hybrid environments. When data flows from an on-premises Oracle database through a custom middleware layer into a cloud-based data lake, then into a feature store, and finally into an ML model, no single lineage tool covers the entire chain. Enterprise lineage in hybrid environments is almost always a custom engineering project that stitches together metadata from multiple systems into a unified lineage graph.
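Much of the stitching work comes down to recognizing that two systems refer to the same dataset by different names. The sketch below assumes each system exports its own list of edges and that a hand-maintained alias table maps system-specific identifiers to one canonical name. All identifiers, system labels, and function names are hypothetical.

```python
def canonical(name, aliases):
    """Normalize a system-specific identifier to one canonical dataset name."""
    key = name.strip().lower()
    return aliases.get(key, key)


def stitch(edge_sets, aliases):
    """Merge per-system edges into one graph of (source, target, system) triples."""
    unified = set()
    for system, edges in edge_sets.items():
        for source, target in edges:
            unified.add((canonical(source, aliases), canonical(target, aliases), system))
    return unified


def path_exists(unified, start, end):
    """Check whether lineage can be traced end to end across systems."""
    adjacency = {}
    for source, target, _system in unified:
        adjacency.setdefault(source, set()).add(target)
    seen, stack = {start}, [start]
    while stack:
        node = stack.pop()
        if node == end:
            return True
        for nxt in adjacency.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return False


EDGE_SETS = {
    "oracle_export": [("ORACLE.SALES.ORDERS", "middleware.orders_queue")],
    "lake_ingest": [("mw://orders_queue", "lake.raw_orders")],
    "feature_pipeline": [("s3://lake/raw_orders", "features.order_stats")],
}
ALIASES = {
    "mw://orders_queue": "middleware.orders_queue",
    "s3://lake/raw_orders": "lake.raw_orders",
}

stitched = stitch(EDGE_SETS, ALIASES)
print(path_exists(stitched, "oracle.sales.orders", "features.order_stats"))
```

Without the alias table the three edge sets stay disconnected islands, which is the usual state of lineage in hybrid environments before any integration work is done.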

Why this matters: The lineage gaps that matter most are the ones that involve your most critical data flows. Ironically, these are also the flows most likely to be custom-built and therefore invisible to off-the-shelf tools. For regulated industries, the lineage you cannot trace is exactly the lineage a regulator will ask about.

Data lineage best practices for enterprise environments

Start with the data flows that regulators care about. Trying to map lineage across every table and pipeline in the organization is a multi-year project that rarely finishes. Start with the regulated flows: financial reporting data, PII-containing pipelines, AI training data for high-risk models. These are the flows auditors will ask about, and they deliver immediate compliance value.

Automate lineage capture at the pipeline level. Manual lineage documentation goes stale the moment a pipeline changes. Use tools that extract lineage automatically from SQL parsing (dbt, Atlan, OpenMetadata) and pipeline metadata (Airflow, Prefect). For custom ETL, instrument pipelines to emit OpenLineage events so that lineage stays current without manual maintenance.
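For a custom ETL job, instrumentation can be as simple as building an event at the end of each run and sending it to a lineage backend. The sketch below constructs a plain dictionary shaped after the OpenLineage run event using only the standard library. The field names reflect a reading of the OpenLineage spec and should be checked against the version you target; the `schemaURL` version, the `producer` URL, the namespace, and the job and dataset names are all assumptions. Transport to a backend is intentionally left out.

```python
import json
import uuid
from datetime import datetime, timezone


def build_run_event(event_type, run_id, job_name, inputs, outputs, namespace="custom_etl"):
    """Build a dict shaped like an OpenLineage run event (verify against the spec)."""
    return {
        "eventType": event_type,  # e.g. "START", "COMPLETE", "FAIL"
        "eventTime": datetime.now(timezone.utc).isoformat(),
        # Placeholder identifying the emitting code; replace with your own URI.
        "producer": "https://example.com/custom-etl",
        # Assumed spec version; confirm the URL for the version you use.
        "schemaURL": "https://openlineage.io/spec/2-0-2/OpenLineage.json#/$defs/RunEvent",
        "run": {"runId": run_id},
        "job": {"namespace": namespace, "name": job_name},
        "inputs": [{"namespace": namespace, "name": name} for name in inputs],
        "outputs": [{"namespace": namespace, "name": name} for name in outputs],
    }


run_id = str(uuid.uuid4())
event = build_run_event(
    "COMPLETE",
    run_id,
    job_name="daily_revenue_rollup",
    inputs=["staging.orders_cleaned", "ref.fx_rates"],
    outputs=["finance.revenue_daily"],
)

# In a real pipeline this payload would be sent to the lineage backend.
print(json.dumps(event, indent=2))
```

Because the event is emitted by the pipeline itself, the lineage record changes whenever the pipeline's inputs or outputs change, with no separate documentation step to forget.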

Connect lineage to data quality. Lineage without quality monitoring tells you where data came from but not whether you can trust it. Connecting lineage to data quality metrics (freshness, completeness, uniqueness, schema conformance) lets teams trace a bad number not just to a source table but to the specific quality failure that caused it.
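One way to picture the connection: walk upstream from the asset showing a bad number and report every failed quality check found along the way. This is a minimal sketch, assuming quality results are available as a simple pass/fail status per dataset and check. The dataset names, check names, and data structures are hypothetical.

```python
from collections import deque

# Direct upstream inputs of each asset (illustrative names).
UPSTREAM = {
    "dashboard.quarterly_revenue": ["finance.revenue_daily"],
    "finance.revenue_daily": ["snowflake.orders_cleaned", "snowflake.fx_rates"],
    "snowflake.orders_cleaned": ["postgres.orders"],
}

# Latest quality check results per dataset.
QUALITY = {
    "finance.revenue_daily": {"freshness": "pass", "completeness": "pass"},
    "snowflake.orders_cleaned": {"uniqueness": "pass"},
    "snowflake.fx_rates": {"freshness": "fail", "completeness": "pass"},
    "postgres.orders": {"schema_conformance": "pass"},
}


def failing_upstream_checks(node, upstream, quality):
    """Breadth-first walk upstream, collecting (dataset, check) pairs that failed."""
    findings, seen, queue = [], {node}, deque([node])
    while queue:
        current = queue.popleft()
        for check, status in sorted(quality.get(current, {}).items()):
            if status == "fail":
                findings.append((current, check))
        for parent in upstream.get(current, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return findings


print(failing_upstream_checks("dashboard.quarterly_revenue", UPSTREAM, QUALITY))
```

Here the stale FX rates surface as the likely cause of the dashboard discrepancy, two hops upstream from where the problem was noticed.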

Extend lineage to ML model assets. Track which training data, feature definitions, and hyperparameters produced each model version. Store this metadata in a model registry (MLflow, Weights & Biases, custom) and connect it to your lineage graph. This turns compliance from a documentation exercise into a queryable system.
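As a rough illustration of what "queryable" means here, the sketch below records the lineage of one model version and answers the question "which models were trained on this dataset?". It uses an in-memory list as a stand-in for a model registry and a content hash as a stand-in for a dataset snapshot identifier. The `ModelLineage` fields, the helper functions, and the sample values are assumptions, not the schema of any specific registry.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass
class ModelLineage:
    model_name: str
    model_version: str
    training_dataset: str
    dataset_fingerprint: str   # hash of the exact training snapshot
    feature_definitions: list
    hyperparameters: dict


def fingerprint(rows):
    """Deterministic SHA-256 fingerprint of a small, JSON-serializable snapshot."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def models_trained_on(records, dataset):
    """Answer: which model versions were trained on this dataset?"""
    return sorted(
        f"{r.model_name}:{r.model_version}" for r in records if r.training_dataset == dataset
    )


snapshot = [
    {"customer_id": 1, "tenure_months": 14},
    {"customer_id": 2, "tenure_months": 3},
]
record = ModelLineage(
    model_name="churn_prediction",
    model_version="2.3",
    training_dataset="customer_features_v4",
    dataset_fingerprint=fingerprint(snapshot),
    feature_definitions=["tenure_months"],
    hyperparameters={"max_depth": 6},
)
REGISTRY = [record]  # stand-in for a real model registry

print(models_trained_on(REGISTRY, "customer_features_v4"))
# A changed snapshot no longer matches the recorded fingerprint.
print(fingerprint(snapshot[:1]) == record.dataset_fingerprint)
```

For real training sets you would fingerprint a snapshot identifier or file manifest rather than the rows themselves, but the principle of pinning each model version to an exact, verifiable dataset state is the same.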

Bottom line

Data lineage used to be a compliance checkbox: document where data comes from, satisfy the auditor, move on. That version of lineage is no longer sufficient. The 2026 Gartner MQ for D&A Governance explicitly requires platforms to trace lineage across structured data, unstructured data, ML models, and AI-generated outputs. The EU AI Act makes model-level lineage a legal requirement for high-risk systems. Spending on AI governance is approaching half a billion dollars this year.

For data engineering teams, this means lineage is no longer a governance team’s problem. It is an infrastructure requirement that sits alongside data pipelines, feature stores, and model registries. The practical question is not whether to implement lineage, but how deep it needs to go: which standard flows off-the-shelf tools can cover, and where custom engineering is needed to trace through proprietary systems, industrial protocols, and ML-specific workflows.

Start with the regulated data flows. Automate capture where tools support it. Build custom for the critical flows where they don’t. And extend lineage to ML assets before the auditor asks for it.