A company running a large Oracle Database environment on AWS is typically paying three separate penalties without knowing it: a 2:1 licensing ratio that doubles the Oracle license count, egress fees that compound with data volume, and standard compute rates on infrastructure that has no awareness of Oracle’s query patterns. Moving the same workload to OCI eliminates all three. But OCI isn’t the right answer for every enterprise, and the decision is more nuanced than Oracle’s marketing suggests.

OCI compute is 57% cheaper than AWS EC2 for equivalent configurations. Block storage is 78% cheaper than AWS EBS. Data egress costs 13 times less, with 10 TB free globally every month. And for organizations already paying Oracle support fees, OCI has a financial lever that no other cloud offers: a rewards program that can reduce your Oracle support bill to zero.

This article covers the support math, the real AI workload cost differences, and the infrastructure details that compound at scale. We also cover where AWS is genuinely the better fit, because the answer isn’t always OCI.

Key takeaways

- The licensing and support angle is underappreciated: OCI’s 1:1 BYOL ratio combined with Oracle Support Rewards (up to $0.33 per dollar spent on OCI applied against your support bill) means large Oracle shops can recover significantly more value from OCI than the compute price gap suggests.

- Database performance isn’t close: Oracle Autonomous Database on Exadata delivers 25x lower IO latency than AWS RDS for Oracle and scan rates 384x faster. These are hardware-level differences.

- AI costs depend on scale: A production Llama 2 70B deployment on 4x A100s runs $8,838/month on OCI versus $13,570 on AWS. For managed model inference via API, AWS Bedrock is still the faster path.

- OCI’s multicloud model changes the decision: Oracle Database@AWS (GA since July 2025) and Oracle Database@Azure (33 regions) mean you don’t have to choose between platforms. Oracle’s multicloud database revenue grew 817% year-over-year in Q2 of fiscal 2026.

OCI vs AWS

Pricing reflects published list rates as of Q1 2026; actual costs vary by region, contract, and commitment tier.

| Category | OCI | AWS |
|---|---|---|
| Compute pricing | 57% cheaper than AWS EC2 (same spec, same region) | Higher list price; commitment discounts require 1-3yr lock-in |
| Block storage | 78% cheaper than AWS EBS; up to 1.5x IOPS included | Higher per-GB; IOPS billed separately |
| Data egress | 10 TB/month free globally; 13x cheaper beyond that | 100 GB/month free, then tiered per-GB charges; 10-30% regional premium outside US |
| Flexible compute | Scale by 1 OCPU + 1 GB independently; prevents overprovisioning | Fixed instance types only; often forces overprovisioning |
| Oracle Database | Autonomous DB + Exadata on purpose-built hardware | Oracle on RDS, general-purpose infrastructure, no Exadata |
| BYOL licensing | 1:1 core factor; Support Rewards reduce support bill up to 100% | 2:1 core factor, doubles Oracle license cost vs OCI |
| GPU for AI (large) | OCI Supercluster: up to 131,072 B200 GPUs with RDMA InfiniBand | Broader GPU lineup, more regions, mature managed tooling |
| Managed AI | Expanding; OCI Generative AI service available | Bedrock + SageMaker, most complete managed AI stack |

Database workloads: where OCI excels

Running Oracle Database on AWS and on OCI are not the same product. The infrastructure and performance are different, and for organizations with existing Oracle investments, the economics are substantially different. This is worth understanding in detail because it’s the most consequential part of the platform decision for most enterprises.

Oracle Exadata X9M on OCI delivers sub-19-microsecond IO latency, 25 times lower than AWS RDS for Oracle and 50 times lower than Azure SQL. Scan rate is 384 times faster than Amazon RDS. The Exadata X8M supports 12 million read IOPS and 5.6 million write IOPS; the maximum an AWS RDS instance supports is 80,000 IOPS. These differences are architectural. Exadata’s smart processing moves SQL execution closer to the data, drastically reducing data movement for both transactional and analytical workloads.

Oracle Autonomous Database adds self-tuning, self-patching, and automatic indexing on top of that hardware. Scaling CPU is an online operation with no downtime; AWS RDS still requires planned maintenance windows for vertical scaling.

For financial systems, operational databases, and high-volume transactional workloads, this combination of hardware and software produces real application throughput differences that show up in user experience and SLAs.

BCC Group ran Oracle’s ONE Platform on both OCI and AWS across NYSE, LSE, and Frankfurt exchange regions in 2025. Their published benchmark found OCI consistently faster and more reliable for latency-sensitive market data delivery, citing OCI’s non-oversubscribed network design with guaranteed, SLA-backed bandwidth and standardized pricing across regions as key structural advantages.

Why it matters: If you’re running Oracle Database on AWS today, you’re paying AWS infrastructure pricing, absorbing a 2:1 licensing penalty, and running on general-purpose hardware.

Moving Oracle workloads to OCI cuts costs and upgrades performance simultaneously. Migrating to an AWS-native database avoids the licensing cost but requires application refactoring for Oracle-specific features, a risk that’s often larger than it looks.

The licensing and support math

The two financial levers that make the biggest difference for Oracle-heavy enterprises, BYOL core factor and Oracle Support Rewards, rarely get proper treatment. Together they can represent more value than the compute discount entirely.

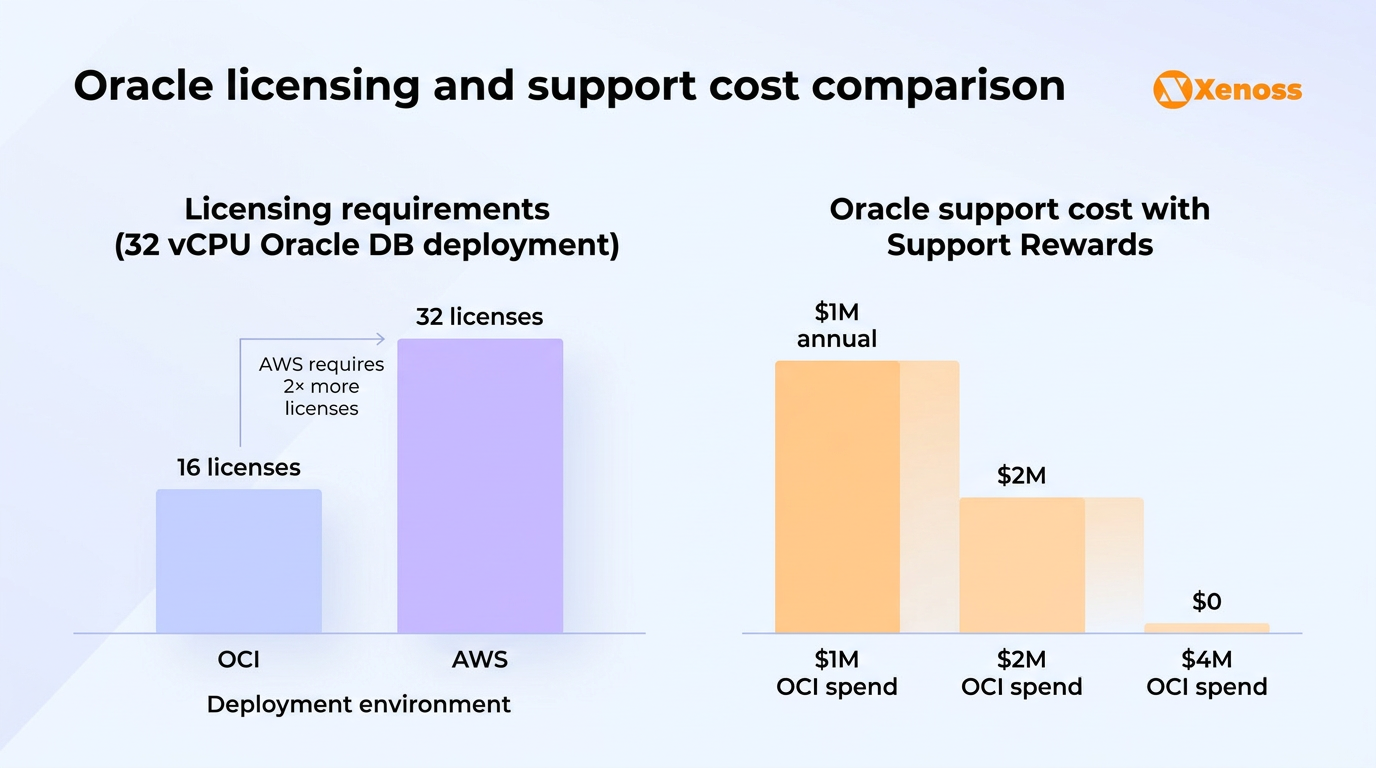

BYOL core factor: 1:1 vs 2:1

OCI uses a 1:1 core factor for Bring Your Own License deployments: one Oracle processor license covers one OCPU, where one OCPU equals two vCPUs. AWS enforces a 2:1 ratio, meaning the same license covers only half as much compute: one EC2 vCPU.

For an enterprise running a 32-vCPU Oracle Database instance: on OCI, that’s 16 OCPUs requiring 16 licenses; on AWS, that same workload requires 32 licenses. The difference compounds with cluster size.

For organizations already holding Oracle Database Enterprise Edition licenses at roughly $47,000 each, this alone drives six-figure annual differences on medium-scale deployments.
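The license arithmetic above can be sketched in a few lines. This is a minimal illustration that assumes the ratios exactly as this article states them (one license per OCPU on OCI, with one OCPU equal to two vCPUs, and one license per vCPU on AWS). The names `VCPUS_PER_LICENSE`, `licenses_required`, and `license_gap` are hypothetical, and the ratios should be checked against Oracle's current cloud licensing policy before being used for a real estimate.

```python
import math

# Ratios as described in this article (assumptions, not a licensing reference):
#   OCI BYOL: 1 license per OCPU, and 1 OCPU = 2 vCPUs
#   AWS BYOL: 1 license per vCPU
VCPUS_PER_LICENSE = {"oci": 2, "aws": 1}


def licenses_required(vcpus: int, platform: str) -> int:
    """Processor licenses needed for a vCPU count, rounded up to whole licenses."""
    return math.ceil(vcpus / VCPUS_PER_LICENSE[platform])


def license_gap(vcpus: int) -> int:
    """Extra licenses needed on AWS compared with OCI for the same vCPU count."""
    return licenses_required(vcpus, "aws") - licenses_required(vcpus, "oci")


for vcpus in (32, 64):
    print(
        f"{vcpus} vCPUs: OCI={licenses_required(vcpus, 'oci')}, "
        f"AWS={licenses_required(vcpus, 'aws')}, gap={license_gap(vcpus)}"
    )
```

For the 32-vCPU instance in the example, this gives 16 licenses on OCI and 32 on AWS, a gap of 16 licenses.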

Oracle Support Rewards: a benefit that no other cloud offers

Oracle’s Support Rewards program lets enterprises earn credits against their Oracle on-premises support bill based on OCI consumption. Standard customers earn $0.25 for every dollar spent on OCI. Customers on an Unlimited License Agreement (ULA) earn $0.33 per dollar. Those credits apply directly to Oracle technology support fees, for products including Oracle Database, Oracle WebLogic, and related middleware, down to zero.

Oracle’s own documentation gives an example: an enterprise with a $1M annual Oracle support bill that spends $2M on OCI earns $500K in rewards, cutting the support bill in half. Spending $4M on OCI wipes out the support bill entirely. For large Oracle shops paying $500K to $2M+ in annual support fees, this program changes the ROI calculation considerably, and it doesn’t exist on AWS, Azure, or Google Cloud.
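The rewards math is simple enough to check by hand, but a small sketch makes it easy to plug in your own numbers. It assumes the two reward rates quoted above ($0.25 standard, $0.33 for ULA customers) and that credits can reduce the support bill to zero but not below. The function names are illustrative, not part of any Oracle tooling.

```python
# Reward rates quoted in this article, in dollars earned per dollar of OCI spend.
REWARD_RATE = {"standard": 0.25, "ula": 0.33}


def support_rewards(oci_spend: float, support_bill: float, tier: str = "standard"):
    """Return (credits applied, remaining support bill) for a given OCI spend."""
    earned = oci_spend * REWARD_RATE[tier]
    applied = min(earned, support_bill)  # credits cannot push the bill below zero
    return applied, support_bill - applied


def spend_to_zero_support(support_bill: float, tier: str = "standard") -> float:
    """OCI spend at which earned credits fully offset the support bill."""
    return support_bill / REWARD_RATE[tier]


applied, remaining = support_rewards(2_000_000, 1_000_000)
print(f"Credits applied: ${applied:,.0f}, remaining support bill: ${remaining:,.0f}")
print(f"Spend needed to zero a $1M bill: ${spend_to_zero_support(1_000_000):,.0f}")
```

With the standard rate this reproduces the example: $2M of OCI spend offsets $500K of a $1M support bill, and $4M offsets all of it.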

Combining both levers: an enterprise with a 64-vCPU Oracle Database deployment and $800K in annual Oracle support costs would need twice as many licenses on AWS as on OCI, while also receiving no support credits. On OCI, the same deployment requires half the licenses, and a proportionate OCI spend can eliminate a significant portion of the support bill. The combined effect routinely outweighs the headline compute savings.

Why it matters: The BYOL ratio and Support Rewards are specific to Oracle workloads, so they don’t factor into a generic cloud cost comparison. But for enterprises with Oracle Database at the center of their stack, these two mechanisms alone can justify the platform decision before you’ve compared a single compute instance.

AI infrastructure: real workload costs

The AI infrastructure comparison between OCI and AWS depends heavily on what you’re building. For large-scale custom model training and inference on proprietary models, OCI’s economics are compelling. For managed access to foundation models and integrated ML pipelines, AWS is more capable today. The mistake is conflating the two.

For a concrete production benchmark: running Llama 2 70B on 4x A100 GPUs with 15 TB of monthly egress costs $8,838/month on OCI versus $13,570/month on AWS, a 35% difference driven by lower A100 instance pricing and OCI’s free egress tier. The OCI A100 VM runs at $2.95 per GPU-hour; the comparable AWS p4d configuration runs at $4.10 per GPU-hour. That gap widens with scale: the larger the GPU deployment and the more data it serves, the more the per-GPU and egress differences add up.
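As a rough check on these figures, the sketch below computes the percentage difference between the two quoted monthly totals and a compute-only estimate from the hourly rates. It assumes the quoted rates are per GPU per hour and that a month has 730 billable hours; both are assumptions, not figures from the benchmark. The compute-only numbers exclude egress, storage, and any other line items, so they are not expected to match the quoted totals exactly.

```python
HOURS_PER_MONTH = 730  # assumption: average billable hours in a month


def monthly_gpu_cost(gpus: int, rate_per_gpu_hour: float,
                     hours: float = HOURS_PER_MONTH) -> float:
    """Compute-only monthly cost for a fixed number of always-on GPUs."""
    return gpus * rate_per_gpu_hour * hours


def percent_saving(cheaper: float, pricier: float) -> float:
    """Saving of the cheaper option, as a percentage of the pricier one."""
    return (pricier - cheaper) / pricier * 100


# Quoted monthly totals from the benchmark above.
print(f"Saving on quoted totals: {percent_saving(8_838, 13_570):.1f}%")

# Compute-only estimates from the quoted per-GPU-hour rates (assumed units).
oci_compute = monthly_gpu_cost(4, 2.95)
aws_compute = monthly_gpu_cost(4, 4.10)
print(f"Compute only: OCI ${oci_compute:,.0f}, AWS ${aws_compute:,.0f}")
```

Separating the compute component from the total makes it easier to see how much of the gap comes from GPU pricing and how much from egress and other charges.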

At cluster scale, OCI Supercluster supports up to 131,072 NVIDIA B200 GPUs, 65,536 H200s, and 32,768 A100s within a single cluster connected by RDMA InfiniBand networking. That architecture is built for distributed training at a scale relevant to large language model development and inference infrastructure, not typical enterprise ML workloads.

For Xenoss clients exploring AI infrastructure for large-scale model fine-tuning, OCI’s cluster performance-to-cost ratio is often the deciding factor. Our analysis of the OpenAI-Oracle Stargate expansion covers the broader infrastructure investment direction Oracle is taking.

Where AWS holds a clear lead: the managed layer. Bedrock provides serverless access to foundation models from Anthropic, Meta, Mistral, and NVIDIA without infrastructure management. SageMaker handles end-to-end ML workflows with tooling that OCI’s generative AI service doesn’t match in maturity or breadth. For teams building applications on top of foundation models rather than training them, AWS is faster to production.

Why it matters: Before you compare GPU specs, be clear about what your team is doing. Running inference on a large proprietary model at scale: OCI wins on cost. Building an application that calls foundation models via API: AWS Bedrock is the more complete platform. Many enterprises assume they need the former when their actual workload is the latter.

Infrastructure economics: what compounds at scale

The per-unit pricing differences between OCI and AWS are meaningful. What’s less obvious is how several of OCI’s structural pricing decisions interact to create larger savings at scale.

OCI flexible compute shapes vs AWS fixed instance types

OCI lets you configure compute instances in 1 OCPU and 1 GB increments, scaling CPU and memory independently. AWS requires selecting from predetermined fixed instance types. In practice, that means AWS customers frequently overprovision: you need 10 GB of memory and 3 vCPUs, so you pick the next-larger instance type and pay for 16 GB and 4 vCPUs. OCI’s flexible shapes eliminate this systematically, right-sizing every instance to the workload. Across a fleet of hundreds of instances, this prevents a consistent layer of waste that doesn’t show up in per-instance comparisons.
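The overprovisioning effect can be illustrated with a small sketch: given a requirement, pick the smallest shape from a fixed catalog that satisfies it and measure what is left unused. The catalog below is hypothetical and deliberately simplified; it does not represent any provider's actual instance lineup.

```python
from typing import NamedTuple


class Shape(NamedTuple):
    name: str
    vcpus: int
    memory_gb: int


# Hypothetical fixed-size catalog, for illustration only.
FIXED_CATALOG = [
    Shape("small", 2, 8),
    Shape("medium", 4, 16),
    Shape("large", 8, 32),
]


def smallest_fixed_fit(vcpus: int, memory_gb: int, catalog=FIXED_CATALOG) -> Shape:
    """Smallest catalog shape that meets both the CPU and the memory requirement."""
    candidates = [s for s in catalog if s.vcpus >= vcpus and s.memory_gb >= memory_gb]
    if not candidates:
        raise ValueError("no shape in the catalog fits this requirement")
    return min(candidates, key=lambda s: (s.vcpus, s.memory_gb))


def unused_capacity(vcpus: int, memory_gb: int, shape: Shape) -> tuple[int, int]:
    """(unused vCPUs, unused GB of memory) when the workload runs on this shape."""
    return shape.vcpus - vcpus, shape.memory_gb - memory_gb


need_vcpus, need_memory = 3, 10
shape = smallest_fixed_fit(need_vcpus, need_memory)
spare_vcpus, spare_memory = unused_capacity(need_vcpus, need_memory, shape)
print(f"Fixed fit: {shape.name} ({shape.vcpus} vCPUs, {shape.memory_gb} GB)")
print(f"Unused: {spare_vcpus} vCPU, {spare_memory} GB")
```

For the 3 vCPU / 10 GB requirement from the example, the fixed catalog lands on the 4 vCPU / 16 GB shape, leaving 1 vCPU and 6 GB unused. A flexible shape would be sized close to the requirement instead, subject to the provider's own increments.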

Consistent global pricing vs AWS regional premiums

OCI charges the same rate for every service in every region globally, whether public, sovereign, or dedicated. AWS and Azure charge 10-30% more in non-US regions: London, Frankfurt, Tokyo, and Sao Paulo all carry regional premiums. For the same 10 TB of egress, AWS in Zurich costs 17% more than AWS in Northern Virginia. For multiregional enterprises running workloads in Europe or Asia-Pacific, this differential compounds across every service in every region, and it’s invisible in single-region pricing comparisons.

Egress: the cost that scales with success

OCI includes 10 TB of free outbound data transfer per month globally. AWS offers 100 GB free, then charges for every gigabyte beyond that. At 50 TB/month, OCI charges roughly $400-800 (40 TB above the free tier at $0.01-0.02/GB); the same workload on AWS runs $3,900-4,300 at tiered rates. For data-intensive applications (analytics pipelines, model serving, large-scale API responses), egress is often the largest single cloud cost, and it’s the one most teams underestimate at architecture time. Our data engineering trends overview covers how this shapes multi-cloud architecture decisions.
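The 50 TB comparison can be reproduced with a generic tiered-pricing function. The OCI inputs (10 TB free, $0.01-0.02/GB) come from the paragraph above. The AWS tier sizes and per-GB rates in the sketch are illustrative assumptions chosen to be consistent with the quoted range, not an authoritative price list, and 1 TB is treated as 1,000 GB for simplicity.

```python
def tiered_cost(total_gb: float, free_gb: float, tiers) -> float:
    """Cost of total_gb of egress after a free allowance.

    tiers is an ordered list of (tier_size_gb, rate_per_gb); a size of None
    means the tier is unbounded and absorbs all remaining volume.
    """
    remaining = max(total_gb - free_gb, 0.0)
    cost = 0.0
    for size_gb, rate in tiers:
        chunk = remaining if size_gb is None else min(remaining, size_gb)
        cost += chunk * rate
        remaining -= chunk
        if remaining <= 0:
            break
    return cost


EGRESS_GB = 50_000  # 50 TB/month, using 1 TB = 1,000 GB

# OCI: 10 TB free, then a flat per-GB rate (low and high ends of the quoted range).
oci_low = tiered_cost(EGRESS_GB, 10_000, [(None, 0.01)])
oci_high = tiered_cost(EGRESS_GB, 10_000, [(None, 0.02)])

# AWS: 100 GB free, then tiered rates. Tier sizes and rates are assumptions.
AWS_TIERS = [(10_000, 0.09), (40_000, 0.085), (None, 0.07)]
aws = tiered_cost(EGRESS_GB, 100, AWS_TIERS)

print(f"OCI: ${oci_low:,.0f} to ${oci_high:,.0f}")
print(f"AWS: ${aws:,.0f}")
```

Swapping in your own monthly volume and the current published rates for your regions gives a quick first-pass egress estimate before a full TCO model.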

Why it matters: None of these individual factors is dramatic in isolation. Flexible shapes save 10-15% compared to overprovisioned fixed instances. Global pricing consistency saves 10-30% in non-US regions. Free egress saves thousands per month at scale. Together they produce a compounding TCO gap that grows with workload size. That’s why the cost difference between OCI and AWS often looks larger in production than in pre-deployment estimates.

Multicloud: the option that removes the binary choice

Oracle’s fastest-growing business in fiscal 2026 is the multicloud model, with database revenue up 817% year-over-year in Q2.

Rather than migrating off one platform onto another, enterprises are running Oracle Database inside their existing AWS or Azure environment through private interconnect agreements.

Oracle Database@AWS became generally available in July 2025 in US East and US West, with three additional regions (Ohio, Frankfurt, and Tokyo) added in December 2025 and 17 more on the roadmap.

Oracle Database@Azure is available in 33 regions. Both follow the same model: Oracle manages the database software and hardware inside the hyperscaler’s data center; the hyperscaler provides the connectivity and data center infrastructure.

Oracle Multicloud Universal Credits, launched in October 2025, let enterprises purchase a single credit pool usable across OCI, Oracle Database@AWS, @Azure, and @Google Cloud.

For a data engineering team running Redshift, S3, and SageMaker on AWS, Oracle Database@AWS means adding Oracle Autonomous Database with Exadata-class performance inside the same environment, billed through the same AWS account, without managing a separate cloud relationship. The performance gap on the database layer closes; the egress pricing still follows AWS rates.

Why it matters: The practical implication is that ‘which cloud should we choose for Oracle’ is increasingly the wrong frame. The multicloud model lets engineering teams pick the database platform on its merits and run it wherever the rest of the stack lives. Most of the migration risk goes away when you’re not migrating the application tier.

When to choose OCI vs AWS

There’s no universal answer, but the decision follows consistent patterns across workloads.

Choose OCI when:

- Your applications depend on Oracle-specific features: PL/SQL, Oracle RAC, Data Guard, Exadata performance, or Autonomous Database capabilities.

- You have significant Oracle BYOL licenses and Oracle support contracts. The 1:1 core factor and Support Rewards combined can cut total Oracle costs more than compute savings alone.

- Your workload has high monthly egress volumes. The free 10 TB tier and lower per-GB rates produce compounding savings at scale.

- You need large GPU clusters with RDMA networking for custom model training, particularly at the scale of four or more A100s or H100s where OCI’s economics shift favorably.

- You operate in multiple regions and want consistent pricing globally, without regional premiums.

Choose AWS when:

- Your team is building on AWS-native services: SageMaker, Bedrock, Lambda, Glue, or Redshift form the backbone of your architecture.

- You need access to foundation models via managed API (Bedrock) and don’t plan to train or fine-tune at scale.

- You require coverage in 25+ AWS regions or need geographic redundancy across more points of presence than OCI currently offers.

- Your Oracle Database dependency is low and you’re comfortable running Oracle on RDS or migrating to a cloud-native database engine.

Use the multicloud model when:

- You want Exadata-class database performance without a full platform migration. Oracle Database@AWS and Oracle Database@Azure give you Oracle’s database technology inside your existing cloud.

- Your team has deep AWS expertise and operational continuity matters more than the infrastructure savings from a full OCI migration.

Bottom line

OCI’s case for Oracle-centric enterprises is stronger than most comparisons convey, and the two financial levers that make it strongest, the 1:1 BYOL core factor and the Oracle Support Rewards program, are the pieces that most articles skip. When you combine lower compute and storage rates, free egress, flexible compute shapes, consistent global pricing, and the ability to earn $0.25-$0.33 per OCI dollar back against your Oracle support bill, the TCO gap between OCI and AWS for Oracle-heavy workloads is substantially larger than a compute price comparison suggests.

AWS is still the better platform for teams building on managed AI services, native AWS tooling, or architectures that don’t depend on Oracle Database. And for organizations that want Oracle’s database performance without a platform migration, Oracle Database@AWS and the multicloud model increasingly resolve the trade-off. Oracle’s 817% multicloud growth in Q2 of fiscal 2026 reflects enterprises discovering that the either-or choice is largely gone. The right decision starts with your actual workload, your Oracle license position, and your egress profile, not vendor preference.

If you’re building out or inheriting a cloud architecture with Oracle at the center of it, the cost model is worth building properly. The numbers are often more favorable than teams expect once every lever is included.