Acceptance criteria define the conditions a feature, system, or model must meet before stakeholders consider it done. They are the contract between what the team builds and what the business expects to receive. When acceptance criteria are specific and testable, teams ship with confidence. When they are vague, projects drift into rework, scope creep, and missed deadlines.

The cost of getting this wrong is well documented. Despite global IT spending tripling to $5.6 trillion since 2005, software project success rates have not improved in two decades. The U.S. alone has spent over $10 trillion on failed IT projects in that period. Requirements problems are at the center of this failure: only 35% of projects worldwide finish successfully, with 12% of total project investment lost to poor performance.

For AI and machine learning projects, the stakes are even higher. A systematic mapping study on requirements engineering for AI found that 87% of AI projects never make it into production, with requirements specification cited as one of the most prevalent challenges. Traditional acceptance criteria formats assume deterministic, binary outcomes. AI models produce probabilistic results that require a fundamentally different approach to defining “done.”

This article covers the standard formats every team should know, then goes where most guides stop: how to write acceptance criteria for ML models, data pipelines, and enterprise AI systems where the rules of “pass or fail” don’t apply the same way.

Summary

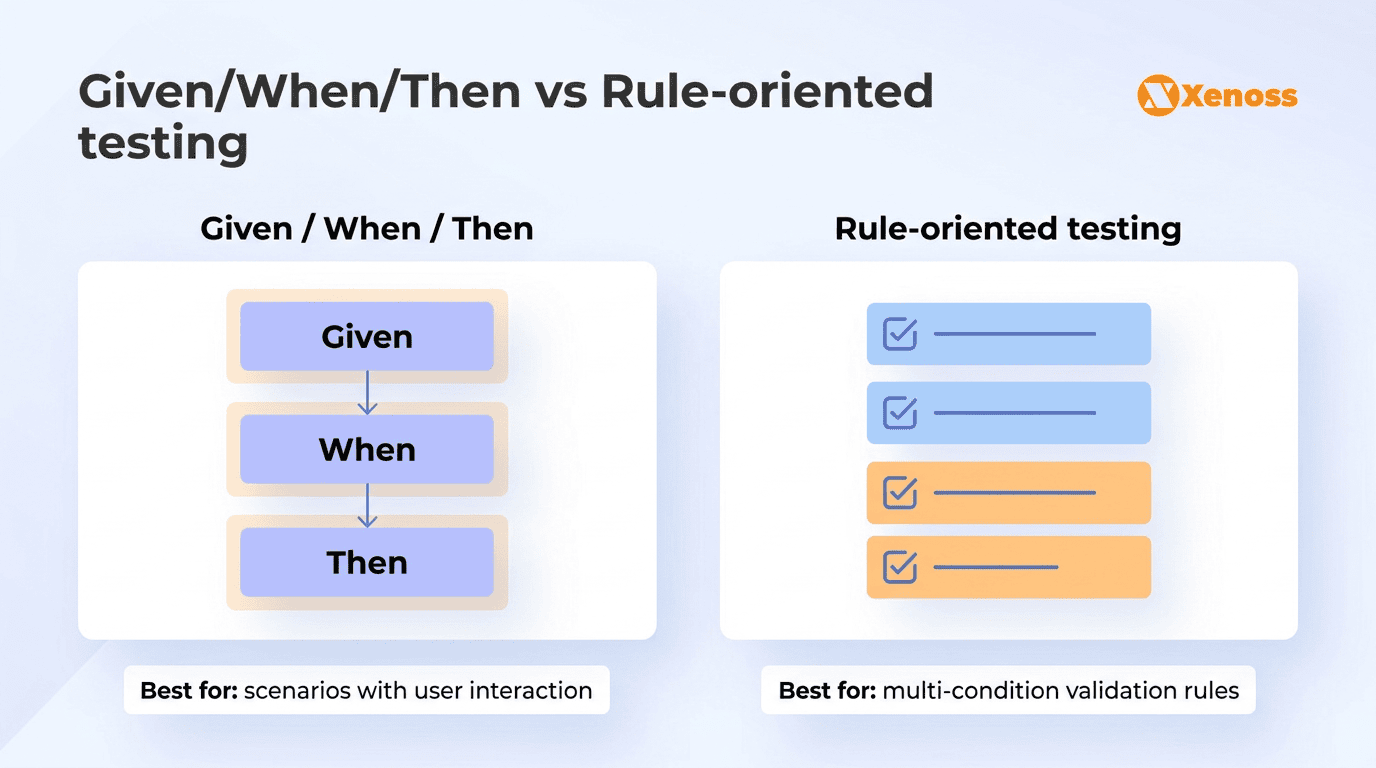

- Acceptance criteria are the testable conditions that define when a user story, feature, or system is complete. The two most common formats are Given/When/Then (scenario-based) and rule-oriented checklists.

- For AI and ML projects, traditional binary pass/fail criteria don’t work. Teams need threshold-based acceptance criteria across four layers: business outcomes, model performance, data quality, and operational readiness.

- Vague acceptance criteria are the single largest driver of project rework. 50% of all rework traces directly to requirements issues, and 80% of respondents in industry surveys report spending half their time on rework caused by unclear requirements.

- AI-assisted tools for requirements validation are showing early promise, with research indicating 40 to 65% reductions in requirements-related defects for organizations using AI-powered validation.

What are acceptance criteria in software development

In agile development, acceptance criteria are attached to user stories and serve three purposes:

- They define scope: what the feature includes and, just as importantly, what it does not.

- They provide the basis for testing: QA teams derive test cases directly from the acceptance criteria.

- They align expectations: when a developer and a product owner disagree on whether a feature is complete, the acceptance criteria are the arbiter.

Good acceptance criteria are specific enough to verify, independent of implementation details, and written from the user’s or system’s perspective rather than from the developer’s. They describe what the system should do, not how it should do it.

Why this matters: Without clear acceptance criteria, development teams are building to assumptions. More than 80% of project participants feel the requirements process does not articulate the needs of the business, and only 23% of respondents say project managers and stakeholders agree on when a project is done. Acceptance criteria exist to close that gap.

How to write acceptance criteria: formats and examples

Two formats dominate in practice. Most teams use one or both, depending on the complexity of the feature.

Given/When/Then (scenario-based format)

The Given/When/Then format, rooted in behavior-driven development (BDD), structures each criterion as a scenario with a precondition, an action, and an expected result. It reads like a test case, which makes it easy to automate and unambiguous to verify.

Example: User login

- Given a registered user is on the login page

- When they enter valid credentials and click “Sign in”

- Then they are redirected to the dashboard and see a personalized welcome message

Example: Payment processing

- Given a customer has items in their cart totaling over $0

- When they submit a payment with a valid credit card

- Then the order is confirmed, payment is captured, and a confirmation email is sent within 60 seconds

This format works best for features with clear user interactions and predictable flows. It pairs naturally with automated testing frameworks like Cucumber and SpecFlow, which parse Given/When/Then scenarios directly into executable tests.
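To show how closely a scenario maps onto a test, here is a minimal Python sketch of the user login example written as a plain test function. The `sign_in` function, the `LoginResult` type, and the in-memory user store are hypothetical stand-ins for a real application, not part of any framework; tools such as Cucumber or SpecFlow would bind the same three steps to step definitions instead.

```python
from dataclasses import dataclass


@dataclass
class LoginResult:
    redirect_to: str
    message: str


# Hypothetical in-memory user store, for illustration only.
REGISTERED_USERS = {"ada@example.com": {"password": "correct-horse", "name": "Ada"}}


def sign_in(email: str, password: str) -> LoginResult:
    """Hypothetical login handler standing in for the real application."""
    user = REGISTERED_USERS.get(email)
    if user is None or user["password"] != password:
        return LoginResult(redirect_to="/login", message="Invalid email or password")
    return LoginResult(redirect_to="/dashboard", message=f"Welcome back, {user['name']}!")


def test_registered_user_signs_in_with_valid_credentials() -> None:
    # Given a registered user is on the login page
    email, password = "ada@example.com", "correct-horse"

    # When they enter valid credentials and click "Sign in"
    result = sign_in(email, password)

    # Then they are redirected to the dashboard and see a personalized welcome message
    assert result.redirect_to == "/dashboard"
    assert "Ada" in result.message
```

Each comment repeats one clause of the criterion, so a reviewer can check the test against the story without reading the implementation.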

Rule-oriented (checklist format)

The rule-oriented format lists conditions as a set of rules that the feature must satisfy. It’s more flexible than Given/When/Then and works well for features that have multiple independent conditions rather than a single linear flow.

Example: Password reset feature

- The reset link expires after 24 hours

- The new password must meet the security policy (minimum 12 characters, one uppercase, one number, one special character)

- The system sends a confirmation email after a successful password change

- Previous sessions are invalidated after the password is changed
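Because each rule in a checklist is independent, each one can be checked by its own small function. The sketch below covers the first two rules of the password reset example. The function names are hypothetical, "special character" is assumed to mean ASCII punctuation, and a link that is exactly 24 hours old is assumed to be expired; a real security policy would need to state both points explicitly.

```python
import string
from datetime import datetime, timedelta

RESET_LINK_TTL = timedelta(hours=24)
MIN_PASSWORD_LENGTH = 12


def password_policy_violations(password: str) -> list[str]:
    """Return the policy rules the password breaks (empty list means it passes)."""
    violations = []
    if len(password) < MIN_PASSWORD_LENGTH:
        violations.append("shorter than 12 characters")
    if not any(c.isupper() for c in password):
        violations.append("no uppercase letter")
    if not any(c.isdigit() for c in password):
        violations.append("no number")
    # Assumption: "special character" means ASCII punctuation.
    if not any(c in string.punctuation for c in password):
        violations.append("no special character")
    return violations


def reset_link_is_valid(issued_at: datetime, now: datetime) -> bool:
    """Assumption: a link that is exactly 24 hours old counts as expired."""
    return now - issued_at < RESET_LINK_TTL
```

Returning the list of violations, rather than a single boolean, keeps every rule individually reportable at sign-off.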

In enterprise environments, teams often combine both formats: Given/When/Then for the primary user flows, and rule-oriented lists for edge cases, validation rules, and non-functional requirements like performance thresholds and security constraints.

Acceptance criteria for AI and machine learning projects

Standard formats assume that a feature either works or it doesn’t: the button redirects to the right page, the email is sent, the field validates correctly.

AI and ML systems operate differently. A fraud detection model doesn’t “work or not work.” It produces predictions with varying degrees of accuracy, and the acceptable threshold depends on the business context, the cost of false positives vs. false negatives, the latency budget, and the quality of the underlying data.

Writing “the model should be accurate” as an acceptance criterion is the equivalent of writing “the software should work well” for a traditional feature. It is technically a requirement but practically useless for engineering, testing, or sign-off.

Xenoss engineers use what we call the Four-Layer Acceptance Framework for AI projects. It structures acceptance criteria across four distinct layers, each with its own metrics and thresholds. This approach reflects the reality that an ML model can perform well on accuracy but fail on latency, or pass all technical benchmarks but miss the business outcome it was built to improve.

| Layer | What it measures | Example acceptance criteria |
|---|---|---|
| Business outcome | Whether the AI system delivers the business result it was designed to achieve | The churn prediction model must identify at least 70% of customers who cancel within 90 days, enabling the retention team to reduce churn by 5% quarter-over-quarter |
| Model performance | Technical metrics that evaluate the model’s prediction quality | Precision ≥ 85%, Recall ≥ 70%, F1 score ≥ 0.77 on the holdout test set. Inference latency < 200ms at the 95th percentile |
| Data quality | The integrity, freshness, and completeness of data feeding the model | Training data must contain ≥ 12 months of transaction history. No single feature may have > 5% missing values. Data refresh latency must not exceed 4 hours |
| Operational readiness | Infrastructure, monitoring, and reliability requirements for production deployment | Model serving endpoint must maintain 99.9% uptime. Drift detection alerts must fire within 1 hour of distribution shift. Rollback to previous model version must complete within 15 minutes |

Why this matters: ML acceptance criteria should be structured as progressive milestones defined by explicit evaluation metrics and threshold ranges, not binary pass/fail conditions, because “the model behaves as a learned specification derived from data” rather than a deterministic codebase.

For teams building enterprise AI systems across manufacturing, finance, or healthcare, the operational readiness layer is often the one that gets neglected. A model that performs well in a notebook but has no drift monitoring, no rollback procedure, and no latency SLA is not production-ready, no matter how good the F1 score looks.
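Threshold-based criteria like the ones in the table can be expressed as data and checked automatically. The following is a minimal sketch, not a prescribed implementation of the framework: the thresholds are copied from the example table, while the metric names, the `Criterion` type, and the `failed_criteria` function are hypothetical names chosen for illustration.

```python
import operator
from typing import Callable, NamedTuple


class Criterion(NamedTuple):
    layer: str
    metric: str
    compare: Callable[[float, float], bool]
    threshold: float


# Thresholds come from the example table; metric names are hypothetical.
CRITERIA = [
    Criterion("business outcome", "churners_identified_fraction", operator.ge, 0.70),
    Criterion("model performance", "precision", operator.ge, 0.85),
    Criterion("model performance", "recall", operator.ge, 0.70),
    Criterion("model performance", "f1", operator.ge, 0.77),
    Criterion("model performance", "latency_p95_ms", operator.lt, 200),
    Criterion("data quality", "training_history_months", operator.ge, 12),
    Criterion("data quality", "max_feature_missing_fraction", operator.le, 0.05),
    Criterion("data quality", "data_refresh_latency_hours", operator.le, 4),
    Criterion("operational readiness", "uptime_fraction", operator.ge, 0.999),
    Criterion("operational readiness", "drift_alert_delay_minutes", operator.le, 60),
    Criterion("operational readiness", "rollback_minutes", operator.le, 15),
]


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def failed_criteria(measured: dict[str, float]) -> list[str]:
    """Return one message per criterion that is unmet or has no measurement."""
    failures = []
    for c in CRITERIA:
        value = measured.get(c.metric)
        if value is None:
            failures.append(f"[{c.layer}] {c.metric}: no measurement")
        elif not c.compare(value, c.threshold):
            failures.append(f"[{c.layer}] {c.metric}: {value:.3f} vs threshold {c.threshold}")
    return failures
```

One detail this makes visible: a model sitting exactly at the precision and recall floors (0.85 and 0.70) has an F1 of about 0.768, so the F1 ≥ 0.77 criterion is a separate and slightly stricter gate rather than a restatement of the other two. Treating a missing measurement as a failure also means a layer cannot pass simply because nobody measured it.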

Acceptance criteria anti-patterns that drive project failure

Understanding what good acceptance criteria look like is helpful. Understanding what bad acceptance criteria look like, and the specific damage they cause, is more useful. These are the patterns Xenoss engineers see most frequently in enterprise projects.

- The “should work correctly” criterion. Acceptance criteria like “the system should handle errors gracefully” or “the dashboard should load quickly” are untestable. They mean different things to different people, and they guarantee a dispute at sign-off. A testable alternative: “The dashboard initial load completes in under 3 seconds on a 4G connection with up to 10,000 records.”

- Implementation disguised as criteria. Criteria like “Use a Redis cache for session storage” or “Implement using a microservices architecture” dictate the how instead of the what. This locks teams into specific solutions before they’ve evaluated alternatives. Acceptance criteria should describe the outcome: “Session data must be retrievable within 50ms from any application instance.” The engineering team decides whether Redis, Memcached, or another solution meets that threshold.

- Missing edge cases and negative paths. Teams often write acceptance criteria only for the happy path: the user enters valid data, the system processes it, everything works. But production systems face invalid inputs, network timeouts, concurrent requests, and malformed data constantly. Acceptance criteria should explicitly cover what happens when things go wrong: “Given the payment gateway returns a timeout, When the user retries, Then the system does not create a duplicate charge.”

- Scope-less criteria for AI models. The most common anti-pattern in machine learning projects is the open-ended accuracy target: “Improve model accuracy.” Without a threshold, a dataset boundary, and a time constraint, data science teams can iterate indefinitely, chasing marginal gains that don’t move the business needle.

As one product manager writing about ML requirements on Medium put it, the acceptance criteria for a model must include both a metric target and a time boundary:

“Decrease word error rate by 3%, but if we don’t achieve it in two weeks, we pivot to a different approach.”

Why this matters: These anti-patterns are not theoretical. 80% of software project failures stem from requirement-related issues.

Every dollar invested in improving requirements processes returns between $3.30 and $7.50 in reduced maintenance costs and rework. The most cost-effective intervention in any software or AI project is writing better acceptance criteria before a single line of code is written.
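Negative-path criteria are as testable as happy-path ones. As an illustration, the duplicate-charge criterion from the anti-pattern list can be written as a test against a fake gateway. Everything named here is hypothetical: `FakeGateway`, `GatewayTimeout`, and the idempotency key are one common way to make the scenario reproducible, not something the criterion itself requires.

```python
class GatewayTimeout(Exception):
    """Raised when the (fake) gateway does not answer in time."""


class FakeGateway:
    """Hypothetical gateway that records a charge but times out on the first call."""

    def __init__(self) -> None:
        self.charges: dict[str, int] = {}
        self._timed_out_once = False

    def charge(self, idempotency_key: str, amount_cents: int) -> str:
        # A repeated key never creates a second charge.
        self.charges.setdefault(idempotency_key, amount_cents)
        if not self._timed_out_once:
            self._timed_out_once = True
            raise GatewayTimeout("no response from gateway")
        return "confirmed"


def test_retry_after_timeout_does_not_duplicate_charge() -> None:
    gateway = FakeGateway()
    order_key = "order-1001"

    # Given the payment gateway returns a timeout
    timed_out = False
    try:
        gateway.charge(order_key, 4999)
    except GatewayTimeout:
        timed_out = True
    assert timed_out

    # When the user retries
    status = gateway.charge(order_key, 4999)

    # Then the system does not create a duplicate charge
    assert status == "confirmed"
    assert len(gateway.charges) == 1
```

The assertions check only the outcome (one charge exists), so the criterion stays independent of how the production system prevents duplicates.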

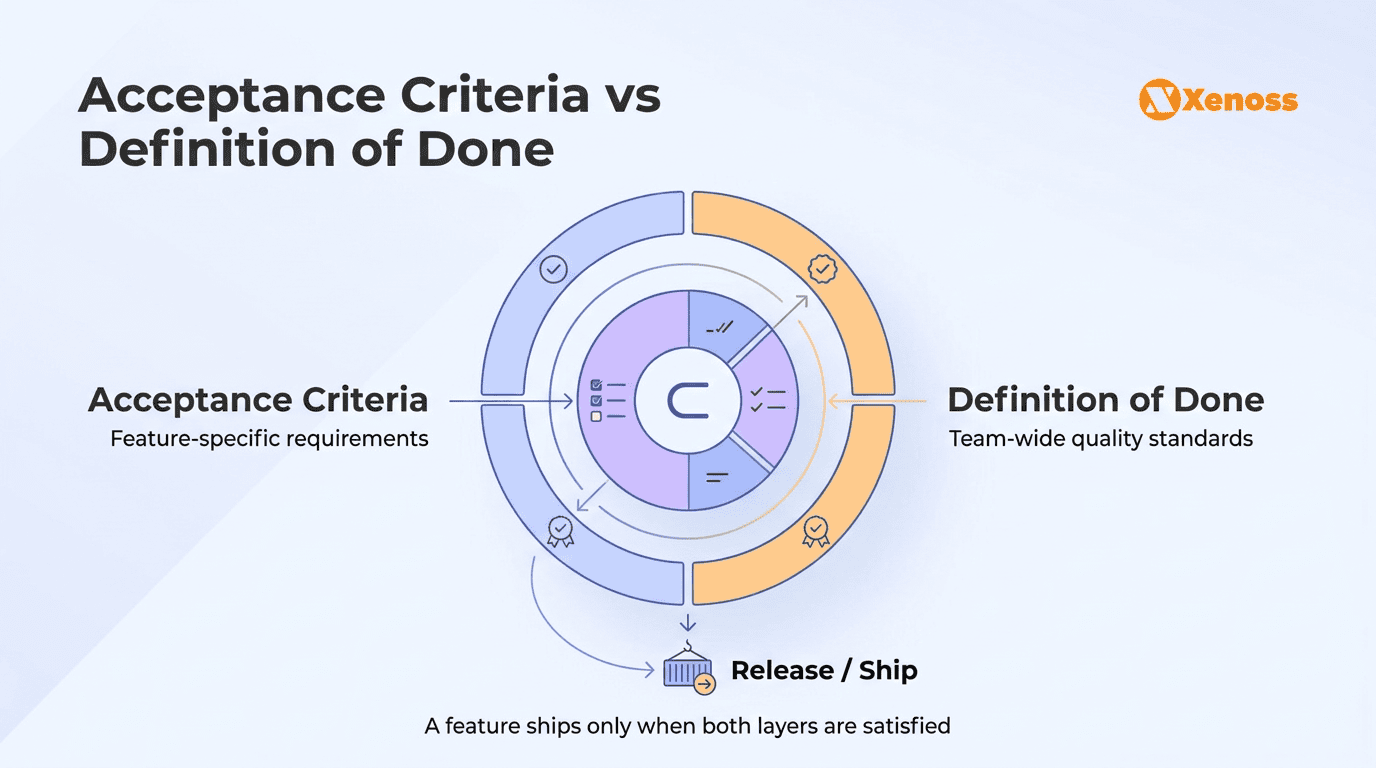

Acceptance criteria vs definition of done

These two concepts are frequently confused, but they operate at different levels. Acceptance criteria are story-specific: they define what a particular feature or user story must do to be considered complete. The definition of done is team-wide: it defines the quality gates that every work item must pass before it can be released, regardless of the feature.

A definition of done might include: code review completed, unit test coverage above 80%, documentation updated, security scan passed, and deployment to staging verified. These conditions apply to every story the team delivers. Acceptance criteria, by contrast, describe the specific behavior of the feature being built: “When the user uploads a CSV file larger than 50MB, the system displays a progress bar and completes processing within 120 seconds.”

In practice, a feature is complete when it satisfies both the story’s acceptance criteria (what this specific feature does) and the team’s definition of done (the quality bar every feature must clear). Conflating the two leads to either redundant criteria in every story or, worse, quality gates that are assumed but never verified.

Writing acceptance criteria for data pipelines and integrations

Data pipeline projects sit in a middle ground between traditional software and AI: the logic is deterministic (transformations, joins, loads), but the inputs are unpredictable (upstream schema changes, data quality degradation, volume spikes). Acceptance criteria for pipelines need to account for both.

Effective pipeline acceptance criteria cover four dimensions:

- Completeness. 100% of source records for the reporting period must be present in the destination table within 2 hours of the extraction window closing.

- Freshness. The dashboard must reflect data no older than 4 hours. Pipeline latency from source commit to warehouse availability must not exceed 90 minutes.

- Schema compliance. The pipeline must validate incoming data against the expected schema and route non-conforming records to a dead letter queue with full error context.

- Failure handling. If a source system is unavailable, the pipeline must retry 3 times with exponential backoff, then alert the on-call engineer and resume automatically when the source recovers, without producing duplicate records.
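Three of these dimensions reduce to small, checkable functions. The sketch below assumes records carry a unique ID and that an unavailable source surfaces as a `ConnectionError`; the function names, the one-second base delay, and the injected `sleep` parameter are illustrative choices, not part of any particular pipeline tool. Alerting the on-call engineer is left to the caller that catches the final exception.

```python
import time
from datetime import datetime, timedelta
from typing import Callable, TypeVar

T = TypeVar("T")


def missing_record_ids(source_ids: set[str], destination_ids: set[str]) -> set[str]:
    """Completeness: IDs present in the source but absent from the destination."""
    return source_ids - destination_ids


def is_fresh(
    latest_loaded_at: datetime,
    now: datetime,
    max_age: timedelta = timedelta(hours=4),
) -> bool:
    """Freshness: the newest loaded record is no older than max_age."""
    return now - latest_loaded_at <= max_age


def run_with_retries(
    extract: Callable[[], T],
    retries: int = 3,
    base_delay_seconds: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Failure handling: one initial attempt plus `retries` retries, doubling the delay."""
    for attempt in range(retries + 1):
        try:
            return extract()
        except ConnectionError:
            if attempt == retries:
                raise  # retries exhausted; the caller alerts the on-call engineer
            sleep(base_delay_seconds * 2 ** attempt)
    raise ValueError("retries must be non-negative")
```

Comparing ID sets rather than row counts means that duplicates in the destination cannot mask missing records, which also supports the no-duplicates requirement in the failure handling criterion.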

Why this matters: For organizations building data engineering infrastructure that feeds AI models, analytics dashboards, or regulatory reporting systems, vague pipeline criteria like “data should be fresh” or “pipeline should be reliable” create the same class of failures as vague software criteria. Defining specific thresholds for completeness, freshness, and failure handling turns pipeline quality from an aspiration into something the team can test, monitor, and enforce.

How AI tools help teams write and validate acceptance criteria

Requirements validation is emerging as one of the practical, low-risk applications of AI in the software development lifecycle. Rather than replacing product managers or business analysts, AI tools act as a quality layer that catches ambiguity, inconsistency, and gaps before the criteria reach the development team.

Research on NLP-based validation of acceptance criteria in agile projects shows that machine learning models (particularly support vector machines) achieved over 60% accuracy in classifying whether acceptance criteria met quality standards. While that is not production-grade for autonomous validation, it is effective as a review assistant that flags criteria likely to cause problems.

Practical applications of AI in acceptance criteria workflows include flagging vague language (“should handle gracefully,” “should be fast”) and suggesting specific, measurable alternatives; identifying missing negative-path coverage by analyzing the story context; detecting inconsistencies between acceptance criteria within the same epic or across dependent stories; and generating draft Given/When/Then scenarios from natural language descriptions that product owners can refine.
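The first of these applications, flagging vague language, can be approximated without any model at all. The sketch below is a deliberately simple keyword heuristic, far cruder than the NLP classifiers discussed above, and is meant only to show the shape of such a check. The pattern list and the `flag_vague_terms` function are hypothetical and would need tuning for a real backlog.

```python
import re

# Hypothetical starter list of phrases that usually signal an untestable criterion.
VAGUE_PATTERNS = [
    r"\bgracefully\b",
    r"\bquickly\b",
    r"\bfast\b",
    r"\bworks? (?:well|correctly)\b",
    r"\breliable\b",
    r"\bfresh\b",
]


def flag_vague_terms(criterion: str) -> list[str]:
    """Return the vague phrases found in a criterion, in pattern-list order."""
    found = []
    for pattern in VAGUE_PATTERNS:
        match = re.search(pattern, criterion, re.IGNORECASE)
        if match:
            found.append(match.group(0).lower())
    return found
```

Run against the examples from this article, "the dashboard should load quickly" is flagged for "quickly", while the rewritten version with a 3-second threshold passes with no flags. A check like this will miss vague criteria that avoid the listed words, which is the gap model-based tools aim to close.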

Why this matters: According to Forrester’s analysis, organizations using AI for requirements validation experience 40 to 65% reductions in requirements-related defects. As AI-assisted development tools become standard in engineering workflows, extending that assistance to requirements quality is a logical next step, especially for teams managing complex enterprise AI projects where the cost of a requirements misunderstanding can be measured in months of wasted model training.

Bottom line

Acceptance criteria are one of the cheapest interventions in software and AI development, and one of the most consistently underinvested. The time spent writing specific, testable, threshold-based criteria before development begins pays for itself many times over in reduced rework, fewer sign-off disputes, and faster delivery cycles.

For traditional software, the Given/When/Then and rule-oriented formats remain effective and well-supported by testing frameworks. For AI and ML projects, teams need to move beyond binary pass/fail thinking and adopt layered criteria that cover business outcomes, model performance, data quality, and operational readiness. The Four-Layer Acceptance Framework gives engineering leaders and product managers a practical structure for bridging the gap between what a model can do technically and what the business needs it to deliver.

Start with the anti-patterns. Audit your current acceptance criteria for vague language, missing edge cases, implementation details disguised as requirements, and open-ended AI targets without time or metric boundaries. Fixing those alone will improve delivery predictability more than any process change or tool adoption.