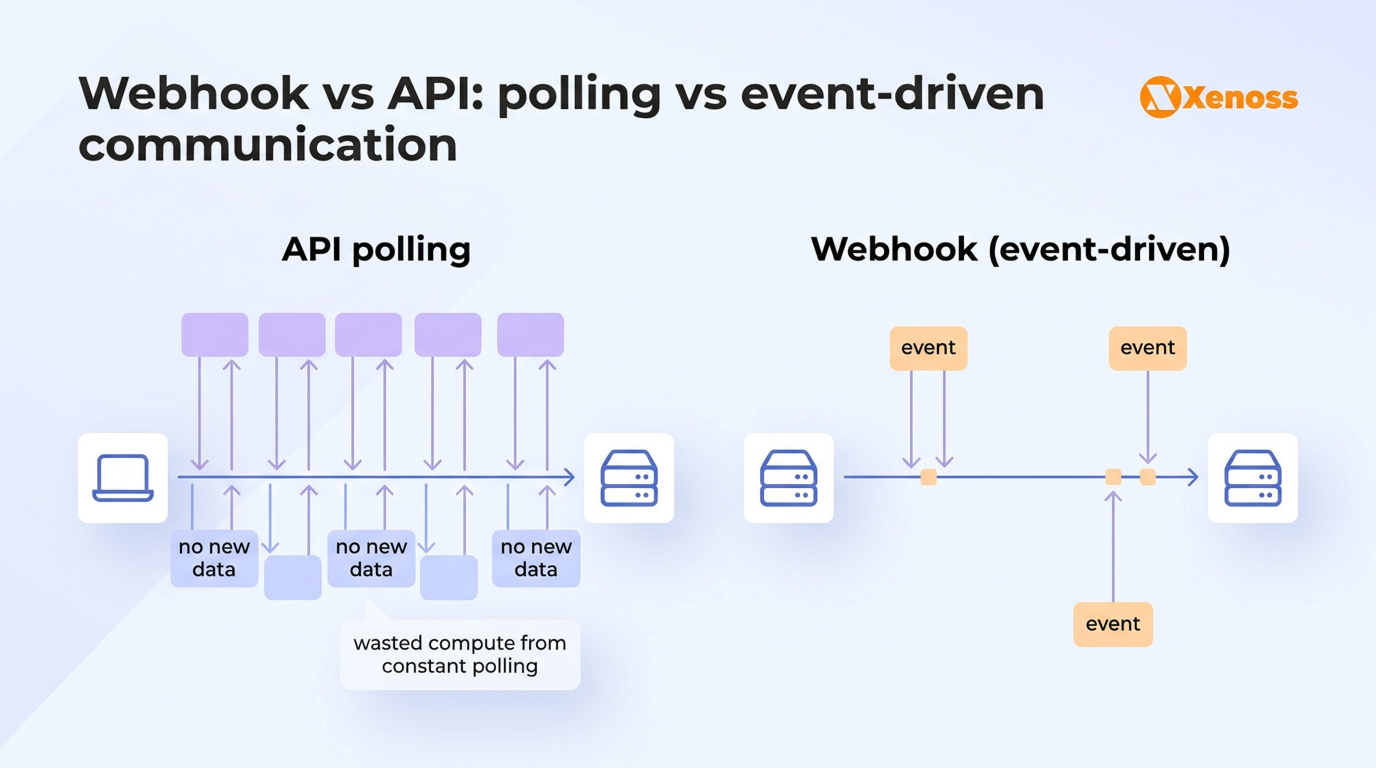

Every enterprise engineering team eventually hits the same integration question: should this system pull the data it needs, or should the source push it over when something changes? That’s the core of the webhook vs API decision, and getting it wrong leads to over-polled endpoints, missed events, bloated infrastructure bills, and integrations that crack under production load.

The stakes are higher than most comparison guides suggest. Cloudflare reports that more than half of all dynamic traffic on its network is now API-related, and that share continues to grow year over year.

The shift to API-first development accelerated by 12% year over year, with the vast majority of surveyed organizations now designing APIs before writing code. The data pipelines connecting these systems need an integration architecture that can handle both real-time event delivery and on-demand data retrieval.

73% of enterprises now manage more than 900 applications with 41% of those systems remaining unintegrated. That gap is where webhook and API architecture decisions have the most impact.

This article goes beyond basic definitions and focuses on what matters for teams building production systems: architectural trade-offs, failure modes, security surfaces, and the hybrid patterns that hold up at enterprise scale.

Summary

- APIs (pull) give the consumer full control over timing, scope, and volume of data retrieval. Webhooks (push) deliver data in near real-time but offer limited control over payload structure and delivery guarantees.

- Most enterprise integrations benefit from a hybrid approach: webhooks as event triggers, APIs for data enrichment and reconciliation. Choosing only one is rarely the right call.

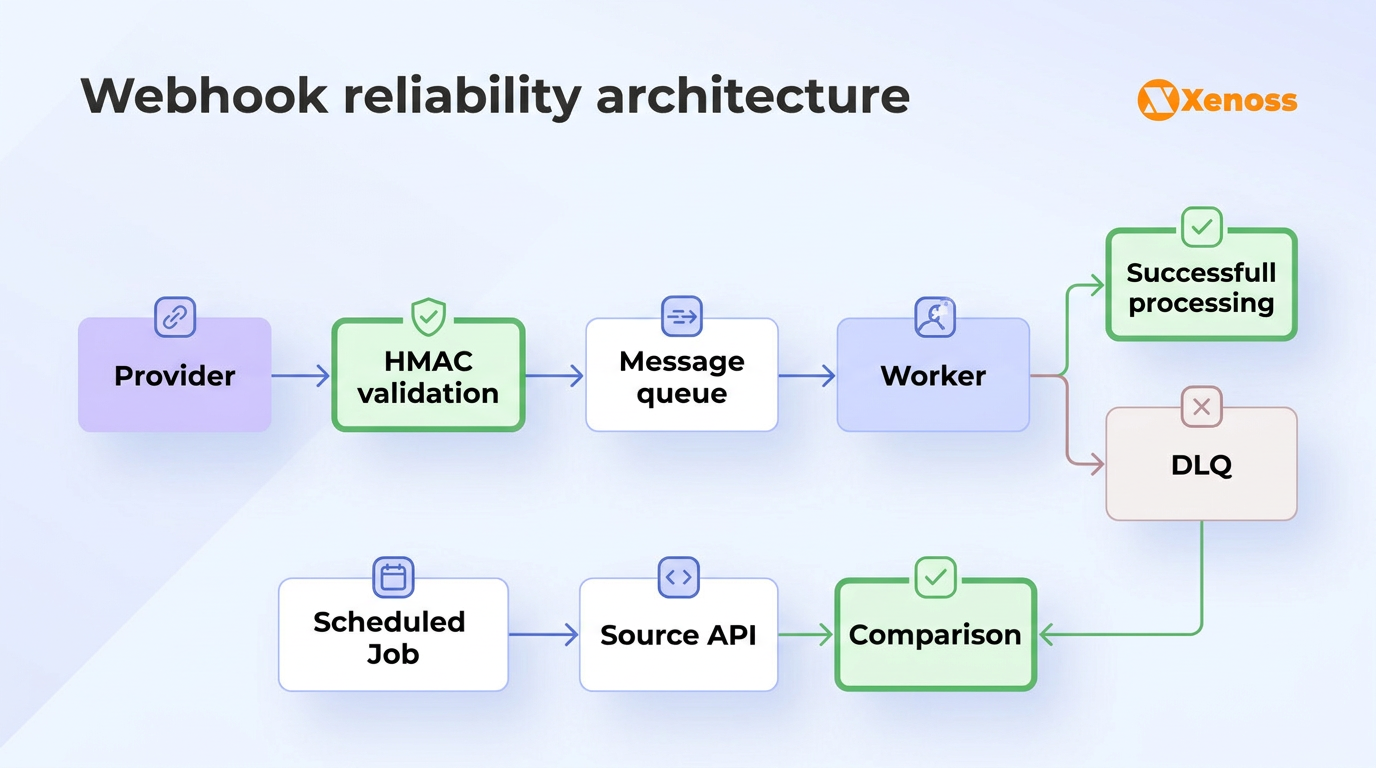

- Webhook reliability is the blind spot most teams underestimate. At-least-once delivery, duplicate events, and endpoint downtime require deliberate engineering around idempotency, dead letter queues, and scheduled reconciliation.

- With 51% of organizations already deploying AI agents that consume APIs autonomously, integration architecture decisions made today will determine how well systems handle non-human consumers tomorrow.

Webhook vs API: Key differences at enterprise scale

REST remains the dominant API style, used by 92% of organizations, but the architectural choice between pull-based APIs and push-based webhooks gets less attention. Most comparison guides stop at “pull vs. push.” That’s useful for a five-minute explainer, but it doesn’t help an engineering lead evaluate how these patterns behave under real production conditions. The table below covers the dimensions that shape architecture decisions in enterprise environments.

| Dimension | API (pull) | Webhook (push) |
|---|---|---|
| Latency | Depends on polling interval. Could be seconds or hours. | Near real-time. Fires within seconds of the triggering event. |
| Resource cost | Polling burns compute on every cycle, even when nothing changed. | Traffic only flows when events occur. Efficient at scale. |
| Reliability | Deterministic. You know immediately if a request succeeded or failed. | Best-effort in many implementations. Requires retry logic and reconciliation. |
| Data access | Full query control: filter, paginate, sort, traverse relationships. | Event payloads only. Often a compact summary, not the full record. |
| Write capability | Full CRUD. Create, update, delete records in the source system. | Read-only. Webhooks notify; they cannot push changes back. |
| Rate limit impact | High-frequency polling eats quota fast, especially across tenants. | Minimal. The provider initiates; no consumer quota consumed. |
| Debugging | Straightforward. Request in, response out, standard HTTP status codes. | Harder. Requires logging, replay tooling, and coordination with the provider. |

One dimension that most comparison guides miss entirely is debugging complexity. When an API call fails, you get an error code immediately and can trace the problem in your own logs. When a webhook event goes missing, you might not notice for hours. Reconstructing what happened requires digging through delivery logs on the provider side, checking your own ingestion queue, and verifying whether the event was received but failed downstream processing. For teams running dozens of integrations, that observability gap compounds quickly.

Why this matters: 93% of API teams face collaboration blockers, and 69% of developers now spend more than 10 hours per week on API-related work. Choosing the wrong communication pattern for a given integration makes that debugging overhead worse and compounds across every integration your team maintains.

When to use APIs for enterprise integrations

As Cloudflare CEO Matthew Prince noted in the company’s 2025 Year in Review:

“The Internet isn’t just changing, it’s being fundamentally rewired.”

For engineering teams building integration architectures, that rewiring is happening at the API layer.

Batch processing and scheduled sync. Nightly ETL jobs, hourly CRM syncs, and weekly reporting extracts all benefit from API-based patterns. You can pull large datasets during off-peak windows, paginate through results, and apply filters to avoid transferring data you don’t need. For teams managing complex data pipeline architectures, this is the bread and butter of data movement.
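
A scheduled pull usually comes down to walking a paginated endpoint until it runs out of pages. The sketch below assumes a cursor-style API whose responses contain `items` and `next_cursor`; those field names and the `fake_fetch_page` stand-in are hypothetical, so swap in whatever pagination scheme the real API documents.

```python
from typing import Callable, Iterator, Optional

# Assumed page shape: {"items": [...], "next_cursor": "<token>" or None}
Page = dict


def iter_records(fetch_page: Callable[[Optional[str]], Page]) -> Iterator[dict]:
    """Yield every record from a cursor-paginated endpoint, one page at a time."""
    cursor: Optional[str] = None
    while True:
        page = fetch_page(cursor)
        yield from page["items"]
        cursor = page.get("next_cursor")
        if cursor is None:  # no further pages
            break


# Stand-in for a real HTTP call so the example runs without a network.
_FAKE_PAGES = {
    None: {"items": [{"id": 1}, {"id": 2}], "next_cursor": "p2"},
    "p2": {"items": [{"id": 3}], "next_cursor": None},
}


def fake_fetch_page(cursor: Optional[str]) -> Page:
    return _FAKE_PAGES[cursor]


records = list(iter_records(fake_fetch_page))
```

Because `iter_records` is a generator, a nightly job can stream records into a loader without holding the full dataset in memory.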

Complex queries and relationship traversal. If you need to join customer records with their order history, subscription status, and payment method in a single integration call, an API (especially a GraphQL endpoint) gives you that flexibility. Webhook payloads are typically flat and event-specific, which means they can’t serve as a query interface.

Write operations. Webhooks are one-way. They tell you something happened, but they can’t create a record in Salesforce, update a ticket in Jira, or push a configuration change to your infrastructure. Any integration that requires two-way data flow needs an API for the write side.

Initial data loads and migrations. When onboarding a new integration or backfilling historical data, APIs with pagination support let you ingest large datasets systematically. Webhooks only fire for future events; they can’t retroactively deliver data from before the subscription was created.

Why this matters: As API production gets faster, the pull model becomes cheaper and easier to maintain. For integrations where near-real-time speed is not critical, a straightforward API integration often costs less to operate than a webhook setup that requires queuing, idempotency logic, and failure handling.

When webhooks outperform API polling

Webhooks are the clear winner when timeliness matters more than query flexibility, and when the source system is better positioned than you are to know when data changes.

Real-time event reactions. Payment confirmations, fraud alerts, shipping updates, and inventory threshold breaches all demand immediate response. In real-time fraud detection systems, the difference between a five-minute polling interval and a three-second webhook delivery can mean the difference between blocking a fraudulent transaction and explaining to a customer why their account was drained.

Pipeline triggers. Instead of polling an upstream system every five minutes to check if new records landed, a webhook fires the moment data arrives. This is how production data engineering teams reduce ingestion latency from minutes to seconds while eliminating wasted compute on empty polling cycles.

Rate limit conservation. Most third-party APIs cap the number of requests per minute or hour. If you’re polling Shopify across 200 merchant accounts to detect new orders, you’ll burn through rate limits fast. Subscribing to the orders/create webhook lets Shopify tell you when orders come in, preserving your API quota for the calls that need it: retrieving full order details after the webhook fires.
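
A rough back-of-the-envelope calculation makes the quota difference concrete. The 200 merchant accounts come from the example above; the 60-second polling interval and the 50 orders per account per day are illustrative assumptions, not figures from any provider.

```python
SECONDS_PER_DAY = 86_400


def daily_polling_requests(accounts: int, interval_seconds: int) -> int:
    """Requests per day when every account is polled on a fixed interval."""
    return accounts * (SECONDS_PER_DAY // interval_seconds)


def daily_webhook_followups(accounts: int, orders_per_account: int) -> int:
    """Requests per day when one enrichment call follows each webhook event."""
    return accounts * orders_per_account


polling = daily_polling_requests(accounts=200, interval_seconds=60)
webhook = daily_webhook_followups(accounts=200, orders_per_account=50)
print(polling, webhook)
```

Under these assumptions polling issues 288,000 requests a day regardless of activity, while the webhook-driven flow issues 10,000, and only when there is something to fetch.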

Multi-tenant SaaS integrations. When your platform integrates with hundreds or thousands of customer accounts on a third-party service, polling each one individually is architecturally painful. Webhooks let each account push its own events to your shared ingestion endpoint, scaling linearly without multiplying your polling infrastructure.

Why this matters: Amazon’s SP-API pricing changes in 2026 illustrate the cost consequences directly. Under the new model, aggressive polling strategies that worked fine before can push applications into higher pricing tiers, multiplying costs across hundreds of seller accounts. The recommended migration path is to replace polling with webhook-style event notifications, then fall back to APIs only for enrichment.

The Trigger-Enrich-Reconcile pattern: combining webhooks and APIs

In production, almost nobody uses just one. The integration architectures that hold up at enterprise scale follow what Xenoss engineers call the Trigger-Enrich-Reconcile pattern, a three-stage approach that uses webhooks and APIs together, each for what it does best.

The three stages, which show up consistently across fintech, e-commerce, and SaaS platforms, are:

- Webhook as trigger. An upstream system fires a webhook when something changes: a customer completes a purchase on Stripe, a lead is assigned in Salesforce, or a new dataset lands in an S3 bucket. Your receiving endpoint validates the HMAC signature, confirms the event structure, and drops the raw payload into a durable message queue. The endpoint returns a 200 immediately. Processing happens asynchronously, downstream.

- API for enrichment. A worker process reads from the queue and calls the source API to retrieve the full record. The Stripe webhook might include the payment ID and amount, but your order management system needs the customer profile, invoice line items, subscription tier, and discount codes. The API call fetches what the webhook payload left out.

- Scheduled API reconciliation. A nightly or hourly job compares records between systems using the API’s list and filter capabilities. This catches anything the webhook layer missed: events dropped because the endpoint was down during a deployment, duplicate deliveries that were processed twice due to a race condition, or edge cases where the provider silently failed to fire the webhook.
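
The first two stages can be sketched in a few lines. This is a minimal illustration, not a production receiver: the in-memory `queue.Queue` stands in for a durable message queue, `fetch_full_record` stands in for a call to the source API, and the event fields (`id`, `type`, `object_id`) and the shared secret are hypothetical.

```python
import hashlib
import hmac
import json
import queue

SHARED_SECRET = b"example-secret"  # assumption: issued by the provider
event_queue: "queue.Queue[dict]" = queue.Queue()  # stand-in for a durable queue
REQUIRED_FIELDS = ("id", "type", "object_id")  # hypothetical event shape


def sign(body: bytes) -> str:
    return hmac.new(SHARED_SECRET, body, hashlib.sha256).hexdigest()


def receive_webhook(body: bytes, signature: str) -> int:
    """Stage 1: verify, validate, enqueue, acknowledge. Returns an HTTP status."""
    if not hmac.compare_digest(sign(body).encode(), signature.encode()):
        return 401
    try:
        event = json.loads(body)
    except ValueError:  # malformed JSON or invalid UTF-8
        return 400
    if not isinstance(event, dict) or any(f not in event for f in REQUIRED_FIELDS):
        return 400
    event_queue.put(event)  # no business logic runs in the request path
    return 200


def fetch_full_record(object_id: str) -> dict:
    """Stand-in for the source API call that returns the complete record."""
    return {"id": object_id, "customer": "cus_123", "line_items": ["sku-1", "sku-2"]}


def enrich_next_event() -> dict:
    """Stage 2: a worker takes one queued event and fetches what the payload left out."""
    event = event_queue.get_nowait()
    record = fetch_full_record(event["object_id"])
    return {"event_id": event["id"], "type": event["type"], "record": record}
```

Keeping the receiver this thin is the point of the design: the endpoint can acknowledge quickly even when the enrichment API is slow or temporarily unavailable.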

Why this matters: This three-layer approach gives teams the real-time responsiveness of event-driven architecture with the reliability guarantees that API-first development provides. GitHub’s webhook documentation explicitly recommends responding promptly and processing asynchronously. Stripe’s integration guides are built around the pattern of webhook notification followed by API verification. These aren’t edge cases from niche vendors. They’re the default architecture for the platforms that process the most API traffic in the world.

Webhook reliability and failure handling

APIs are predictable: you send a request, you get a response, you know what happened. Webhooks introduce a different set of failure modes that teams often discover the hard way, usually during an incident.

At-least-once delivery and duplicate events. Most webhook providers guarantee at-least-once delivery, not exactly-once. If your endpoint returns a 500 or times out, the provider will retry, sometimes multiple times. Without idempotent processing (using the provider’s delivery ID or a hash of the event to detect duplicates), the same order could be created twice in your system, the same payment could trigger two fulfillment workflows, or the same lead could get assigned to two sales reps. In financial services, duplicate processing can mean regulatory exposure.
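
One way to make processing idempotent is to derive a deduplication key for each event and skip anything already seen. The sketch below assumes hypothetical `delivery_id` and `order_id` fields and uses an in-memory set; in a real system the key would typically live in a durable store, with the check and the write made atomic (for example through a unique constraint).

```python
import hashlib
import json

processed_keys: set[str] = set()  # stand-in for a durable store
orders_created: list[str] = []    # stand-in for the side effect being protected


def dedupe_key(event: dict) -> str:
    """Prefer the provider's delivery ID; fall back to a hash of the event."""
    if "delivery_id" in event:
        return str(event["delivery_id"])
    canonical = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


def handle_order_event(event: dict) -> bool:
    """Return True if the event was processed, False if it was a duplicate."""
    key = dedupe_key(event)
    if key in processed_keys:
        return False  # retry of an event we already handled
    orders_created.append(event["order_id"])
    processed_keys.add(key)
    return True
```

Hashing a canonical JSON form (sorted keys) means two deliveries of the same event produce the same key even if the provider reorders fields between retries.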

Endpoint downtime during deployments. Every time you deploy your receiving service, there’s a window where the endpoint is unavailable. If a webhook fires during that window, the delivery attempt fails, and whether the event survives depends on the provider’s retry policy. Providers vary in how aggressively they retry and for how long: some give you 24 hours of retries; others give you three attempts and move on. Without the reconciliation layer described above, events that exhaust their retries are lost, and the downstream systems that depend on them start drifting out of sync.
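
At its core, a reconciliation job is a diff between what the source API lists and what your system holds. The sketch below assumes both sides have already been loaded into dictionaries keyed by record ID, and that each record carries a hypothetical `updated_at` field.

```python
def reconcile(
    source_records: dict[str, dict],
    local_records: dict[str, dict],
) -> tuple[list[str], list[str]]:
    """Return (missing, mismatched) record IDs relative to the source system."""
    # Present at the source but never ingested locally: likely a dropped webhook.
    missing = sorted(source_records.keys() - local_records.keys())
    # Present on both sides but with a different last-modified marker.
    mismatched = sorted(
        record_id
        for record_id in source_records.keys() & local_records.keys()
        if source_records[record_id]["updated_at"] != local_records[record_id]["updated_at"]
    )
    return missing, mismatched


source = {
    "a": {"updated_at": "2025-01-02T00:00:00Z"},
    "b": {"updated_at": "2025-01-01T00:00:00Z"},
    "c": {"updated_at": "2025-01-03T00:00:00Z"},
}
local = {
    "a": {"updated_at": "2025-01-01T00:00:00Z"},
    "b": {"updated_at": "2025-01-01T00:00:00Z"},
}
missing, mismatched = reconcile(source, local)
```

Here `missing` contains `c` and `mismatched` contains `a`; each ID can then be re-fetched through the API and pushed through the normal processing path.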

Payload validation and schema evolution. Webhook payloads change over time as providers add fields, deprecate old ones, or alter nested structures. A rigid parser that breaks on unexpected fields will silently drop events. Defensive parsing, schema versioning, and logging of raw payloads before transformation are essential for long-lived integrations.
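
A defensive parser logs the raw body first, requires only the fields it cannot work without, and ignores everything it does not recognize. The event shape below (`id`, `type`, `amount_cents`, `schema_version`) is a hypothetical example rather than any provider's actual schema.

```python
import json
import logging
from dataclasses import dataclass
from typing import Optional

logger = logging.getLogger("webhook.parser")


@dataclass
class OrderEvent:
    event_id: str
    event_type: str
    amount_cents: Optional[int]  # optional: may be absent in older payloads
    schema_version: str


def parse_order_event(raw_body: bytes) -> Optional[OrderEvent]:
    """Parse a payload, tolerating unknown fields and missing optional ones."""
    logger.info("raw webhook payload: %r", raw_body)  # log before transforming
    try:
        data = json.loads(raw_body)
    except ValueError:  # covers malformed JSON and invalid UTF-8
        logger.error("payload is not valid JSON")
        return None
    if not isinstance(data, dict):
        logger.error("payload is not a JSON object")
        return None
    missing = [key for key in ("id", "type") if key not in data]
    if missing:
        logger.error("payload missing required fields: %s", missing)
        return None
    return OrderEvent(
        event_id=str(data["id"]),
        event_type=str(data["type"]),
        amount_cents=data.get("amount_cents"),
        schema_version=str(data.get("schema_version", "unknown")),
    )
```

Rejections are logged rather than swallowed, so a schema change on the provider side shows up as an error rate instead of a silent gap in the data.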

Dead letter queues (DLQs). When processing fails even after the event is successfully received, the event needs somewhere to go besides oblivion. A DLQ captures failed events with their full context (payload, error message, attempt count) so operators can investigate, fix the root cause, and replay the events without asking the provider to resend. For teams managing production data infrastructure, a well-configured DLQ is the difference between a quick fix and a data loss incident.
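
The mechanics of a DLQ can be sketched with a bounded retry loop and a list. In this illustration the in-memory `dead_letters` list stands in for a real queue or table, and the limit of three attempts is an arbitrary assumption.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class DeadLetter:
    payload: dict
    error: str
    attempts: int


dead_letters: list[DeadLetter] = []  # stand-in for a durable DLQ


def process_with_dlq(
    payload: dict,
    handler: Callable[[dict], None],
    max_attempts: int = 3,
) -> bool:
    """Try the handler up to max_attempts times; park the event if all fail."""
    last_error = ""
    for _ in range(max_attempts):
        try:
            handler(payload)
            return True
        except Exception as exc:  # broad on purpose: any failure counts
            last_error = f"{type(exc).__name__}: {exc}"
    dead_letters.append(DeadLetter(payload, last_error, max_attempts))
    return False


def replay_dead_letters(handler: Callable[[dict], None]) -> int:
    """Re-run every parked event once; return how many succeeded."""
    pending = list(dead_letters)
    dead_letters.clear()
    replayed = 0
    for letter in pending:
        # A failed replay lands back in the DLQ as a fresh entry.
        if process_with_dlq(letter.payload, handler, max_attempts=1):
            replayed += 1
    return replayed
```

Because the full payload is stored alongside the error, operators can call `replay_dead_letters` after deploying a fix without involving the provider.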

Webhook and API security best practices

API security is a well-trodden path: OAuth 2.0 or API keys for authentication, rate limiting against abuse, input validation, TLS in transit. Established patterns, mature tooling, broad platform support.

Webhook security is less standardized and requires more deliberate engineering. Your webhook endpoint is a publicly accessible URL. Anybody can send a POST request to it, and without proper validation, your system will process whatever it receives. Cloudflare’s 2025 API security findings show that a significant share of enterprise API endpoints remain unaccounted for as shadow APIs, and webhook endpoints face similar visibility challenges.

The essential security checklist for enterprise webhook integrations:

- HMAC signature verification. Providers like Stripe and GitHub sign each payload using a shared secret. Your receiver must verify this signature with a constant-time comparison before touching the event data. This is the single most important webhook security control.

- Timestamp validation. Reject payloads where the timestamp is older than a defined window (typically five minutes). This prevents replay attacks where a captured payload is resent.

- IP allowlisting. Where supported, restrict incoming traffic to the provider’s published IP ranges. GitHub, for instance, publishes its webhook delivery IP addresses.

- Idempotent processing. Because duplicate deliveries are a feature, not a bug, of at-least-once systems, your processing logic must handle re-processing the same event without side effects.
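
The first two checks on this list fit in a single verification function. The sketch below assumes the provider signs the string `<timestamp>.<body>` with HMAC-SHA256 and sends the result as a hex digest; the exact signed string, header names, and encoding differ by provider, so treat this scheme as an assumption and follow the provider's documentation.

```python
import hashlib
import hmac
import time
from typing import Optional

TOLERANCE_SECONDS = 300  # five-minute replay window


def compute_signature(secret: bytes, timestamp: int, body: bytes) -> str:
    """HMAC-SHA256 over '<timestamp>.<body>' (assumed signing scheme)."""
    signed_payload = str(timestamp).encode() + b"." + body
    return hmac.new(secret, signed_payload, hashlib.sha256).hexdigest()


def verify_webhook(
    secret: bytes,
    timestamp: int,
    body: bytes,
    signature: str,
    now: Optional[float] = None,
) -> bool:
    """Reject stale timestamps first, then compare signatures in constant time."""
    current = time.time() if now is None else now
    if abs(current - timestamp) > TOLERANCE_SECONDS:
        return False
    expected = compute_signature(secret, timestamp, body)
    return hmac.compare_digest(expected.encode(), signature.encode())
```

Including the timestamp in the signed string matters: if only the body were signed, an attacker could replay a captured payload with a fresh timestamp and pass the freshness check.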

Why this matters: For organizations in regulated industries like banking or pharma, webhook security intersects directly with compliance requirements around data encryption at rest, audit logging of all received events, and data residency constraints on where payloads are stored and processed. A misconfigured webhook endpoint can turn a minor integration issue into a compliance violation.

How AI agents are changing API and webhook architecture

51% of organizations have already deployed AI agents that consume APIs autonomously, with another 35% planning to within two years. But only 24% of teams design their APIs with agent consumption in mind.

AI agents don’t browse documentation the way human developers do. They parse API schemas programmatically, reason over parameter structures, and issue requests without waiting for human confirmation. This changes the calculus for both API and webhook design.

For APIs, it means that machine-readable schemas (OpenAPI, JSON Schema), consistent error handling, and predictable response structures become even more critical. An API that’s usable by a skilled developer but confusing to a language model will become a bottleneck as enterprise AI systems scale.

For webhooks, the implication is that incoming event streams will increasingly feed ML feature stores and real-time inference pipelines rather than just triggering CRUD operations. A webhook that notifies your system about a suspicious transaction doesn’t just update a dashboard anymore. It feeds a fraud scoring model that decides, within milliseconds, whether to block the transaction. The reliability, latency, and schema stability requirements for that webhook-to-ML pipeline are an order of magnitude higher than for a notification that sends a Slack message.

Why this matters: Teams that build integration architectures today without considering machine consumers will face costly rework within two years. The 2025 Postman report also found that 93% of API teams face collaboration blockers, often rooted in scattered documentation and inconsistent schemas. Those same issues will be amplified when AI agents start consuming your APIs at machine speed and scale.

How to choose between webhooks and APIs

Before defaulting to one approach, run through these five questions. They’ll surface the constraints that matter for your specific integration.

- How fast does the downstream system need to react? If it must react within seconds, use a webhook. If minutes or hours are acceptable, API polling is simpler and equally effective.

- Does the integration need to write data back to the source? If yes, you need an API regardless. Webhooks are read-only notifications.

- How much data does each event require? If the webhook payload gives you everything you need, great. If you need to enrich it with related records, plan for the API call after the webhook trigger.

- What happens if you miss an event? If a missed webhook means a lost sale or a compliance violation, you need the reconciliation layer (scheduled API checks) as a safety net. If it means a Slack notification arrives late, polling alone might be fine.

- Does your team have webhook infrastructure in place? Running webhook endpoints requires queue management, DLQ monitoring, idempotency logic, and deployment practices that avoid downtime gaps. If your team doesn’t have that operational muscle yet, starting with API-based polling and adding webhooks later is a pragmatic path.
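
The five questions can be read as a small decision function. The sketch below is one possible encoding of the checklist, with hypothetical field names; it is a way to make the reasoning explicit, not a substitute for judgment about a specific integration.

```python
from dataclasses import dataclass


@dataclass
class IntegrationNeeds:
    needs_reaction_within_seconds: bool
    writes_back_to_source: bool
    payload_is_sufficient: bool
    missed_event_is_costly: bool
    team_runs_webhook_infra: bool


def recommend(needs: IntegrationNeeds) -> list[str]:
    """Map the five checklist answers to the components an integration needs."""
    components: list[str] = []
    if needs.needs_reaction_within_seconds and needs.team_runs_webhook_infra:
        components.append("webhook trigger")
        if not needs.payload_is_sufficient:
            components.append("API enrichment")
        if needs.missed_event_is_costly:
            components.append("scheduled API reconciliation")
    else:
        # Either latency is relaxed or the team is not ready to run webhooks yet.
        components.append("API polling")
    if needs.writes_back_to_source:
        components.append("API writes")
    return components
```

A payment integration that needs fast reactions, enrichment, and write-back comes out as all four components, while a low-stakes reporting sync comes out as plain API polling.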

Bottom line

The webhook vs API debate is a false binary. In production, the answer is almost always both: webhooks for speed, APIs for depth, and a reconciliation layer to catch what falls through the cracks.

The teams that build resilient integration architectures don’t just choose a communication pattern. They engineer around the failure modes of each one: idempotency for webhook duplicates, DLQs for processing failures, and scheduled API sweeps for missed events. As AI agents begin consuming these integrations autonomously, the bar for schema consistency, reliability, and observability will only go up.

Start with the Trigger-Enrich-Reconcile pattern. Use webhooks where speed matters, APIs where control matters, and invest in the reconciliation layer that makes the whole thing trustworthy. That’s how enterprise integrations survive contact with production.