Technical documentation is the connective tissue of every software project. It captures how systems work, why design decisions were made, and what teams need to know to build, maintain, and scale products without constant hand-holding. When done well, documentation accelerates onboarding, reduces errors, and gives engineering leaders confidence that institutional knowledge will survive personnel changes.

When documentation is done poorly or skipped entirely, the costs pile up fast. By one estimate, accumulated technical debt, which includes documentation debt, costs the U.S. economy $1.52 trillion per year. Engineers spend two to five working days per month dealing with tech debt, and poor documentation is a significant contributor.

What is technical documentation in software development?

Engineering teams usually work with four main categories of technical documentation.

- Process documentation records how development work gets done: workflows, coding standards, branching strategies, and operational practices. It ensures consistency, especially across distributed teams.

- Product documentation explains how the software looks and behaves from the end user’s perspective: feature guides, user manuals, tooltips, and onboarding flows.

- Code documentation lives inside or alongside the codebase: inline comments, docstrings, READMEs, and architecture decision records (ADRs) that capture the reasoning behind design choices.

- API documentation provides the specifications third-party developers or internal teams need to integrate with the product: endpoints, request/response formats, authentication flows, and error codes.

Technical documentation is the top learning resource for developers, used by 68% of respondents. GitHub remains the most popular code documentation and collaboration tool at 81%, followed by Jira at 46%. These numbers underline how central documentation is to the daily developer experience.

Technical documentation best practices for software teams

The following best practices are drawn from how high-performing engineering teams treat documentation as a first-class part of the software development lifecycle.

Define the audience and scope before writing

Every piece of documentation should answer two questions upfront:

- Who is reading it?

- What do they need to accomplish?

A deployment runbook for DevOps engineers looks nothing like a getting-started guide for a product manager. When teams skip this step, they end up with documentation that tries to serve everyone and helps no one.

A practical approach is to create lightweight audience profiles at the project level. Specify whether a document targets internal engineers, external developers, non-technical stakeholders, or end users, and calibrate the depth, terminology, and assumed knowledge accordingly.

This keeps the writing focused and prevents the bloated, unfocused documentation that teams eventually stop reading.

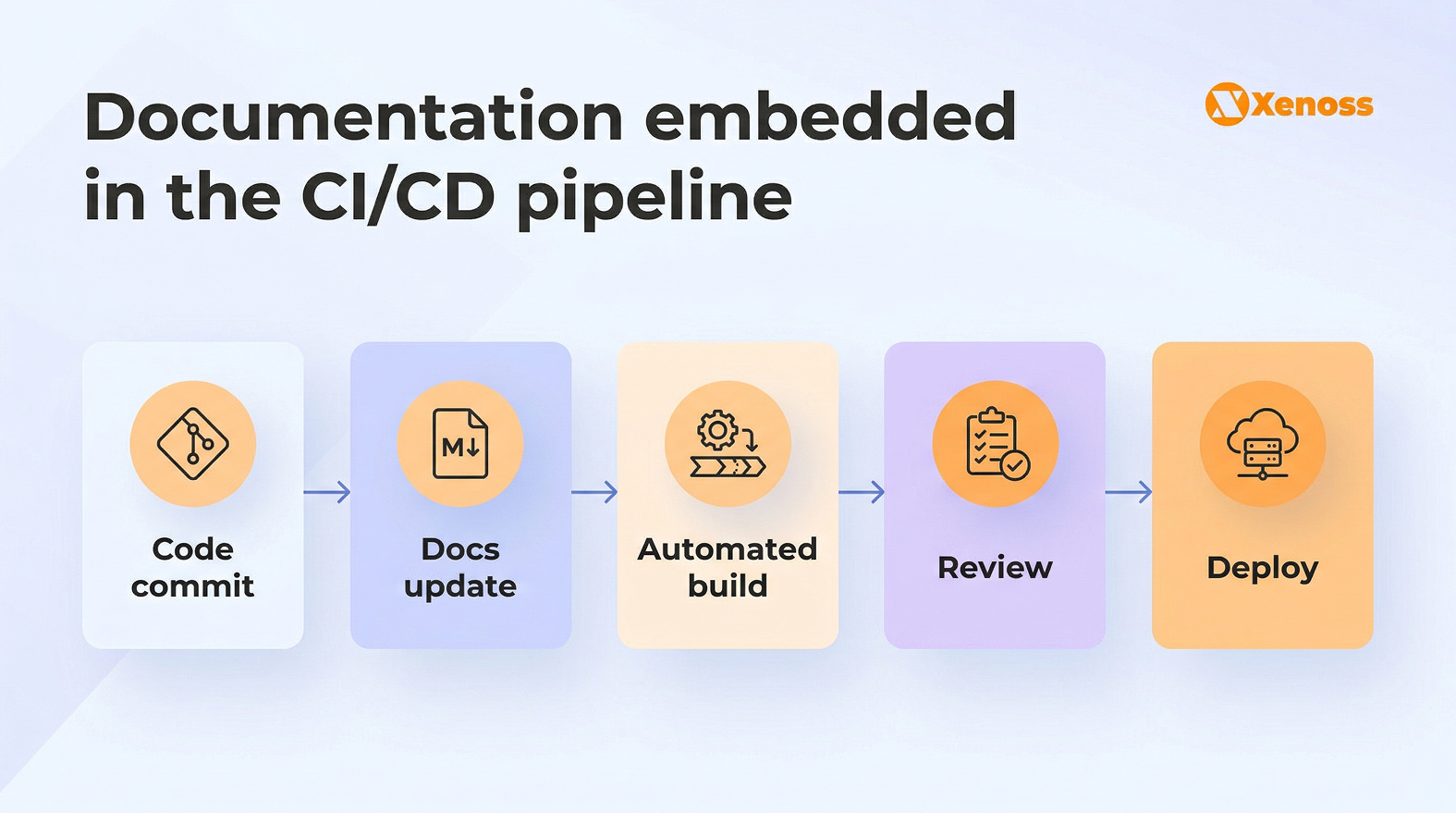

Adopt the docs-as-code approach

The docs-as-code methodology treats documentation with the same rigor as source code. Teams write docs in plain text formats (Markdown, reStructuredText, or AsciiDoc), store them in version control alongside the codebase, and use CI/CD pipelines to build, test, and deploy documentation automatically.

This approach solves one of the oldest problems in software documentation: drift. When docs live in a separate wiki or shared drive, they inevitably fall out of sync with the product. By contrast, keeping documentation in the same repository as the code means that pull requests can include both code changes and documentation updates in a single review cycle.

Adopting docs-as-code brings several tangible benefits. Engineers review documentation alongside code during pull requests, which catches inaccuracies early. Version control provides a full audit trail of what changed, when, and by whom. Automated builds ensure that broken links, formatting errors, and outdated references are flagged before deployment. And because documentation uses the same tools engineers already know (Git, Markdown, CI/CD), the barrier to contribution is low.
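As an illustration of the automated checks mentioned above, here is a minimal sketch of a broken-link check that a CI job could run against a folder of Markdown files. The function name `find_broken_links` and the regular expression are assumptions made for this example, not part of any particular docs toolchain, and the check only covers relative links to local files.

```python
import re
from pathlib import Path

# Matches inline Markdown links and images: [text](target) or ![alt](target "title").
LINK_PATTERN = re.compile(r"\[[^\]]*\]\(([^)\s]+)[^)]*\)")


def find_broken_links(docs_root: Path) -> list[tuple[Path, str]]:
    """Return (file, target) pairs for relative links that point to missing files."""
    broken = []
    for md_file in sorted(docs_root.rglob("*.md")):
        text = md_file.read_text(encoding="utf-8")
        for target in LINK_PATTERN.findall(text):
            # External URLs, mail links, and in-page anchors are out of scope here.
            if target.startswith(("http://", "https://", "mailto:", "#")):
                continue
            # Drop any "#section" suffix before checking that the file exists.
            path_part = target.split("#", 1)[0]
            if not (md_file.parent / path_part).exists():
                broken.append((md_file, target))
    return broken
```

A pipeline step could call `find_broken_links(Path("docs"))` and fail the build when the returned list is not empty, so a broken reference blocks the merge rather than reaching readers.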

For teams managing complex data engineering infrastructure, docs-as-code is especially valuable. Pipeline configurations, schema definitions, and transformation logic change frequently, and documentation that can’t keep up becomes a liability rather than an asset.

Establish documentation standards and style guides

In enterprise environments, inconsistent documentation becomes a form of technical debt. When every engineer writes differently, uses different terminology, and structures documents in their own way, the result is a documentation library that feels like a patchwork rather than a coherent resource.

A documentation style guide solves this. It doesn’t need to be elaborate. Even a one-page reference can make a meaningful difference if it covers:

- naming conventions

- heading hierarchy

- how to document API endpoints

- when to include diagrams

- how to handle versioned content

Google, for example, publishes its developer documentation style guide as an open-source resource, and Microsoft maintains a similarly comprehensive guide for its developer content.

Beyond style, teams should also standardize on templates. A consistent template for READMEs, ADRs, runbooks, and API references ensures that every document starts from a reliable baseline, reducing the cognitive load on both writers and readers.

Build documentation into the development workflow

Documentation that lives outside the development workflow tends to age badly. The best-performing teams embed documentation tasks directly into their sprint processes, treating them with the same priority as code reviews and testing.

Several practical strategies help make this work. Teams can add a “docs updated” checkbox to pull request templates so that no code ships without a documentation review.

Some organizations allocate 15% to 20% of each sprint to refactoring and documentation, a practice that mirrors the “tech debt budget” approach recommended by engineering leaders surveyed by JetSoftPro.

Others assign documentation ownership using a “you touch it, you document it” rule, where whoever modifies a module is responsible for updating its associated docs.
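Both the pull request checkbox and the “you touch it, you document it” rule can be enforced mechanically. The sketch below assumes a hypothetical mapping from source directories to documentation files (`DOC_OWNERSHIP`) and a checkbox labeled “Docs updated”; both are illustrative choices, and a real team would substitute its own paths and wording.

```python
import re
from pathlib import PurePosixPath

# Hypothetical mapping from source directories to the docs that describe them.
DOC_OWNERSHIP = {
    "src/billing": "docs/billing.md",
    "src/auth": "docs/auth.md",
}

# A ticked Markdown task item such as "- [x] Docs updated".
CHECKBOX = re.compile(r"^\s*[-*]\s*\[[xX]\]\s*docs updated", re.IGNORECASE | re.MULTILINE)


def docs_checkbox_ticked(pr_body: str) -> bool:
    """Return True if the pull request description contains a ticked docs checkbox."""
    return bool(CHECKBOX.search(pr_body))


def missing_doc_updates(changed_files: list[str], ownership: dict[str, str]) -> list[str]:
    """Return docs that should have changed because their module was touched."""
    changed = set(changed_files)
    missing = []
    for source_dir, doc_path in ownership.items():
        touched = any(PurePosixPath(f).is_relative_to(source_dir) for f in changed)
        if touched and doc_path not in changed:
            missing.append(doc_path)
    return missing
```

For a change set of `["src/billing/invoice.py"]`, `missing_doc_updates` would return `["docs/billing.md"]`, which a CI job could report as a review comment or a failed check.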

This matters more than ever because the cost of letting documentation slip compounds quickly. McKinsey estimates that technical debt, which includes documentation debt, can amount to up to 40% of a company’s entire technology estate. At that scale, undocumented systems become a material business risk, not just an engineering inconvenience.

Prioritize API and code documentation

API documentation is often the first touchpoint external developers have with a product, and code documentation is the first resource internal engineers reach for when onboarding or debugging. Investing in both yields outsized returns in developer productivity and integration speed.

For API docs, the OpenAPI specification (formerly Swagger) has become the industry standard. It enables teams to generate interactive documentation directly from API schemas, keeping references accurate and eliminating the manual work of updating endpoints after every release.

Tools like Redocly, SwaggerHub, and Mintlify layer on top of OpenAPI to provide customizable, searchable developer portals.
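To make the idea of generating references from a schema concrete, the following sketch walks a small OpenAPI-style document and renders a Markdown endpoint list. It is a simplified illustration, not how any of the tools above work internally: the `Orders API` spec is invented for the example, and only the `info`, `paths`, `summary`, and `responses` fields are read.

```python
HTTP_METHODS = ("get", "put", "post", "delete", "patch", "head", "options", "trace")


def render_endpoint_reference(spec: dict) -> str:
    """Render a Markdown reference from the paths section of an OpenAPI document."""
    lines = [f"# {spec['info']['title']} (v{spec['info']['version']})", ""]
    for path, path_item in spec.get("paths", {}).items():
        for method in HTTP_METHODS:
            operation = path_item.get(method)
            if operation is None:
                continue
            lines.append(f"## {method.upper()} {path}")
            lines.append(operation.get("summary", "No summary provided."))
            for status, response in operation.get("responses", {}).items():
                lines.append(f"- {status}: {response.get('description', '')}")
            lines.append("")
    return "\n".join(lines)


# Hypothetical spec used only for illustration.
EXAMPLE_SPEC = {
    "openapi": "3.0.3",
    "info": {"title": "Orders API", "version": "1.0.0"},
    "paths": {
        "/orders/{orderId}": {
            "get": {
                "summary": "Fetch a single order.",
                "responses": {
                    "200": {"description": "The order was found."},
                    "404": {"description": "No order has this ID."},
                },
            }
        }
    },
}
```

Because the reference is derived from the schema, adding an endpoint to the spec is enough to make it appear in the rendered output on the next build.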

For code documentation, architecture decision records (ADRs) are a growing best practice. ADRs capture the “why” behind technical decisions, preserving context that inline comments alone can’t convey.

When a future engineer asks, “why did we use DynamoDB instead of Postgres for this service?”, a well-maintained ADR provides the answer without requiring a conversation with someone who may have already left the team.
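A consistent ADR layout is easy to generate from a small template. The sketch below uses the commonly seen Status, Context, Decision, and Consequences sections; the class name `DecisionRecord` and the four-digit numbering scheme are assumptions for this example rather than a required format.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DecisionRecord:
    """One architecture decision record, rendered as Markdown."""

    number: int
    title: str
    status: str
    decided_on: date
    context: str
    decision: str
    consequences: str

    def to_markdown(self) -> str:
        return "\n".join([
            f"# ADR {self.number:04d}: {self.title}",
            "",
            f"Status: {self.status}",
            f"Date: {self.decided_on.isoformat()}",
            "",
            "## Context",
            self.context,
            "",
            "## Decision",
            self.decision,
            "",
            "## Consequences",
            self.consequences,
            "",
        ])
```

Writing the output of `to_markdown()` to a numbered file in the repository keeps each decision reviewable in the same pull request as the code it explains.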

Treat internal documentation as institutional memory

Internal documentation covers the operational knowledge teams need to run their systems: incident response playbooks, infrastructure diagrams, environment configurations, release procedures, and onboarding guides. It’s the knowledge that, when trapped in someone’s head, creates a dangerous single point of failure.

Organizations working in regulated industries, such as banking, healthcare, or manufacturing, rely on internal documentation for compliance and audit readiness. In enterprise AI deployments, documentation is critical for tracking model lineage, recording training data provenance, and maintaining reproducibility across ML experiments.

A common failure mode is scattering internal documentation across Slack threads, email chains, and personal Notion pages. The fix is to consolidate everything into a single, searchable source of truth, whether that’s an internal wiki, a dedicated documentation platform, or a Git-based knowledge base that integrates with the team’s existing tools.

AI-powered technical documentation: tools and workflows

64% of software development professionals now use AI for writing documentation. Roughly 52% of developers use AI for creating or maintaining documentation, with nearly 25% relying on it for most of their documentation work.

Writing documentation is one of the most time-consuming, repetitive tasks in software development, and it’s the first thing teams drop under deadline pressure.

AI tools reduce that friction significantly. In an internal test, IBM reported that teams using watsonx Code Assistant reduced code documentation time by an average of 59%.

How AI transforms documentation workflows

AI-powered documentation tools are useful across several stages of the documentation lifecycle.

- Automated generation from code. AI tools analyze codebases, parse function signatures and types, and generate initial documentation drafts, including docstrings, README files, and API references. This eliminates the blank-page problem and gives writers a strong starting point to refine.

- Continuous synchronization with code changes. Platforms like Mintlify and DeepDocs integrate with Git workflows to detect code changes and automatically flag or update affected documentation. This keeps docs in sync without requiring manual tracking of which pages need revision after each release.

- AI-powered search and retrieval. Modern documentation platforms embed semantic search and conversational AI interfaces that let developers ask natural-language questions and receive contextual answers drawn from the documentation corpus. GitBook’s AI search and Mintlify’s natural-language querying are both examples of this pattern.

- Quality checks and linting. AI can scan documentation for broken links, outdated references, inconsistent terminology, and readability issues, functioning like a CI/CD linter but for prose. This automated quality layer catches problems that manual reviews often miss.

Leading AI documentation tools for software teams

The AI documentation tool landscape has matured significantly. Here are the tools that engineering teams are using to streamline documentation workflows.

| Tool | What it does | Best for | Integration |
|---|---|---|---|
| GitHub Copilot | Auto-generates docstrings, inline comments, and README content in real time while coding | Inline code documentation | VS Code, JetBrains, Neovim, GitHub |
| Mintlify | Generates structured documentation sites from codebases with AI-powered search and PR-triggered updates | API docs, developer portals | GitHub, GitLab, CI/CD pipelines |
| GitBook | Collaborative documentation platform with AI writing assistance, semantic search, and Git synchronization | Team knowledge bases | GitHub, Slack, VS Code (via Copilot) |
| DeepDocs | Scans PR diffs to detect and update outdated documentation in real time | Documentation freshness | GitHub-native |
| AWS Kiro | Agentic IDE assistant that converts tribal knowledge into structured, queryable documentation | Internal knowledge capture | AWS ecosystem, IDE-based |

While these tools are powerful, they work best as accelerators rather than replacements for human judgment. AI-generated documentation still requires engineering review to verify accuracy, fill in edge cases, and add the contextual reasoning that only someone who worked on the system can provide.

Even as AI adoption grows, developer trust in AI output has declined from over 70% in 2023 to 60% in 2025, largely due to accuracy concerns. This makes human oversight of AI-generated content more important, not less.

How to measure and maintain documentation quality

Creating documentation is only half the challenge. Keeping it accurate, relevant, and useful over time requires deliberate governance.

Establish a documentation governance framework

Documentation governance introduces policies, workflows, and quality standards for the entire content lifecycle. At a minimum, a governance framework should define who owns documentation for each service or module, how frequently content is reviewed, what approval workflows are required for changes, and how deprecated content is archived or removed.
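Ownership and review cadence are easier to enforce when they are recorded as data rather than as convention. The following sketch assumes a hypothetical `DocPolicy` record per document and flags the ones whose scheduled review is overdue; the field names and intervals are illustrative.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DocPolicy:
    """Governance metadata for one document (hypothetical schema)."""

    document: str
    owner: str
    review_interval_days: int
    last_reviewed: date


def overdue_reviews(policies: list[DocPolicy], today: date) -> list[str]:
    """Return the documents whose review interval has elapsed."""
    return [
        policy.document
        for policy in policies
        if today - policy.last_reviewed > timedelta(days=policy.review_interval_days)
    ]
```

A scheduled job could run this check and notify each listed document's `owner`, which gives the review policy a concrete trigger.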

For organizations operating in regulated industries (banking, pharma, energy), governance is a compliance requirement. Documentation must demonstrate traceability, version control, and clear ownership to pass audits. Engineering teams that work with industrial systems, such as SCADA, IoT, and ERP integrations, need documentation that meets strict auditability standards.

Track documentation health metrics

Documentation should be measured like any other engineering deliverable. Useful metrics include:

- documentation coverage (percentage of services, APIs, and modules with up-to-date documentation)

- page freshness (time since last update relative to the most recent code change)

- search effectiveness (click-through rates, query success rates, and zero-result searches)

- user feedback scores (ratings, comments, and support ticket deflection rates)

These metrics help identify gaps before they become costly. If a critical microservice hasn’t had its documentation updated in six months while the codebase has changed significantly, that’s a concrete risk that should show up in sprint planning.
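Coverage and freshness are simple to compute once the last code change and the last documentation update are known for each service. This sketch assumes those dates are already available (for example, from version control history) and treats a lag of more than 90 days as stale; the `ServiceDocStatus` type and the 90-day threshold are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ServiceDocStatus:
    service: str
    last_code_change: date
    last_doc_update: Optional[date]  # None means no documentation exists


def staleness_days(status: ServiceDocStatus) -> Optional[int]:
    """Days by which docs lag the code; 0 if docs are newer; None if undocumented."""
    if status.last_doc_update is None:
        return None
    return max((status.last_code_change - status.last_doc_update).days, 0)


def coverage(statuses: list[ServiceDocStatus], max_lag_days: int = 90) -> float:
    """Fraction of services whose docs exist and lag code by at most max_lag_days."""
    if not statuses:
        return 0.0
    fresh = 0
    for status in statuses:
        lag = staleness_days(status)
        if lag is not None and lag <= max_lag_days:
            fresh += 1
    return fresh / len(statuses)
```

Reporting `coverage` per team and listing the services with the highest `staleness_days` gives sprint planning a short, ranked list of documentation to fix first.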

Build a feedback loop

Documentation improves when the people using it have a direct way to flag problems. Embedding feedback mechanisms, such as “Was this helpful?” widgets, inline commenting, or links to a Slack channel, turns documentation from a one-way broadcast into a conversation that surfaces gaps and inaccuracies organically.

Combining user feedback with automated monitoring (broken link detection, freshness scores, content coverage reports) creates a continuous improvement loop that keeps documentation relevant without requiring a dedicated team to review every page manually.

Technical documentation for enterprise AI and data engineering

For organizations building AI and data-intensive systems, technical documentation carries additional complexity and criticality. ML models, data pipelines, and automated workflows require documentation that goes beyond standard software specs.

Model documentation needs to capture training data sources, hyperparameter configurations, evaluation metrics, and deployment constraints. Without this, reproducing or debugging model behavior becomes a guessing game.
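One lightweight way to keep these fields from being forgotten is to store them as a structured record next to the model artifact. The sketch below is an assumed schema, not a standard: the `ModelRecord` class and its field names simply mirror the items listed above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Structured documentation for one trained model (hypothetical schema)."""

    name: str
    version: str
    training_data_sources: list[str]
    hyperparameters: dict[str, float]
    evaluation_metrics: dict[str, float]
    deployment_constraints: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of fields that were left empty."""
        return [key for key, value in asdict(self).items() if value in ("", [], {})]

    def to_json(self) -> str:
        """Serialize the record so it can be versioned alongside the model."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

A training pipeline could refuse to publish a model while `missing_fields()` returns anything, which turns model documentation into a release requirement instead of an afterthought.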

Data pipeline documentation should map data lineage from source to destination, including transformation logic, scheduling dependencies, and failure handling procedures. Infrastructure documentation for cloud and hybrid environments must cover resource provisioning, scaling policies, and disaster recovery protocols.
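Data lineage in particular lends itself to a machine-readable form. As a minimal sketch, the pipeline below is a hypothetical example in which each dataset lists the datasets it is derived from, and `upstream_sources` walks that mapping to answer the question “what does this table ultimately depend on?”

```python
# Hypothetical pipeline: each dataset maps to the datasets it is derived from.
LINEAGE = {
    "raw_orders": [],
    "raw_customers": [],
    "clean_orders": ["raw_orders"],
    "orders_enriched": ["clean_orders", "raw_customers"],
    "daily_revenue": ["orders_enriched"],
}


def upstream_sources(dataset: str, lineage: dict[str, list[str]]) -> set[str]:
    """Return every dataset the given dataset depends on, directly or indirectly."""
    seen: set[str] = set()
    stack = list(lineage.get(dataset, []))
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        stack.extend(lineage.get(current, []))
    return seen
```

Keeping the mapping in version control next to the pipeline code means a change to a transformation and the change to its documented lineage can be reviewed together.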

Bottom line

Technical documentation is one of the highest-leverage investments a software team can make. It reduces onboarding time, prevents knowledge loss, and creates the foundation for scaling engineering organizations without losing quality or velocity.

The best practices that matter most are straightforward: define your audience, adopt docs-as-code workflows, standardize formats, embed documentation in the development process, and invest in API and internal documentation. AI-powered tools are making it easier than ever to generate, maintain, and search documentation at scale, but they work best when combined with clear governance and human oversight.

For engineering teams working on complex data and AI systems, documentation is even more critical. It’s the difference between systems that can scale, adapt, and hand off cleanly, and systems that only the original builders can understand.