Real-time analytics still faces the same problem it did a decade ago: the business wants answers now, but it also expects those answers to be complete, correct, and reproducible.

Lambda architecture was designed to solve exactly that tension by running batch and stream processing in parallel, then merging both outputs in a serving layer.

Nathan Marz introduced the pattern around 2011 while working at Twitter, where the challenge was delivering fast views of live data without giving up the accuracy of large-scale historical computation. The design worked, and for years, Lambda became the default answer whenever teams needed both low latency and batch-grade correctness.

What changed is the cost of maintaining it. Running two separate pipelines, one for batch and one for streaming, means duplicating logic, testing, and operational ownership. That pain triggered the push toward Kappa architecture, after Jay Kreps argued in 2014 that mature stream processors could replace the batch layer entirely. Since then, medallion architecture has emerged as another way to structure the same problem, especially in lakehouse environments, though even medallion patterns are now being pushed toward real-time operation as latency expectations tighten.

This article compares Lambda, Kappa, and medallion architecture as competing ways to balance correctness, latency, cost, and maintainability in modern analytics systems.

Summary

- Lambda architecture separates data processing into a batch layer (accurate, high-latency), a speed layer (approximate, low-latency), and a serving layer that merges both views for queries.

- Kappa architecture eliminates the batch layer by treating all data as a stream. It relies on a replayable log (typically Kafka) and a streaming engine (typically Flink) to handle both real-time processing and historical reprocessing through one codebase.

- Medallion architecture (bronze/silver/gold) organizes data by quality tier rather than processing mode. It has become the default for lakehouse environments built on Databricks or Snowflake.

- The right choice depends on your data workload. Lambda is strongest for IoT, fraud detection, and scenarios requiring both deep historical recomputation and sub-second latency. Kappa is simpler when your batch and streaming logic are identical. Medallion fits analytics-first environments with structured governance needs.

What is Lambda architecture?

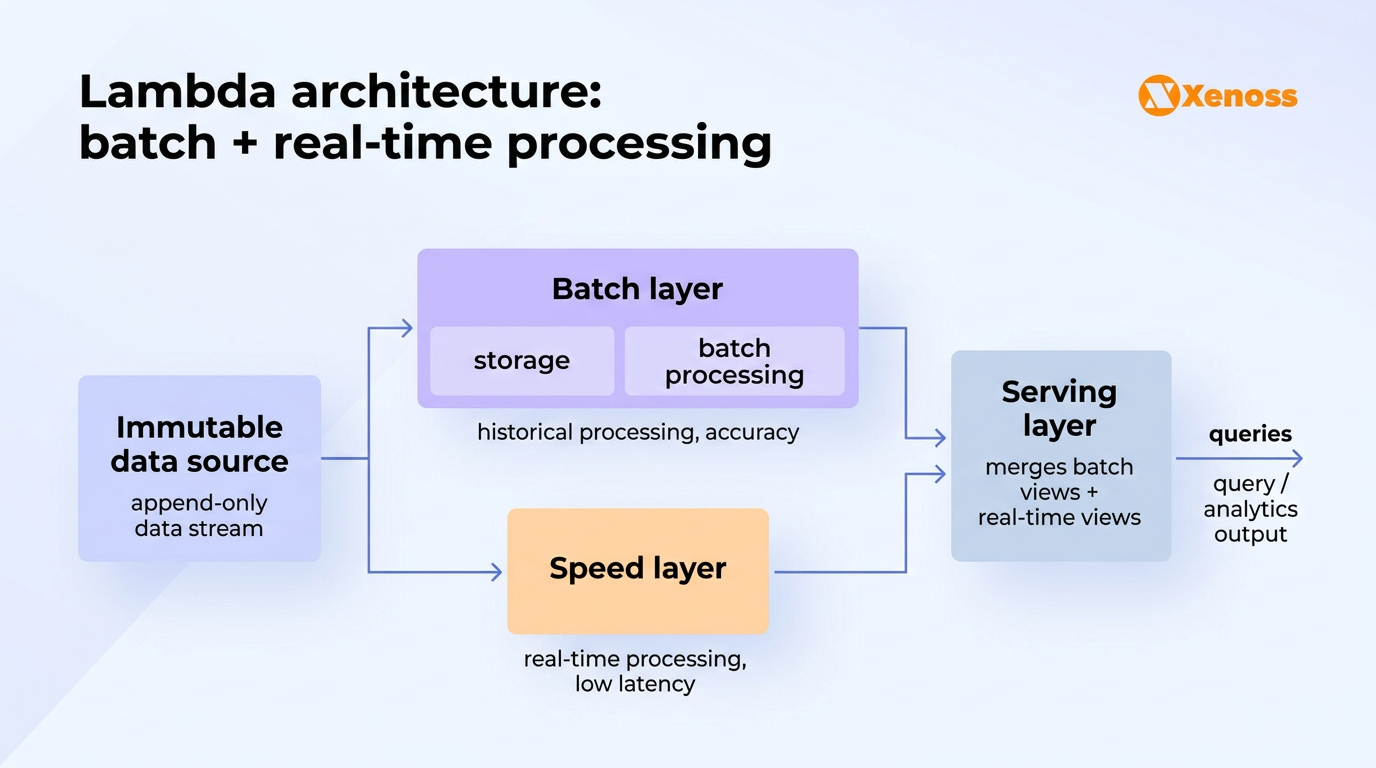

The architecture is built on an append-only, immutable master dataset that serves as the system of record. All incoming data is written to this dataset and simultaneously routed to both a batch layer and a speed layer for processing.

The core idea: batch processing gives you complete, accurate views of your data but takes time. Stream processing gives you immediate results but may sacrifice some accuracy.

Lambda runs both and lets a serving layer merge the outputs so users always see the best available answer. Once the batch layer finishes processing a given time window, its authoritative result replaces the speed layer’s approximation.

The three layers of Lambda architecture

Batch layer

The batch layer stores the complete master dataset and precomputes views by running functions across all historical data at scheduled intervals. Because it reprocesses everything from scratch each cycle, it can correct errors and produce fully accurate results.

The trade-off is latency: batch runs can take minutes to hours, depending on data volume. Common tools include Apache Spark, Apache Hadoop MapReduce, and cloud warehouses like Snowflake or BigQuery.

In modern implementations, the master dataset is typically stored on S3, ADLS, or GCS in Parquet format, often managed by an open table format like Apache Iceberg or Delta Lake for ACID compliance and time travel.
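The recompute-from-scratch behavior of the batch layer can be illustrated with a minimal Python sketch. The `Event` schema, the `recompute_batch_view` function, and the sample data are hypothetical; a real batch layer would run equivalent logic in Spark or a warehouse over files in object storage rather than over an in-memory list.

```python
from collections import defaultdict
from typing import NamedTuple


class Event(NamedTuple):
    """One immutable record in the master dataset (hypothetical schema)."""
    store_id: str
    hour: int       # hour bucket the sale belongs to
    amount: float


def recompute_batch_view(master_dataset: list[Event]) -> dict[tuple[str, int], float]:
    """Rebuild the revenue-per-store-per-hour view from the full history.

    No state is carried over from the previous run, so a fix to this
    function is applied to all historical data on the next batch cycle.
    """
    view: dict[tuple[str, int], float] = defaultdict(float)
    for event in master_dataset:
        view[(event.store_id, event.hour)] += event.amount
    return dict(view)


# Append-only master dataset: records are added, never updated in place.
master = [
    Event("store-1", 9, 20.0),
    Event("store-1", 9, 5.0),
    Event("store-2", 9, 12.5),
    Event("store-1", 10, 7.5),
]
batch_view = recompute_batch_view(master)
```

Because the function is a pure computation over the whole dataset, running it twice on the same input yields the same view, which is what makes batch output reproducible.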

Speed layer (real-time processing)

The speed layer processes incoming data streams with minimal delay, filling the gap between batch runs. It handles only recent data and produces incremental views that are valid until the batch layer catches up. This layer prioritizes latency over completeness.

Apache Flink has become the de facto standard for this role. Over 2,300 companies globally use Flink for stream processing, including Apple, Netflix, Uber, Stripe, LinkedIn, and Shopify.

Apache Kafka Streams and Spark Structured Streaming are common alternatives, though Spark’s micro-batch approach introduces higher latency than Flink’s true event-at-a-time processing.
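As a rough illustration of what the speed layer maintains, the sketch below keeps an incremental per-hour total and discards hours once the batch layer has covered them. The class name `SpeedLayerView` and its methods are assumptions made for this example; in practice this state would live inside a stream processor such as Flink, not in a Python dictionary.

```python
from collections import defaultdict


class SpeedLayerView:
    """Incremental revenue-per-hour view for hours the batch layer has not reached."""

    def __init__(self) -> None:
        self._totals: dict[int, float] = defaultdict(float)

    def on_event(self, hour: int, amount: float) -> None:
        # Update in place as each event arrives; history is never rescanned.
        self._totals[hour] += amount

    def expire_through(self, batch_horizon_hour: int) -> None:
        # Once the batch layer has published hours <= horizon, drop them here.
        for hour in [h for h in self._totals if h <= batch_horizon_hour]:
            del self._totals[hour]

    def snapshot(self) -> dict[int, float]:
        return dict(self._totals)


speed = SpeedLayerView()
for hour, amount in [(9, 20.0), (9, 5.0), (10, 7.5), (11, 3.0)]:
    speed.on_event(hour, amount)

# The batch layer has now finished processing everything through hour 9.
speed.expire_through(batch_horizon_hour=9)
```

After expiry the view holds only hours 10 and 11, which keeps speed-layer state small and bounded by the batch interval.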

Serving layer

The serving layer indexes and exposes the precomputed batch views and real-time views so downstream applications can query them. It merges results from both layers, prioritizing batch views when available and falling back to speed layer views for the most recent time window. Technologies used here include Elasticsearch, Apache Druid, Apache Cassandra, and cloud-native query engines like Amazon Athena or Snowflake.

The serving layer is where Lambda earns its value: users get a single query interface that returns accurate historical data and near-real-time recent data without needing to understand the underlying processing model.
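The merge rule described above can be sketched in a few lines of Python. The function name `query_serving_layer` and the idea of a single `batch_horizon_hour` are simplifying assumptions; real serving layers track the horizon per view or per partition and query indexed stores rather than dictionaries.

```python
def query_serving_layer(
    batch_view: dict[int, float],
    speed_view: dict[int, float],
    batch_horizon_hour: int,
) -> dict[int, float]:
    """Return one merged view keyed by hour bucket.

    Hours at or before the batch horizon come from the batch view only;
    later hours fall back to the speed view.
    """
    merged = {h: v for h, v in batch_view.items() if h <= batch_horizon_hour}
    for hour, value in speed_view.items():
        if hour > batch_horizon_hour:
            merged[hour] = value
    return merged


batch_view = {8: 100.0, 9: 25.0}   # authoritative, complete through hour 9
speed_view = {9: 24.0, 10: 7.5}    # hour 9 here is a stale approximation
merged = query_serving_layer(batch_view, speed_view, batch_horizon_hour=9)
```

Hour 9 resolves to the batch value (25.0) even though the speed layer still holds a different number for it, while hour 10 is served from the speed layer.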

Lambda vs Kappa vs medallion architecture

| | Lambda | Kappa | Medallion |
|---|---|---|---|
| Processing model | Parallel batch + stream | Stream-only (replayable log) | Quality tiers (bronze/silver/gold) |
| Codebases | Two (batch logic + streaming logic) | One (same code for real-time and replay) | One (ETL/ELT between tiers) |
| Latency | Sub-second (speed layer) + hours (batch) | Sub-second to seconds | Minutes to hours (batch ETL between tiers) |
| Reprocessing | Full recompute from master dataset | Replay from Kafka log | Reprocess between tiers |
| Primary tools | Spark (batch) + Flink/Kafka (stream) | Kafka + Flink | Spark/dbt + Delta Lake/Iceberg |
| Operational complexity | High (two systems to maintain) | Medium (one pipeline, complex engine) | Low to medium (single platform) |
| Best for | IoT, fraud detection, mixed historical + real-time workloads | Event-driven systems, CDC pipelines, same logic for batch and stream | Analytics, BI, ML feature engineering in lakehouse environments |
| Weakness | Dual codebase maintenance | Complex reprocessing at large scale | Not designed for sub-second latency |

When Lambda is the right call

Lambda makes sense when your batch processing logic and streaming logic are fundamentally different. A fraud detection system, for example, might run a lightweight rule engine in the speed layer for instant alerts while the batch layer trains and evaluates ML models overnight on the full transaction history.

An IoT analytics platform might stream sensor readings for real-time dashboard updates while running complex multi-day trend analysis in batch. If the two processing paths serve different purposes and produce different outputs, Lambda’s separation is architecturally justified.

The problem: duplicated logic at scale

Lambda’s core issue is operational.

Every transformation must be implemented twice:

- once in batch

- once in streaming

Over time, these pipelines drift:

- logic diverges

- bugs appear in one layer but not the other

- validation becomes increasingly complex

Practical example

A retail analytics system might:

- use batch processing to compute daily revenue across all stores

- use streaming to update intraday sales metrics

If pricing logic changes, both pipelines must be updated and validated. Any inconsistency leads to conflicting metrics across dashboards.

This duplication is what drives many teams away from Lambda.
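The retail scenario can be made concrete with a small Python sketch in which a pricing change (a discount) reached the batch path but not the streaming path. All function names and the order fields are hypothetical; the point is the reconciliation check that teams end up writing to catch this kind of drift.

```python
def batch_daily_revenue(orders: list[dict]) -> float:
    """Batch path: pricing rule already updated to apply the discount."""
    return sum(o["qty"] * o["unit_price"] * (1 - o["discount"]) for o in orders)


def streaming_revenue_increment(order: dict) -> float:
    """Streaming path: still on the old rule; the discount was never ported."""
    return order["qty"] * order["unit_price"]


def reconcile(orders: list[dict], tolerance: float = 0.01) -> tuple[bool, float]:
    """Compare both paths over the same closed day; return (consistent, gap)."""
    batch_total = batch_daily_revenue(orders)
    stream_total = sum(streaming_revenue_increment(o) for o in orders)
    gap = abs(batch_total - stream_total)
    return gap <= tolerance, gap


orders = [
    {"qty": 2, "unit_price": 10.0, "discount": 0.0},
    {"qty": 1, "unit_price": 40.0, "discount": 0.25},
]
consistent, gap = reconcile(orders)
```

Here the batch path reports 50.0 and the streaming path reports 60.0 for the same orders, so `reconcile` flags the day as inconsistent. A check like this detects drift after the fact; it does not remove the need to maintain both implementations.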

When Kappa replaces Lambda

Kappa wins when your batch and streaming logic are the same. If you are doing identical filters, joins, and aggregations regardless of whether the data is historical or current, maintaining two implementations is overhead with no upside.

Jay Kreps’ original argument was exactly this: a replayable log such as Kafka plus a capable stream processor (today, most often Flink) can handle both real-time processing and full historical reprocessing through the same code. LinkedIn moved from Lambda to a unified streaming architecture for precisely this reason.
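A minimal sketch of the replay idea, using an in-memory stand-in for the log. `ReplayableLog` and `count_clicks` are hypothetical names invented for this example and are not Kafka or Flink APIs; the sketch only shows that one processing function serves both the live path and a full reprocessing run.

```python
from typing import Iterable, Iterator, Optional


class ReplayableLog:
    """In-memory stand-in for a retained, offset-addressable log partition."""

    def __init__(self) -> None:
        self._records: list[dict] = []

    def append(self, record: dict) -> int:
        self._records.append(record)
        return len(self._records) - 1   # offset of the new record

    def read_from(self, offset: int) -> Iterator[dict]:
        yield from self._records[offset:]


def count_clicks(records: Iterable[dict], state: Optional[dict] = None) -> dict:
    """The single processing function, used for both live data and replay."""
    counts = dict(state or {})
    for record in records:
        if record["type"] == "click":
            counts[record["user"]] = counts.get(record["user"], 0) + 1
    return counts


log = ReplayableLog()
for rec in [
    {"user": "a", "type": "click"},
    {"user": "b", "type": "view"},
    {"user": "a", "type": "click"},
]:
    log.append(rec)

# Live path: process what exists, then continue from the next new offset.
live_state = count_clicks(log.read_from(0))
new_offset = log.append({"user": "b", "type": "click"})
live_state = count_clicks(log.read_from(new_offset), live_state)

# Reprocessing path: a fresh job replays the whole log with the same function.
replayed_state = count_clicks(log.read_from(0))
```

Both paths arrive at the same counts, which is the property Kappa depends on: reprocessing means starting a new consumer at offset 0, not maintaining a second implementation.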

The streaming ecosystem has matured significantly since Kreps wrote that critique. Confluent shifted its strategic focus from ksqlDB to Apache Flink as the stream processing standard, and Flink’s commercial adoption grew 70% quarter over quarter through 2025. For CDC-based pipelines that stream database changes to analytics destinations, Kappa is now the natural default.

When medallion architecture is the better fit

Medallion architecture organizes data by quality tier: bronze (raw, as-ingested), silver (cleaned, deduplicated), gold (business-ready, aggregated). It does not separate batch from stream processing. Instead, it separates raw data from progressively refined data, with ETL or ELT jobs moving data between tiers.
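The tier progression can be sketched in plain Python. The functions `to_silver` and `to_gold` and the order fields are assumptions made for illustration; in a lakehouse these steps would typically be Spark or dbt jobs writing Delta Lake or Iceberg tables.

```python
def to_silver(bronze: list[dict]) -> list[dict]:
    """Clean and deduplicate: drop rows without an amount, keep one row per order_id."""
    seen: set[str] = set()
    silver = []
    for row in bronze:
        if row.get("amount") is None or row["order_id"] in seen:
            continue
        seen.add(row["order_id"])
        silver.append({
            "order_id": row["order_id"],
            "region": row["region"].strip().lower(),   # normalize casing and whitespace
            "amount": float(row["amount"]),            # enforce a numeric type
        })
    return silver


def to_gold(silver: list[dict]) -> dict[str, float]:
    """Aggregate to a business-ready table: revenue per region."""
    gold: dict[str, float] = {}
    for row in silver:
        gold[row["region"]] = gold.get(row["region"], 0.0) + row["amount"]
    return gold


bronze = [   # raw, as ingested: duplicates, missing values, inconsistent casing
    {"order_id": "o1", "region": " EU ", "amount": "10.50"},
    {"order_id": "o1", "region": " EU ", "amount": "10.50"},
    {"order_id": "o2", "region": "us", "amount": None},
    {"order_id": "o3", "region": "US", "amount": "4"},
]
silver = to_silver(bronze)
gold = to_gold(silver)
```

The bronze data is left untouched, so a change to the cleaning rules can be applied by rerunning `to_silver` and `to_gold` over the same raw input.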

This pattern dominates lakehouse environments. Databricks popularized it, and the 2026 State of Data Engineering survey of 1,101 data professionals found that 27% now use lakehouse architectures where medallion is the standard data organization pattern.

Medallion is a better fit when the primary consumers are analysts and data scientists who need governed, trustworthy data at different stages of refinement, and sub-second latency is not a requirement.

Why this matters: Choosing the wrong pattern has lasting consequences. Migrating from Lambda to Kappa means rewriting your batch processing into streaming jobs and restructuring how you handle reprocessing.

Moving from Lambda to medallion means rethinking your entire data organization model. These are multi-month migration projects. Getting the pattern right upfront avoids expensive rewrites later.

How modern tools addressed Lambda’s biggest problems

Lambda architecture caught legitimate criticism for two specific issues: code duplication and operational complexity. Both were real problems in 2011-2014. Both are significantly less painful now.

The code duplication problem

The original critique: you write the same aggregation logic twice, once for Hadoop MapReduce and once for Storm. Two different languages, two different programming models, two different failure modes. Keeping them in sync was a nightmare.

Modern tools have largely solved this. Apache Spark exposes batch and streaming through the same DataFrame API (Structured Streaming handles the unbounded case), and Apache Flink processes both bounded and unbounded datasets through the same DataStream API. You can write one function and run it in either mode. Apache Beam takes this a step further by providing a single programming model that can execute on Spark, Flink, or Google Cloud Dataflow, depending on the runner you configure.

That said, “write once, run everywhere” is cleaner in theory than in practice. Performance tuning, state management, and windowing logic often differ enough between batch and streaming contexts that teams end up with specialized code paths regardless. The tools reduced the duplication, but they did not eliminate the architectural decision to run two systems.

The operational complexity problem

Running Hadoop, Storm, and a serving database was expensive in human time and infrastructure cost. Cloud-managed services have changed the equation. AWS offers Kinesis for streaming, EMR for batch, Athena for serving, and Glue for orchestration, all as managed services. Azure provides Event Hubs, HDInsight, and Synapse Analytics. GCP offers Pub/Sub, Dataflow (built on Apache Beam), and BigQuery. The ops burden of Lambda architecture drops substantially when you do not have to manage the clusters yourself.

Implementing Lambda architecture on cloud platforms

Each major cloud provider offers services that map cleanly to Lambda’s three layers. The specific service choices depend on your data volume, latency requirements, and team expertise.

The AWS implementation is the most common in enterprise deployments. A typical setup routes incoming events to Kinesis, which splits the stream into S3 for batch processing (via Spark on EMR) and a Flink application for real-time aggregation. Both paths write to a serving layer where Athena or Redshift handles queries. AWS’s own Lambda architecture whitepaper provides a reference implementation using this stack.

When to use Lambda architecture in 2026

Lambda architecture makes the most sense under specific conditions. Here are the scenarios where it earns its operational overhead.

Fraud detection and financial compliance. Banks need sub-second transaction scoring (speed layer) and overnight model retraining on the full transaction history (batch layer). The two workloads are fundamentally different: one runs inference, the other runs training. Lambda’s separation maps directly to this split.

IoT analytics and industrial monitoring. Sensor data from manufacturing equipment, oil platforms, or fleet vehicles needs real-time alerting (temperature spikes, pressure anomalies) and long-range trend analysis (equipment degradation over months). The speed layer handles alerting; the batch layer handles predictive maintenance models trained on months of history. Custom models trained on your specific sensor data and operating conditions consistently outperform generic platform offerings for these workloads by 30-50% on prediction accuracy.

Recommendation engines. E-commerce and content platforms use batch-computed collaborative filtering models (trained overnight on full user history) combined with real-time session-based personalization (speed layer adjusts recommendations based on what the user is doing right now).

Log analytics and security monitoring. Security teams need real-time alerting on suspicious patterns (speed layer) while also running retrospective analysis across weeks of logs to detect slow-burn attacks (batch layer).

If your use case does not involve fundamentally different processing logic for batch and stream, or if sub-second latency is not required, consider Kappa or medallion instead. Simpler architectures cost less to build and maintain.

Bottom line

Lambda architecture solved a genuine problem in 2011: streaming engines were immature, batch was accurate but slow, and you needed both. The pattern of running parallel processing paths and merging results in a serving layer remains valid for specific workloads, particularly those where batch and stream processing serve different analytical purposes.

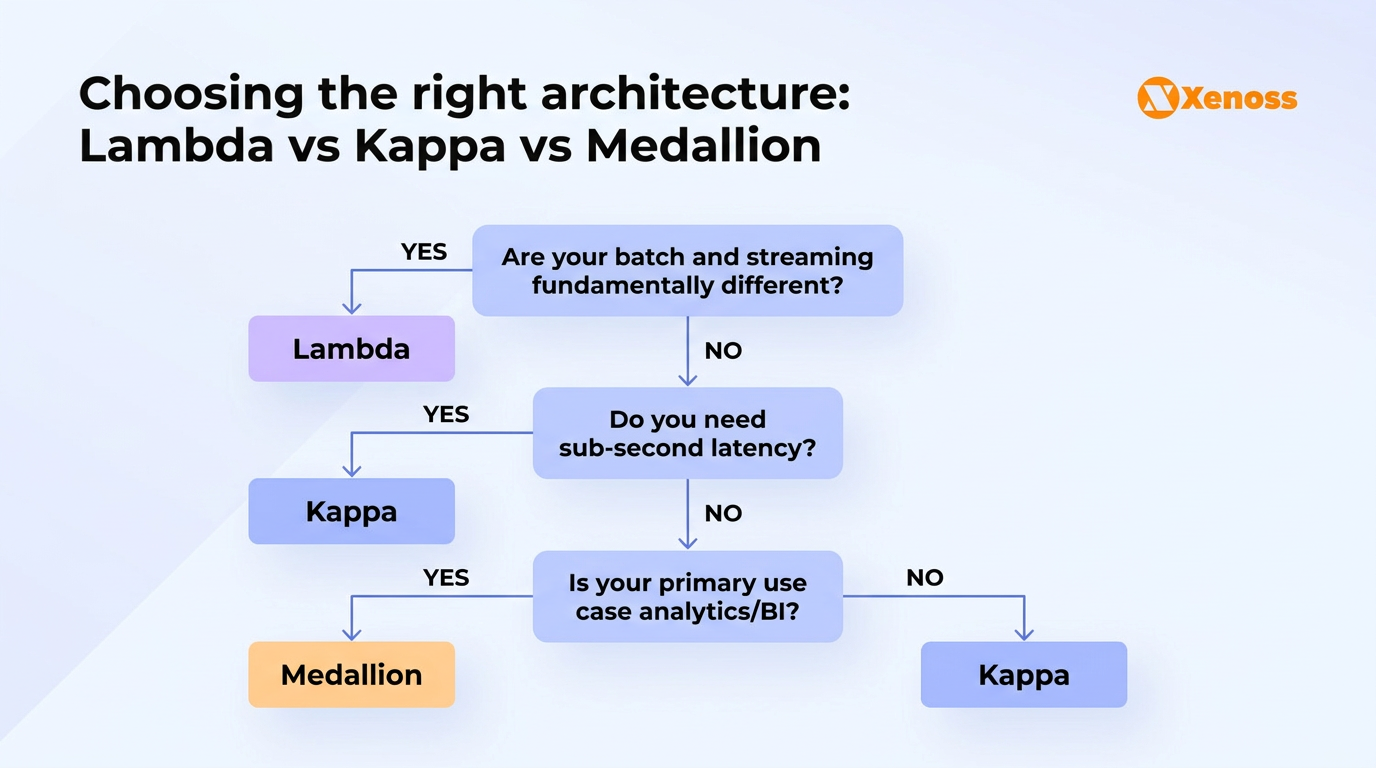

What has changed is the competitive landscape of alternatives. Kappa architecture, powered by Kafka and Flink, eliminates the dual-codebase problem when your batch and streaming logic are the same. Medallion architecture, native to lakehouse platforms, offers a simpler model for analytics-first environments. Choosing between them comes down to one question: are your batch and streaming workloads fundamentally different, or are they the same logic applied to different time windows? If different, Lambda. If the same, Kappa. If analytics-first without real-time requirements, medallion.
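That rule of thumb can be written down as a small decision function. This is only an encoding of the heuristic stated above, with hypothetical names (`Pattern`, `recommend_pattern`); it ignores cost, team skills, and existing infrastructure, all of which matter in a real decision.

```python
from enum import Enum


class Pattern(Enum):
    LAMBDA = "lambda"
    KAPPA = "kappa"
    MEDALLION = "medallion"


def recommend_pattern(needs_real_time: bool, batch_and_stream_logic_differ: bool) -> Pattern:
    """Encode the rule of thumb; a starting point for discussion, not a verdict."""
    if not needs_real_time:
        return Pattern.MEDALLION   # analytics-first, no real-time requirement
    if batch_and_stream_logic_differ:
        return Pattern.LAMBDA      # e.g. inference in the stream, training in batch
    return Pattern.KAPPA           # same logic applied to different time windows


fraud_detection = recommend_pattern(needs_real_time=True, batch_and_stream_logic_differ=True)
cdc_pipeline = recommend_pattern(needs_real_time=True, batch_and_stream_logic_differ=False)
bi_reporting = recommend_pattern(needs_real_time=False, batch_and_stream_logic_differ=False)
```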

For industrial and enterprise environments where real-time monitoring needs to coexist with deep historical analysis, including fraud detection, IoT sensor networks, and financial compliance, Lambda’s separation of concerns remains the right architectural bet. The tools have gotten better. The operational burden has dropped. The pattern holds.