In October 2025, Fivetran and dbt Labs signed a merger agreement, combining the two most widely adopted tools in modern data pipelines into a single company approaching $600 million in annual recurring revenue.

The deal was a signal: the era of modular, best-of-breed ETL tooling is consolidating into platform plays. Snowflake, Databricks, and Microsoft Fabric are all building full-stack data platforms. The dbt-Fivetran merger is the independent ecosystem’s answer to that trend.

For data engineering teams, this consolidation changes how you think about building ETL pipelines. The fundamental pattern (extract data from sources, transform it, load it into a destination) has not changed. But the tooling landscape, the build-vs-buy calculus, and the architectural expectations around real-time processing and AI workloads have shifted significantly.

This article covers how ETL pipelines work, the differences between ETL, ELT, and real-time streaming, how the tooling landscape has changed, and where off-the-shelf platforms stop and custom data engineering starts.

Summary

- An ETL pipeline extracts data from source systems, transforms it (cleaning, enriching, aggregating), and loads it into a destination like a data warehouse or data lake. It is the foundation of every analytics and AI data infrastructure.

- ELT has replaced ETL as the default for cloud-native teams. Cloud warehouses like Snowflake and BigQuery are powerful enough to handle transformation after loading, which simplifies pipeline architecture and reduces latency.

- The dbt-Fivetran merger is reshaping the tooling landscape. The combined company controls both ingestion and transformation, pushing the industry toward integrated platforms and reducing the number of independent choices available to data teams.

- Custom ETL pipelines outperform platforms for mission-critical data flows involving proprietary APIs, complex business rules, real-time streaming requirements, and regulatory compliance logic that no pre-built connector handles.

How an ETL pipeline works

An ETL pipeline moves data through three stages.

Extract pulls raw data from source systems: databases, SaaS applications, APIs, file systems, IoT sensors, or event streams. The extraction can be full (pulling all records each time) or incremental (pulling only records that changed since the last run).

Transform cleans, validates, enriches, and reshapes the extracted data to match the destination schema and business requirements. This includes data type conversions, deduplication, null handling, joins across sources, and applying business rules.

Load writes the transformed data to the destination system, typically a data warehouse, data lake, or operational database.

The order matters. In traditional ETL, data is transformed before it reaches the destination. This was necessary when destination systems (on-premises warehouses) had limited compute power. Modern cloud warehouses flipped this model, leading to the rise of ELT.

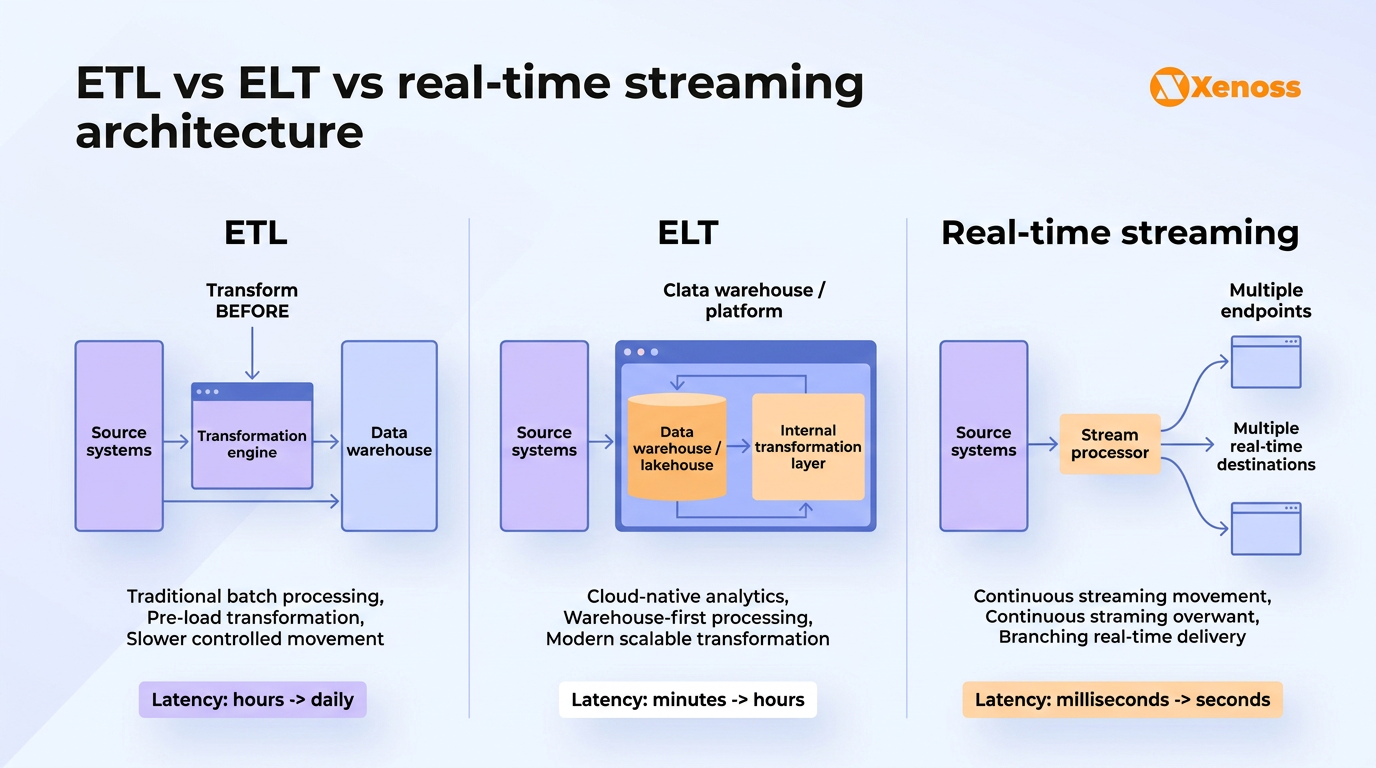

ETL vs ELT vs real-time streaming: Choosing the right pattern

Most ETL guides compare ETL and ELT. Few include real-time streaming as a third option even though the 2026 State of Data Engineering survey shows 27% of data professionals now use lakehouse architectures where streaming and batch coexist.

| ETL | ELT | Real-time streaming | |

|---|---|---|---|

| Transform location | Before loading (staging area or ETL server) | After loading (inside the warehouse) | In-flight (stream processor) |

| Latency | Minutes to hours (batch) | Minutes (batch, faster than ETL) | Seconds to milliseconds |

| Compute model | Dedicated ETL server or cluster | Warehouse compute (Snowflake, BigQuery, Redshift) | Stream processor (Flink, Kafka Streams) |

| Best for | Legacy systems, on-prem warehouses, heavy pre-processing | Cloud-native analytics, modern data stacks | Fraud detection, IoT monitoring, real-time dashboards |

| Typical tools | Informatica, Talend, SSIS, custom scripts | Fivetran + dbt, Airbyte + dbt, Stitch | Kafka + Flink, Spark Structured Streaming, Kinesis |

| Data freshness | Hours to daily | Minutes to hourly | Seconds |

| Complexity | Medium (dedicated infra) | Low (warehouse handles compute) | High (stateful processing, exactly-once semantics) |

| Cost driver | ETL server compute | Warehouse compute credits | Streaming infrastructure + state management |

For most analytics use cases, ELT is the default in 2026. Cloud warehouses are powerful enough to handle transformation at query time or through scheduled dbt jobs.

ETL still makes sense when you need to reduce data volume before loading (cost optimization for high-volume sources), when transformations require logic that SQL cannot express, or when the destination is a legacy system with limited compute.

Real-time streaming is necessary when the business requires sub-second data freshness, which applies to fraud detection, IoT analytics, real-time pricing, and operational alerting.

The ETL tooling landscape after the dbt-Fivetran merger

The dbt-Fivetran merger is the biggest structural change in ETL tooling since Snowflake popularized ELT. The combined company controls both data ingestion (Fivetran’s 500+ pre-built connectors) and transformation (dbt’s SQL-based modeling framework).

For data teams, this means tighter integration: dbt Cloud jobs now trigger automatically when Fivetran syncs complete, pipeline monitoring is consolidated into a single dashboard, and metadata flows between ingestion and transformation without custom glue code.

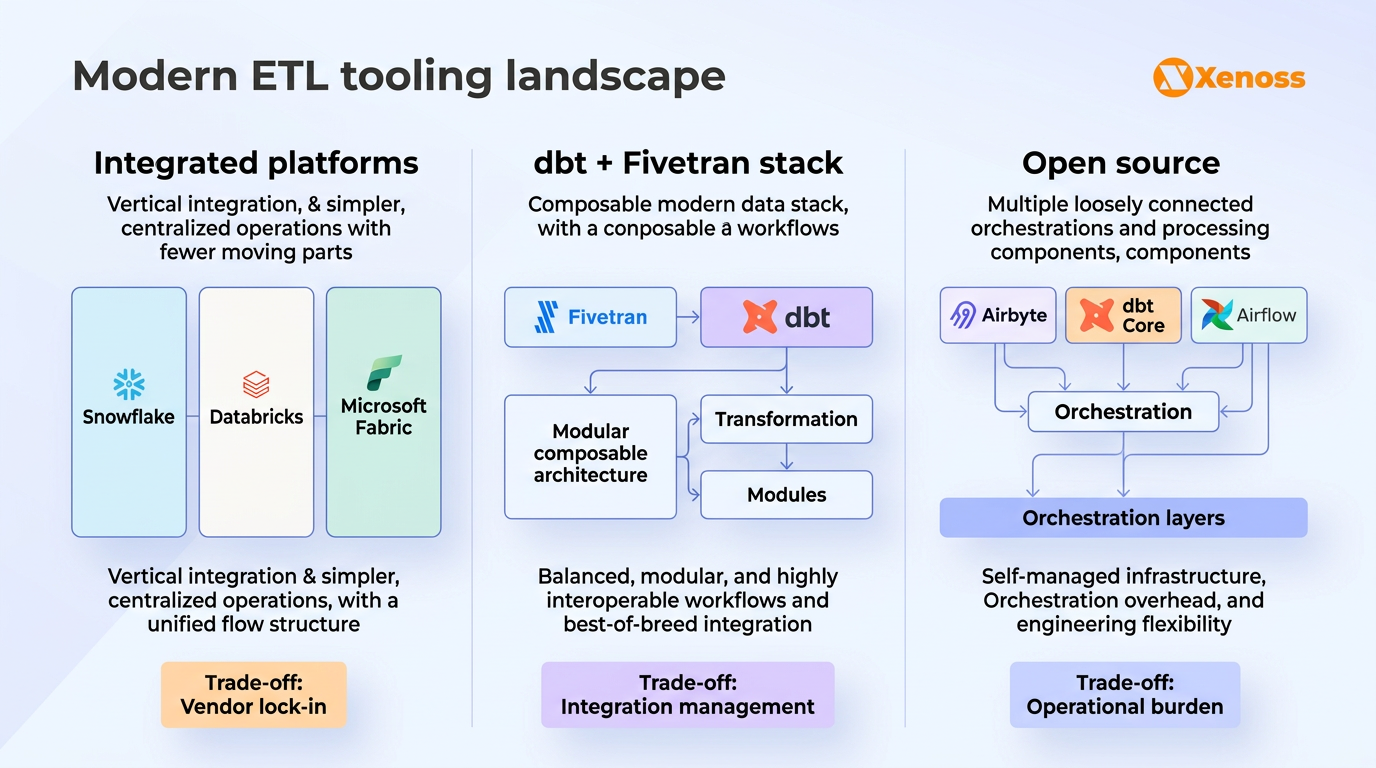

The broader tooling landscape now breaks down into three categories.

Integrated platforms. Snowflake, Databricks, and Microsoft Fabric each offer ingestion, transformation, storage, and analytics within a single ecosystem. Databricks’ Delta Live Tables handles both ETL and data quality monitoring. Snowflake’s Dynamic Tables automate incremental transformation. Fabric bundles Data Factory, Synapse, and Power BI. These platforms reduce operational overhead but create vendor lock-in.

The dbt-Fivetran stack. The merged company positions itself as “open data infrastructure” that works across any warehouse or compute engine. The combined approach: Fivetran extracts and loads, dbt transforms, and the platform stays warehouse-agnostic.

The trade-off: bundled pricing may reduce negotiating leverage, and the community has raised concerns about dbt Core receiving less innovation than dbt Cloud.

Open-source and modular stacks. Airbyte (extraction), dbt Core (transformation), and orchestration via Airflow, Dagster, or Prefect offer maximum flexibility and no licensing cost.

The trade-off is operational burden: teams must maintain connectors, manage upgrades, and handle infrastructure scaling themselves. For teams with strong data engineering capabilities, this remains a strong option.

Why this matters: The consolidation trend means fewer independent choices for data teams. If your architecture depends on Fivetran and dbt, you now have a single vendor controlling both ingestion and transformation. Teams that value vendor independence should evaluate open-source alternatives (Airbyte for EL, dbt Core for T) and design architectures where each layer can be swapped without rewriting everything else.

Where ETL platforms stop and custom pipelines start

Fivetran’s 500+ connectors and dbt’s SQL-based transformation framework cover a wide range of standard use cases. But “standard” is the keyword. Enterprise data environments are rarely standard across the board.

Custom ETL pipelines consistently outperform platforms in four specific scenarios.

Proprietary APIs and undocumented data sources. Every enterprise has systems that no pre-built connector supports: legacy ERP instances with custom schemas, internal APIs with proprietary authentication, industry-specific data formats (HL7 in healthcare, FIX protocol in finance, OPC-UA in manufacturing). When your critical source system is not in the connector catalog, you are back to writing custom extraction logic. The difference is whether you do it within a framework designed for reliability and monitoring, or as a one-off script that nobody maintains.

Complex business rules that SQL cannot express. dbt’s power comes from SQL. But not all transformation logic fits into SQL. Geospatial calculations on logistics data, ML feature engineering with windowed aggregations across multiple time horizons, statistical anomaly detection on financial transactions, or recursive graph traversals for supply chain dependency mapping all require Python, Spark, or custom code. When transformation logic exceeds what SQL can express, custom data pipelines become necessary.

Real-time streaming with exactly-once guarantees. ELT is inherently batch-oriented: extract, load, transform on a schedule. When the business requires sub-second data freshness with exactly-once processing semantics (fraud detection, real-time pricing, operational alerting), the architecture needs to shift from ELT to streaming. Apache Flink and Kafka Streams handle this, but integrating them into an enterprise data architecture alongside batch pipelines is a custom engineering effort that no ELT platform automates.

Regulatory compliance logic embedded in the pipeline. Healthcare organizations must enforce HIPAA de-identification rules during transformation. Financial institutions must apply KYC and AML screening logic before data reaches the analytics layer. GDPR-compliant AI systems require documented data lineage and PII handling at every transformation step. These are not “nice to have” configuration options. They are regulatory requirements that must be embedded in the transformation logic itself, tested, and auditable.

Why this matters: The build vs. buy analysis for ETL pipelines comes down to one question: is your data flow standard or mission-critical? For standard flows (SaaS application data into a warehouse for BI), platforms handle it well and the engineering time is not worth the overhead.

For mission-critical flows (real-time fraud scoring, regulatory compliance, proprietary data sources), custom pipelines deliver reliability, performance, and compliance that platforms cannot match. Most enterprise environments need both.

ETL pipelines for AI and ML workloads

Traditional ETL was designed to move data into warehouses for BI and reporting. AI workloads place different demands on the pipeline. ML models do not consume clean, aggregated tables. They consume features: engineered, versioned, point-in-time correct datasets that feed training and inference pipelines.

Three ETL patterns specific to AI workloads deserve attention.

Feature pipelines. Feature engineering (computing the inputs that ML models consume) is a transformation step, but it looks different from traditional ETL transformation. Features require windowed aggregations (average transaction amount over the last 30 days), cross-table joins with temporal constraints (customer attributes as of the prediction date, not as of today), and versioning (which feature definitions produced which model version). Feature stores like Feast, Tecton, and Databricks Feature Store centralize this, but the pipeline feeding the feature store is a custom ETL job tailored to your model’s specific input requirements.

Training data preparation. ML training data needs specific handling that standard ETL does not provide: stratified sampling to balance classes, data augmentation for underrepresented categories, train/test/validation splitting with temporal awareness (no future data leaking into the training set), and snapshot versioning so experiments can be reproduced. These are transformation steps that belong in the ETL pipeline but require ML-specific engineering, not SQL-based dbt models.

Inference data pipelines. Once a model is in production, it needs fresh input data at prediction time. For batch inference, this might be a nightly ETL job that prepares features for the next day’s predictions. For real-time inference, it is a streaming pipeline that computes features on the fly and serves them to the model endpoint with millisecond latency. The architecture of this pipeline directly affects model accuracy and latency, and it rarely maps to a standard ELT workflow.

Bottom line

The ETL pipeline pattern (extract, transform, load) is one of the oldest concepts in data engineering, and also one of the most actively evolving. ELT has replaced ETL as the default for cloud-native analytics. The dbt-Fivetran merger is consolidating the tooling landscape into integrated platforms. Real-time streaming is expanding the scope of what “pipeline” means beyond batch processing.

For data engineering teams, the practical question is not which pattern to use in the abstract, but which combination fits your architecture. Most enterprise environments run all three: ELT for standard analytics data, custom ETL for legacy and proprietary sources, and streaming for real-time use cases. The 2026 State of Data Engineering survey confirms this: 44% still use cloud warehouses (ELT), 27% use lakehouses (often mixing batch and streaming), and 25% cite legacy systems as their biggest bottleneck, which is exactly where custom pipeline engineering delivers the most value.

Platforms handle the standard flows. Custom engineering handles the critical ones. The organizations that get ETL right are the ones that know which is which.