Here is a number that should bother every supply chain executive: only 23% of supply chain organizations have a formal AI strategy, according to a Gartner survey of 120 supply chain leaders who had deployed AI in the past 12 months. The rest are investing project by project, without a defined roadmap. Gartner’s own term for the result: “franken-systems,” complex, layered architectures that do not talk to each other and cost more to maintain than they save.

The irony is that supply chain optimization is one of the areas where AI delivers the clearest returns. Shell monitors 10,000+ pieces of equipment using ML models that process 20 billion rows of data weekly and cut maintenance costs by 20%.

UPS estimates that eliminating a single mile per driver per day saves $50 million a year. Maersk uses AI to calculate fuel-efficient shipping routes in real time. The technology works. The problem is how organizations implement it.

This article covers where AI delivers the biggest supply chain cost reductions, what separates implementations that work from those that don’t, and why off-the-shelf tools consistently fall short for mission-critical logistics operations.

Summary

- AI supply chain optimization delivers measurable cost reductions in demand forecasting (up to 75% accuracy improvement), inventory management (25% reduction), and transportation (30% cost cut), according to industry benchmarks.

- Most supply chain AI initiatives lack strategic direction. Only 23% of organizations that have deployed AI have a formal strategy. The rest build disconnected, project-by-project solutions that add complexity without compounding value.

- Off-the-shelf platforms hit ceilings on domain-specific problems. Proprietary APIs, equipment-specific failure modes, SCADA/IoT integration, and edge deployment requirements consistently exceed what generic tools can handle.

- Custom AI solutions outperform generic tools on mission-critical flows by 30-50% on prediction accuracy when trained on your sensor data, maintenance history, and operating conditions.

Where AI delivers the biggest supply chain cost reductions

Supply chain optimization covers a wide territory, from raw material procurement to last-mile delivery. But AI does not deliver equal value everywhere. The highest-ROI applications cluster around three areas where the gap between human decision-making and machine capability is widest.

Demand forecasting and predictive analytics

Traditional demand forecasting relies on historical sales data, seasonal adjustments, and a healthy dose of manual override. The models are backward-looking and brittle. When conditions shift rapidly (geopolitical disruptions, sudden demand spikes, raw material shortages), these models break.

ML-based forecasting pulls in signals that statistical models can’t process: weather patterns, social media trends, competitor pricing changes, macroeconomic indicators, and real-time point-of-sale data. The accuracy gains are significant.

AI-driven supply chain forecasting reduces forecast errors by 20-50%, which translates directly into fewer stockouts and lower inventory costs. Gartner predicts that 70% of large organizations will adopt AI-based demand forecasting by 2030.
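
To make "forecast error reduction" concrete, here is a minimal sketch of how such a figure might be measured. It assumes weighted absolute percentage error (WAPE) as the metric, which is one common choice and not necessarily the one behind the 20-50% range quoted above. The function names and the demand numbers are illustrative assumptions, not data from any company mentioned here.

```python
def wape(actual, forecast):
    """Weighted absolute percentage error: sum(|a - f|) / sum(|a|)."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    total = sum(abs(a) for a in actual)
    if total == 0:
        raise ValueError("actual demand sums to zero; WAPE is undefined")
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / total


def error_reduction(baseline_error, new_error):
    """Relative reduction in error when moving from the baseline to the new model."""
    return (baseline_error - new_error) / baseline_error


# Illustrative weekly demand for one SKU (made-up numbers).
actual = [100, 120, 80, 150, 110]
baseline_forecast = [90, 100, 100, 120, 130]  # hypothetical statistical model
ml_forecast = [90, 110, 95, 135, 120]         # hypothetical ML model

baseline_wape = wape(actual, baseline_forecast)
ml_wape = wape(actual, ml_forecast)
reduction = error_reduction(baseline_wape, ml_wape)

print(f"baseline WAPE: {baseline_wape:.1%}")
print(f"ML WAPE:       {ml_wape:.1%}")
print(f"reduction:     {reduction:.0%}")
```

Agreeing on the metric and the baseline before a pilot starts matters more than the formula itself: the same pair of models can show very different "improvement" under different error measures.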

American Tire Distributors, for example, switched from fixed forecast intervals to dynamic AI-driven planning using ToolsGroup’s probabilistic forecasting engine. The shift let their team collaborate on demand-responsive decisions with both suppliers and retailers instead of reacting to outdated weekly projections.

Inventory optimization

Overstocking ties up working capital. Understocking loses sales. The sweet spot between the two is narrow, changes daily, and varies by SKU, location, and season. AI models optimize this tradeoff continuously, adjusting reorder points and safety stock levels based on real-time demand signals rather than static rules.
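
The reorder point and safety stock mechanics can be sketched with the textbook formula. This is a simplified illustration that assumes roughly normal, independent daily demand and a fixed lead time; production systems typically relax both assumptions. The function names and the sample demand figures are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def safety_stock(daily_demand, lead_time_days, service_level=0.95):
    """Safety stock under the normal-demand, fixed-lead-time assumption."""
    z = NormalDist().inv_cdf(service_level)  # z-score for the target service level
    return z * stdev(daily_demand) * sqrt(lead_time_days)


def reorder_point(daily_demand, lead_time_days, service_level=0.95):
    """Expected demand over the lead time plus the safety stock buffer."""
    expected = mean(daily_demand) * lead_time_days
    return expected + safety_stock(daily_demand, lead_time_days, service_level)


# Illustrative daily unit sales for one SKU at one location (made-up numbers).
recent_demand = [48, 52, 50, 47, 55, 49, 51, 53, 46, 49]

rop_95 = reorder_point(recent_demand, lead_time_days=7, service_level=0.95)
rop_99 = reorder_point(recent_demand, lead_time_days=7, service_level=0.99)

print(f"reorder point at 95% service level: {rop_95:.0f} units")
print(f"reorder point at 99% service level: {rop_99:.0f} units")
```

In this simplified form, "continuous" adjustment just means recomputing the reorder point per SKU and location whenever the recent demand window changes, instead of fixing it once in a static rule.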

Gaviota, an automated sun protection manufacturer, deployed AI-powered inventory optimization and achieved a 43% reduction in stock levels, slashing inventory from 61 to 35 days while maintaining service level targets.

In the energy sector, bp used AI-driven optimization to substantially reduce working capital locked in inventory, with real-time tracking improving operational cash flow projections.

Route optimization and transportation costs

Transportation is often the single largest line item in supply chain costs. AI-powered route optimization considers variables that human planners cannot process simultaneously: traffic conditions, weather, delivery windows, vehicle capacity, fuel prices, driver schedules, and real-time disruption events.
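
As a toy illustration of what a route optimizer does, the sketch below orders stops with a nearest-neighbor heuristic and compares the result with visiting stops in the order they were entered. It is an assumption-laden simplification: it uses straight-line distance only and ignores traffic, delivery windows, capacity, and every other variable listed above. It is not how DHL, UPS, or Maersk implement routing.

```python
from math import dist


def route_length(depot, stops):
    """Total distance of depot -> stops in the given order -> depot."""
    path = [depot, *stops, depot]
    return sum(dist(a, b) for a, b in zip(path, path[1:]))


def nearest_neighbor_route(depot, stops):
    """Greedy heuristic: always drive to the closest unvisited stop."""
    remaining = list(stops)
    route = []
    current = depot
    while remaining:
        nxt = min(remaining, key=lambda s: dist(current, s))
        remaining.remove(nxt)
        route.append(nxt)
        current = nxt
    return route


# Hypothetical coordinates (arbitrary units), listed in order-entry sequence.
depot = (0.0, 0.0)
stops = [(5.0, 0.0), (1.0, 0.0), (4.0, 0.0), (2.0, 0.0)]

as_entered = route_length(depot, stops)
optimized = nearest_neighbor_route(depot, stops)
improved = route_length(depot, optimized)

print(f"as entered: {as_entered:.1f}, nearest neighbor: {improved:.1f}")
```

Nearest neighbor is fast but can be far from optimal on realistic networks. Production systems generally combine stronger solvers with live data feeds, which is where the additional variables come in.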

DHL’s optimization engine analyzes 58 different parameters to determine delivery routes, delivering a 15% reduction in vehicle miles and a 10% decrease in carbon emissions.

UPS’s ORION system produces route savings at a scale where a single mile per driver per day translates to $50 million in annual savings.

Maersk uses AI to optimize container loading, route planning, and scheduling, factoring in real-time weather data for fuel-efficient routing.

Why this matters: Shell, UPS, DHL, and Maersk have been running these systems at production scale for years. The technology is proven. The question for most organizations is how to implement it without creating the fragmented, expensive “franken-systems” that Gartner warns about.

Why most supply chain AI projects underdeliver

On one hand, AI-driven supply chain optimization can reduce transportation costs by 30%, decrease inventory by 25%, and improve forecast accuracy by 75%.

On the other hand, 77% of supply chain professionals still haven’t integrated AI into their operations, and Gartner predicts that 60% of supply chain digital adoption efforts will fail to deliver promised value by 2028.

Three patterns explain why organizations struggle to close the gap between AI’s potential and their own results.

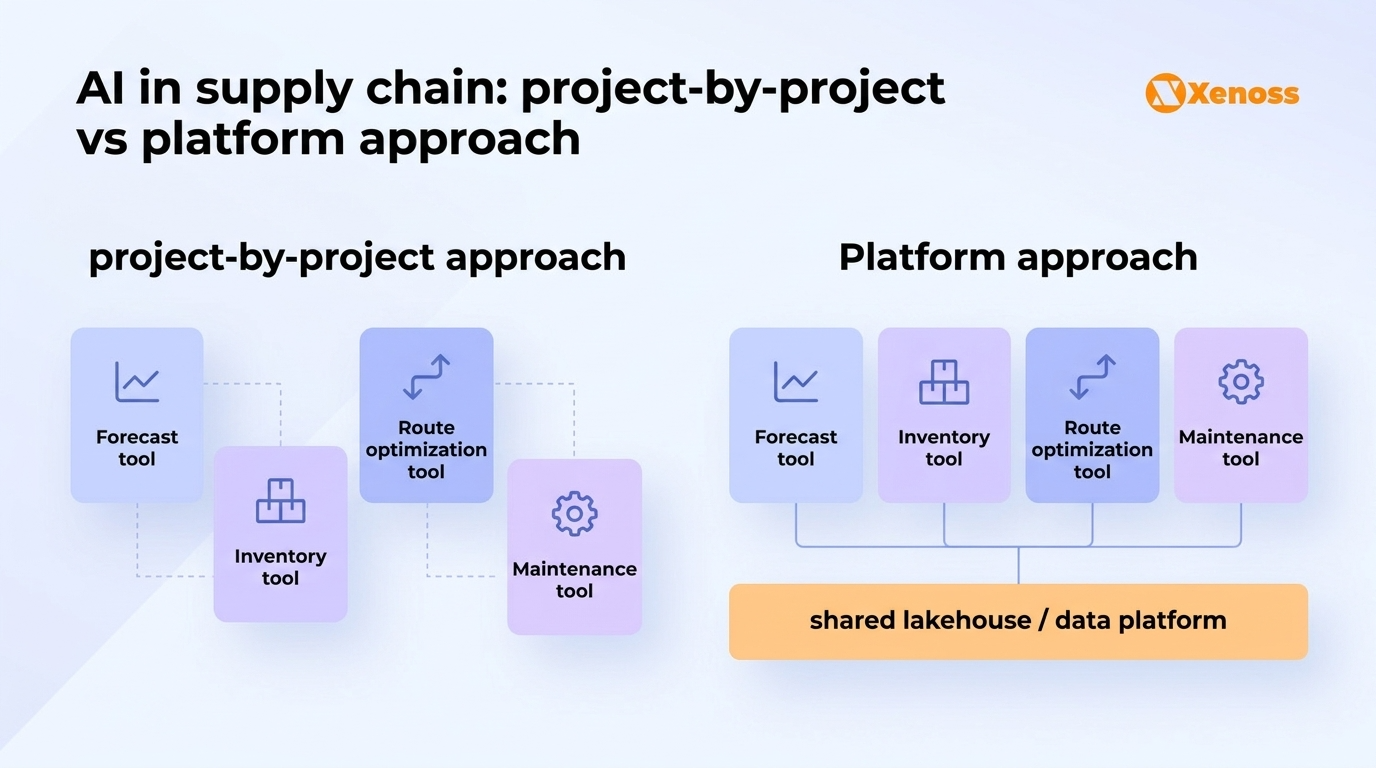

Project-by-project investment without a strategy. Gartner’s survey found that most chief supply chain officers focus on short-term wins rather than building a defined AI investment strategy. Each team picks a tool, solves a narrow problem, and moves on. Over time, the organization accumulates a stack of disconnected point solutions: one for demand planning, another for warehouse optimization, a third for route planning. None of them shares data, models, or governance frameworks. Maintaining the stack costs more than any individual tool saves.

Technology-first, domain-second thinking. Organizations buy a platform because it looks impressive in a demo, then try to fit their supply chain problems into the platform’s capabilities. This is backward. Across Xenoss client engagements, 80% of AI project success comes from proper problem analysis and domain understanding, not from choosing the right vendor. A demand forecasting model trained on generic retail data will not work for a manufacturer with 6-week lead times from China and volatile raw material pricing.

Treating AI as automation, not as a decision system. The most common first move is automating a manual task: generating purchase orders, classifying supplier invoices, producing demand reports. These are valid starting points, but they tap into maybe 10% of AI’s supply chain potential. The real value comes when AI moves from automating tasks to informing decisions: which suppliers to prioritize during a shortage, where to pre-position inventory before a predicted demand spike, and whether to reroute a shipment based on real-time port congestion data.

Why this matters: A Gartner survey of 509 supply chain leaders found that 86% say agentic AI adoption will require new processes for developing talent pipelines. The technology is changing not just what supply chain teams do, but how they are structured. Organizations that treat AI implementation as a procurement exercise (buy tool, plug it in, wait for results) will keep underdelivering.

Why off-the-shelf tools fall short for supply chain optimization

Platforms like SAP Integrated Business Planning, Blue Yonder, and Kinaxis offer solid baseline capabilities for demand planning, inventory optimization, and supply chain visibility. For organizations with standard supply chains and mature data infrastructure, they are a reasonable starting point.

They start breaking down when the supply chain has any of the following characteristics:

Proprietary equipment and sensor data. Manufacturing supply chains generate data from SCADA systems, IoT sensors, PLCs, and custom instrumentation that no off-the-shelf platform natively supports. Shell’s predictive maintenance system processes data from 3 million data streams, not because a vendor offered that capability, but because Shell built custom ML models trained on their specific equipment and failure patterns. Generic platforms lack equipment-specific failure modes, and the connector limitations of standard tools create bottlenecks that only get worse as you add more data sources.

Edge deployment requirements. Warehouse operations, fleet management, and remote manufacturing facilities often need AI models running at the edge, where connectivity is unreliable and latency is unacceptable. Off-the-shelf supply chain platforms are cloud-centric. They assume stable internet, reasonable latency, and centralized compute. For a port terminal processing thousands of container movements per hour, or an oil platform in the North Sea, that assumption does not hold.

Complex business rules and regulatory compliance. Pharmaceutical supply chains must track chain-of-custody for every shipment. Food and beverage companies must manage cold chain integrity with per-SKU temperature thresholds. Defense contractors must enforce ITAR compliance on every logistics decision. These are not features you configure in a vendor dashboard. They are domain-specific rules that need to be embedded in the optimization logic itself, which requires custom development.

Cross-system integration with legacy infrastructure. Most enterprise supply chains run on a patchwork of ERP systems, warehouse management platforms, transportation management systems, and custom databases accumulated over decades. Integrating these systems through a generic AI platform’s pre-built connectors rarely works for the critical data flows. Custom ETL handles proprietary APIs, complex transformation logic, and the real-time streaming requirements that mission-critical supply chain operations demand.
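
The point about compliance rules living inside the optimization logic can be sketched as follows. In this hypothetical example, a per-SKU cold chain limit is a hard constraint that filters candidate lanes before cost is considered, so the optimizer never trades compliance for savings. All class names, fields, and numbers are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Shipment:
    sku: str
    max_temp_c: float  # per-SKU cold chain limit (hypothetical field)


@dataclass(frozen=True)
class Lane:
    name: str
    cost: float
    worst_case_temp_c: float  # warmest temperature expected on this lane


def cheapest_compliant_lane(shipment, lanes):
    """Apply the temperature rule as a hard constraint, then minimize cost."""
    feasible = [l for l in lanes if l.worst_case_temp_c <= shipment.max_temp_c]
    if not feasible:
        return None  # no compliant option: escalate instead of shipping
    return min(feasible, key=lambda l: l.cost)


lanes = [
    Lane("ambient truck", cost=100.0, worst_case_temp_c=25.0),
    Lane("reefer truck", cost=180.0, worst_case_temp_c=6.0),
    Lane("air freight, cooled", cost=400.0, worst_case_temp_c=5.0),
]

vaccine = Shipment(sku="VAX-001", max_temp_c=8.0)
snack = Shipment(sku="SNK-042", max_temp_c=30.0)

print(cheapest_compliant_lane(vaccine, lanes).name)
print(cheapest_compliant_lane(snack, lanes).name)
```

The design choice worth noting is the order of operations: feasibility first, cost second. Encoding the rule as a penalty term instead would let a large enough cost saving override it, which is rarely acceptable for regulated flows.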

Why this matters: The build vs. buy analysis for supply chain AI consistently favors custom development for the data flows that matter most. Generic tools handle 80% of use cases adequately. The remaining 20%, the use cases that involve proprietary data, edge deployment, or regulatory compliance, are where competitive advantage lives and where off-the-shelf platforms consistently fall short.

What to build custom and what to buy off the shelf

Not every supply chain AI capability needs to be custom-built. The right approach is a layered strategy that combines platform capabilities with custom models where they create the most value.

| Capability | Buy (platform) | Build (custom) |
|---|---|---|
| Demand forecasting | Standard retail/CPG forecasting with clean POS data | Forecasting with proprietary signals (sensor data, IoT, custom market indicators) |
| Inventory optimization | Single-warehouse, standard SKU replenishment | Multi-echelon optimization with cross-border constraints and perishability rules |
| Route optimization | Standard last-mile delivery routing | Multi-modal logistics with real-time port congestion, ITAR compliance, or cold chain monitoring |
| Predictive maintenance | Basic threshold-based alerting | Equipment-specific failure prediction trained on your sensor data and maintenance history |
| Supplier risk assessment | Credit scoring and basic risk profiling | Multi-factor risk scoring with geopolitical signals, ESG data, and proprietary supply network mapping |
| Warehouse automation | Pick/pack optimization for standard layouts | Computer vision quality control, robotic orchestration, edge-deployed sorting logic |
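
The predictive maintenance row is a useful illustration of the buy/build split. The sketch below contrasts a single fixed threshold with a check against each machine's own history. The per-equipment baseline here is a simple z-score, a deliberately minimal stand-in for a trained failure model, and all names and sensor readings are made up.

```python
from statistics import mean, stdev


def fixed_threshold_alert(reading, limit):
    """Generic rule: alert only when a fleet-wide limit is exceeded."""
    return reading > limit


def baseline_alert(history, reading, k=3.0):
    """Equipment-specific rule: alert when a reading deviates more than
    k standard deviations from this machine's own history."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return reading != mu
    return abs(reading - mu) / sigma > k


# Hypothetical bearing temperatures in degrees Celsius.
pump_a_history = [40.1, 39.8, 40.3, 40.0, 39.9, 40.2]  # normally runs cool
pump_b_history = [69.5, 70.2, 70.0, 69.8, 70.4, 70.1]  # normally runs hot

FLEET_LIMIT_C = 75.0  # assumed generic limit

# Pump A jumps to 48 C: well under the fleet limit, far outside its own norm.
print(fixed_threshold_alert(48.0, FLEET_LIMIT_C))  # generic rule
print(baseline_alert(pump_a_history, 48.0))        # per-equipment rule

# Pump B at 70.3 C is ordinary for that machine.
print(baseline_alert(pump_b_history, 70.3))
```

The generic limit misses the drift on pump A because it was set for the whole fleet. Catching it requires data from that specific machine, which is the core of the argument for building this capability custom.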

The custom components should share a common data platform and governance framework with the off-the-shelf tools.

This prevents the “franken-system” problem: each piece serves a distinct purpose, but they all read from and write to the same governed data layer. Xenoss engineers typically implement this as a lakehouse architecture where platform tools and custom models co-exist on the same storage and metadata catalog.

Bottom line

Supply chain optimization with AI is not a technology problem anymore. The models work, the compute is available, and the ROI is well-documented. Shell, UPS, DHL, Maersk, and dozens of other organizations have proven that at scale.

The problem is implementation strategy. Only 23% of supply chain organizations have a formal AI strategy. The rest are building disconnected point solutions that add complexity without compounding value. Gartner expects 60% of supply chain digital adoption efforts to fail to deliver promised value by 2028, specifically because organizations underinvest in the domain expertise and integration work that make AI deliver on its promise.

For organizations running complex supply chains with proprietary equipment, regulatory constraints, or legacy infrastructure, the path forward is a layered approach: use platforms for standard capabilities, build custom where your competitive advantage lives, and connect everything through a shared data layer that prevents the “franken-system” accumulation. The 20% of supply chain problems that generic tools cannot solve are worth 80% of the optimization value.