In 2000, a Barron’s cover story asked, “When will the Internet bubble burst?” and answered: “That unpleasant popping sound is likely to be heard by the end of this year.” The dot-com crash followed soon after, and most high-profile tech companies suffered dramatic stock losses throughout 2000-2001.

The collapse spread across the technology sector, wiping out trillions in market value and ending the first major internet investment boom.

Now we are standing at the edge of a different bubble, this time in AI, inflated by a rapid surge in AI advances and by billion-dollar AI labs such as OpenAI, Anthropic, and many others.

Since OpenAI released ChatGPT in 2022, generative AI has attracted billions in funding and intense corporate attention. ChatGPT became one of the fastest-growing consumer applications in history, with user adoption rates exceeding Google’s early growth trajectory.

Morgan Stanley recently estimated that AI will bring $920 billion in annual savings to the American economy, and it puts the technology’s long-term value at $13 to $16 trillion.

Both Google and Meta beat Q2 2025 revenue expectations, and both credited the strong quarter to new opportunities unlocked by AI adoption (AI Overviews for Google and ‘greater efficiency and gains across the ad ecosystem’ for Meta).

But the cracks in AI optimism are starting to show.

August 2025 brought several warning signs that triggered market volatility and renewed speculation about whether AI represents an unsustainable bubble that could significantly impact the global economy.

Here are the most frequently cited reasons why AI may be a bubble that’s about to pop.

The MIT study: 95% of AI pilots fail

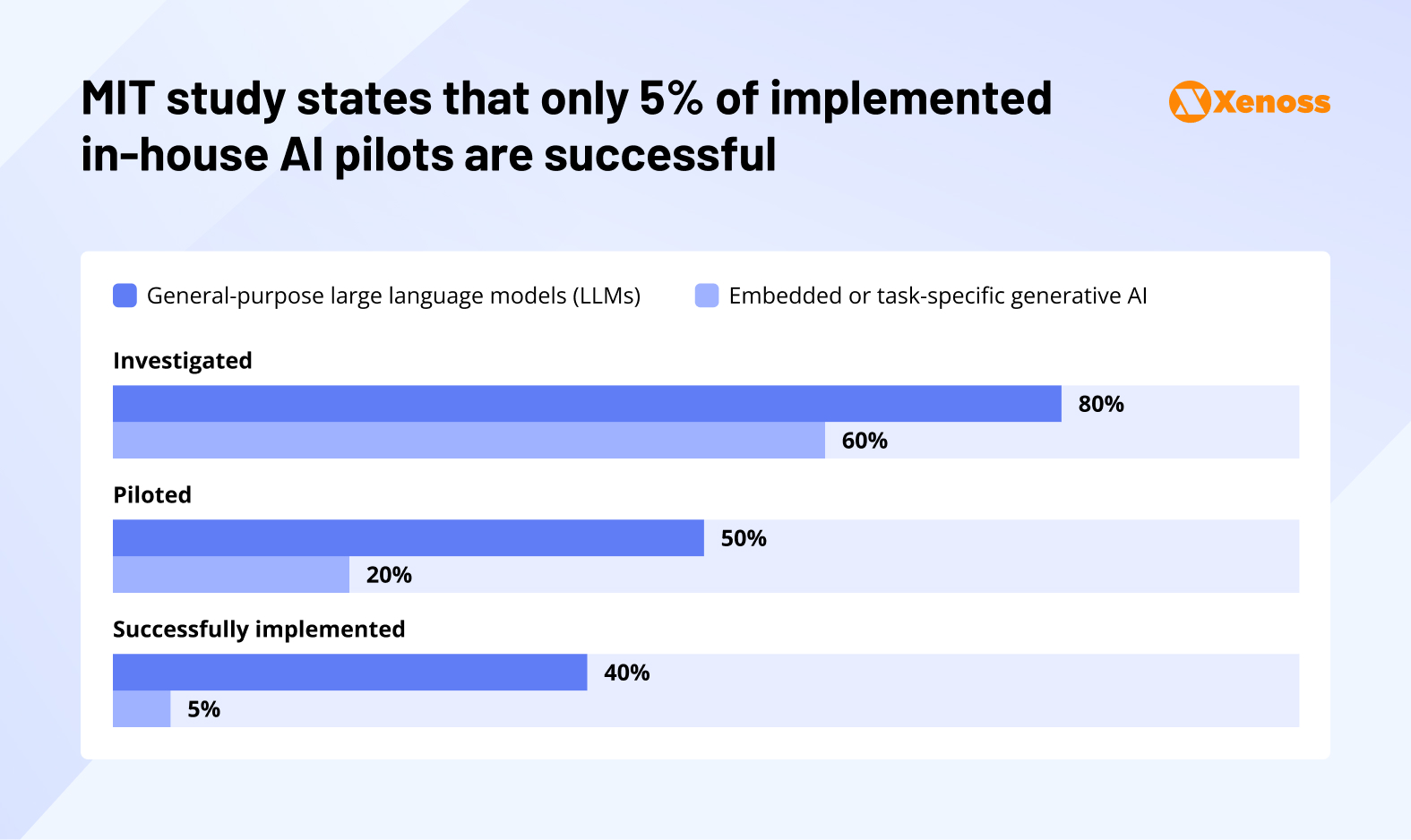

Researchers from MIT’s NANDA initiative recently published a study of the returns on 300 corporate AI initiatives, and the results are sending ripples across Silicon Valley.

Researchers discovered that 95% of companies get no returns on their AI investments despite having collectively invested around $40 billion into generative AI chatbots, AI agents, and other frontier applications.

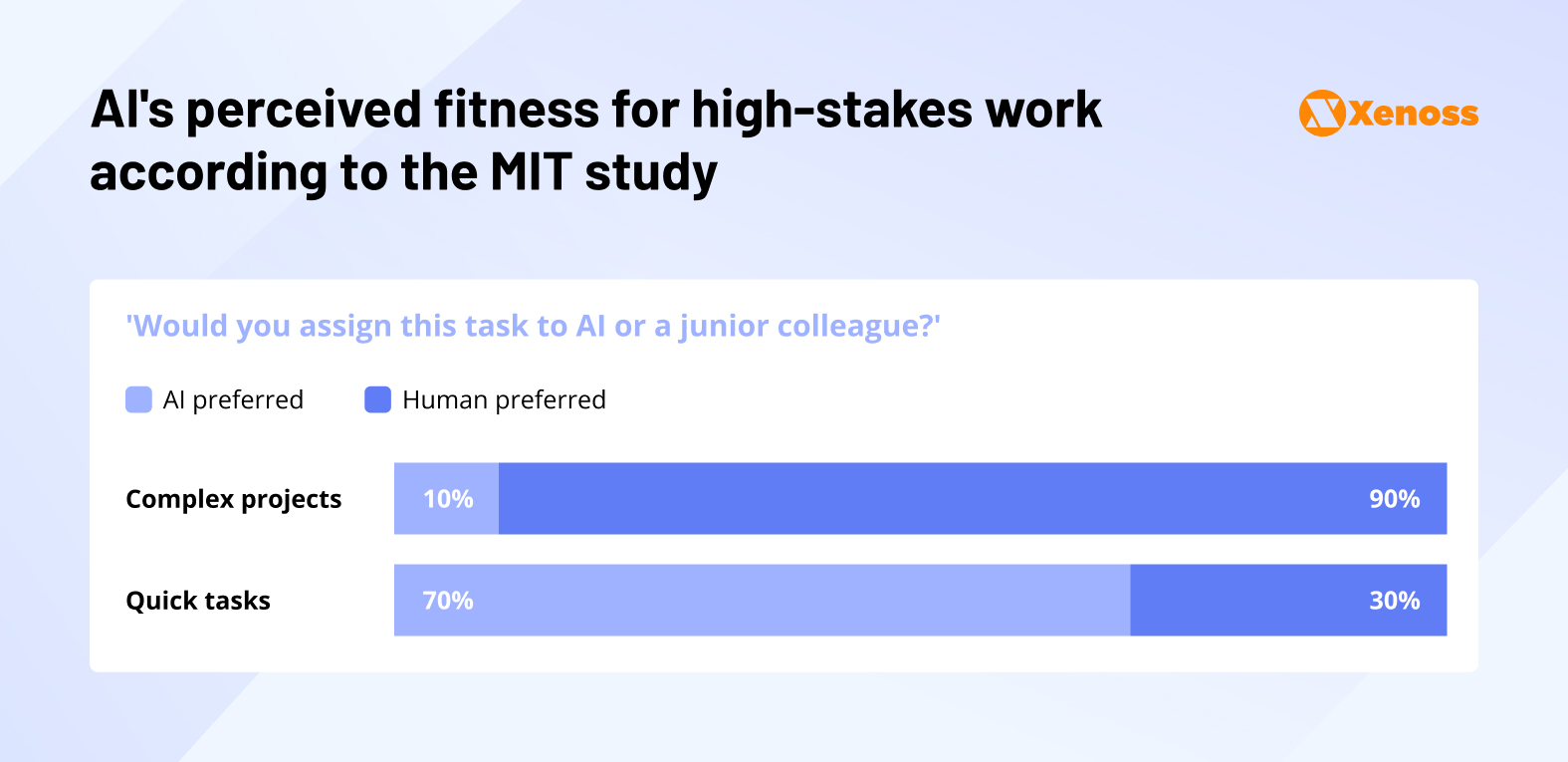

The study also notes that AI’s impact on the workforce may not be as transformative as executives would like. Among surveyed executives, 90% still prefer humans to handle complex projects, while about 70% are confident in AI handling quicker tasks.

It’s worth noting that media coverage of the MIT study has lacked nuance. The full study does not support an ‘AI doomer’ outlook. Counterbalancing its grimmer findings, the researchers report that employees like using AI to automate their work but prefer to keep it under the radar because of job security concerns.

AI is already transforming work, just not through official channels.

Nonetheless, the cherry-picked bottom line of the MIT study had a powerful ripple effect in the AI community and is now fueling the new wave of bubble concerns.

OpenAI CEO warns of AI bubble amid mixed GPT-5 reception

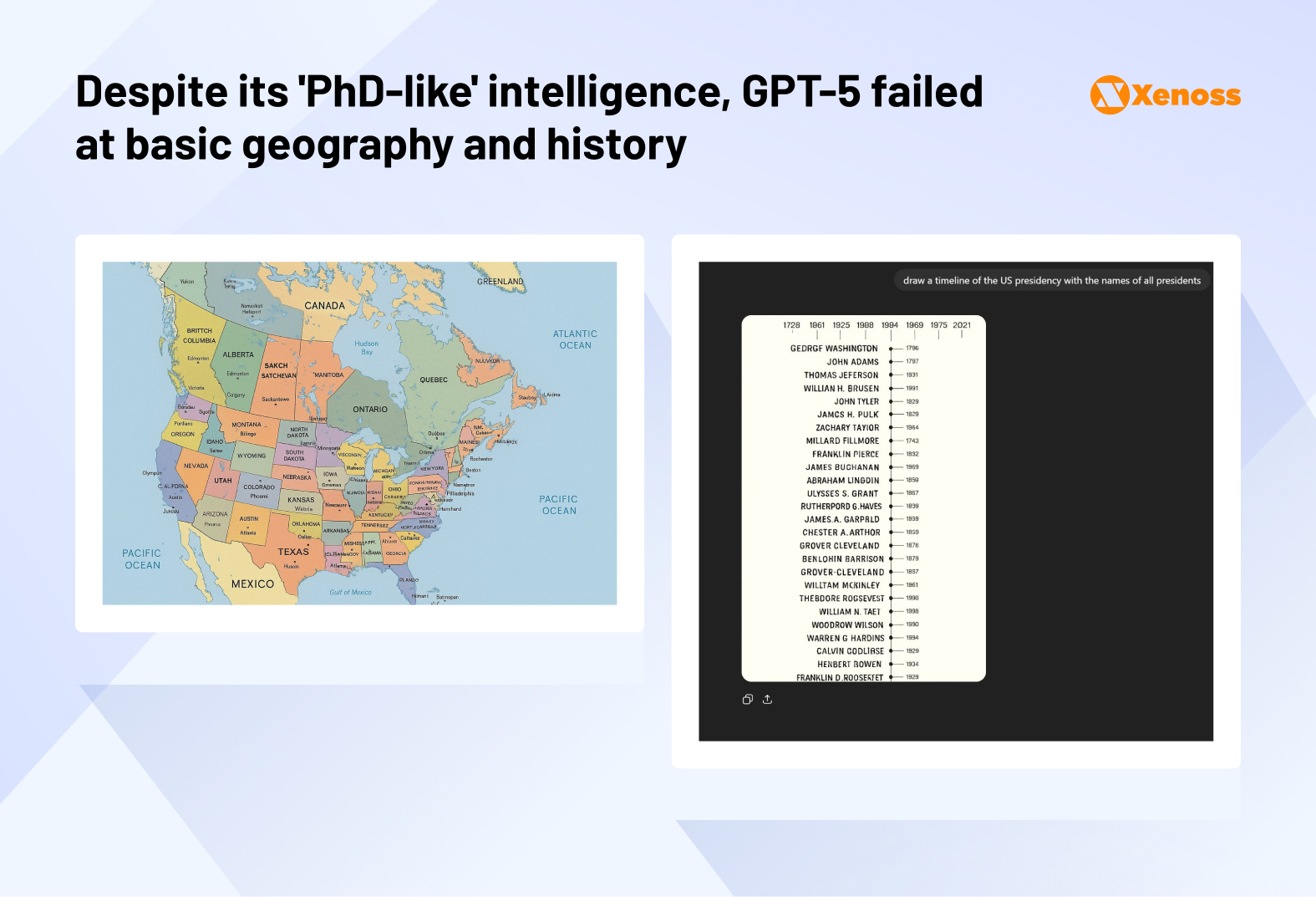

A few weeks before the MIT study, OpenAI unveiled GPT-5, a model hyped as near-AGI and a quantum leap in AI history. When presenting it to the public, Sam Altman compared the jump from GPT-4 to GPT-5 to the difference between ‘talking to a college student vs talking to a PhD’.

But when the Internet got a chance to use GPT-5, there was an outcry of disappointment.

People complained about GPT-5 generating geographically inaccurate maps, and when asked to name twelve U.S. presidents, it returned made-up names.

Naturally, computer scientists fired back, saying that asking ChatGPT to generate a map of Europe is no test of its ability to solve complex problems, an area where GPT-5 still excels.

However, even those who acknowledged that the model was capable agreed that it was not groundbreaking.

During a private sit-down with the media, journalists asked Sam Altman if he thought AI was a bubble. The OpenAI CEO’s answer was “In my opinion, yes”.

Commenting on the rapid pace at which newly founded AI companies (like Ilya Sutskever’s Safe Superintelligence and Mira Murati’s Thinking Machines Lab) reach billion-dollar valuations, he added, “That’s not rational behavior. Someone’s gonna get burned there, I think.”

When this warning comes from one of the people responsible for ‘blowing the bubble’, investors tend to listen.

AI darlings dim on Wall Street

After the MIT study reported that most AI initiatives fail to deliver returns, shares of NVIDIA, a trillion-dollar chipmaker, fell 3.5%.

Palantir Technologies, a data analytics company that supports AI projects, saw its stock drop 9.4%.

Meta joined the slump, with its shares sinking 2.1%.

Meta scales back AI recruitment following market volatility

Last month, Meta’s Superintelligence team made headlines with an aggressive hiring spree, in which Zuckerberg himself hand-picked promising AI engineers, often employed at rival labs, and tried to poach them with above-market salary packages.

Some of these offers defied common sense, such as the reported $1 billion offer Meta made to Thinking Machines Lab cofounders.

But according to reports on Meta’s latest internal decisions, the company has frozen AI hiring. It will now hire only a small number of engineers, each personally approved by Alexandr Wang, who leads Meta’s Superintelligence project.

Industry analysts suggest the hiring pullback reflects concerns about market volatility, mixed reception of recent AI launches, and growing investor skepticism about AI valuations.

Investors are facing a ‘reality check’

In the same conversation in which he called AI a bubble, Sam Altman added, “Are investors overexcited? In my opinion, yes.”

Indeed, private investment in AI has hit an all-time high, with American investors pouring over $109 billion into AI projects.

They may be the first ‘casualties’ in the AI bubble burst, and the signs are already there.

Following the MIT report, shares of SoftBank, one of OpenAI’s leading investors, fell 10%.

Despite bubble fears, big tech keeps investing in AI

Despite market fears and grim predictions from analysts, big tech is committed to its AI bets.

Meta recently committed $10 billion to a ‘supercluster’ of data centers in Louisiana.

The project, which Mark Zuckerberg dubbed ‘Hyperion’, is expected to add over 2 gigawatts of computing capacity (the energy equivalent of four million homes) to Meta’s infrastructure.

The company plans to use this compute to power its superintelligence research.

In July 2025, Google announced it would invest $25 billion in data centers and AI infrastructure across the region served by the largest electric grid in the US, plus an extra $3 billion to modernize two hydropower plants, all to help meet its growing energy demands.

OpenAI’s Sam Altman has also said the company expects to spend ‘trillions’ on data centers in the coming years.

The Xenoss take

Two questions can help us judge whether AI is a bubble and, if it is, what the aftermath of a burst might look like.

One, where is the ‘ceiling’ for AI? GPT-5’s failure to live up to expectations left many people wondering how much more substantial progress AI can deliver and whether superintelligence is an attainable goal to begin with.

Two, what is AI’s potential to add real value to the economy? Is the truth closer to Morgan Stanley’s optimistic forecast or to the MIT study’s sobering findings? If AI fails to deliver the productivity gains we herald it with, the multi-billion-dollar investments companies are pouring into it will have been in vain.

These concerns warrant closer examination.

AI technical limitations: Are scaling laws reaching their limits?

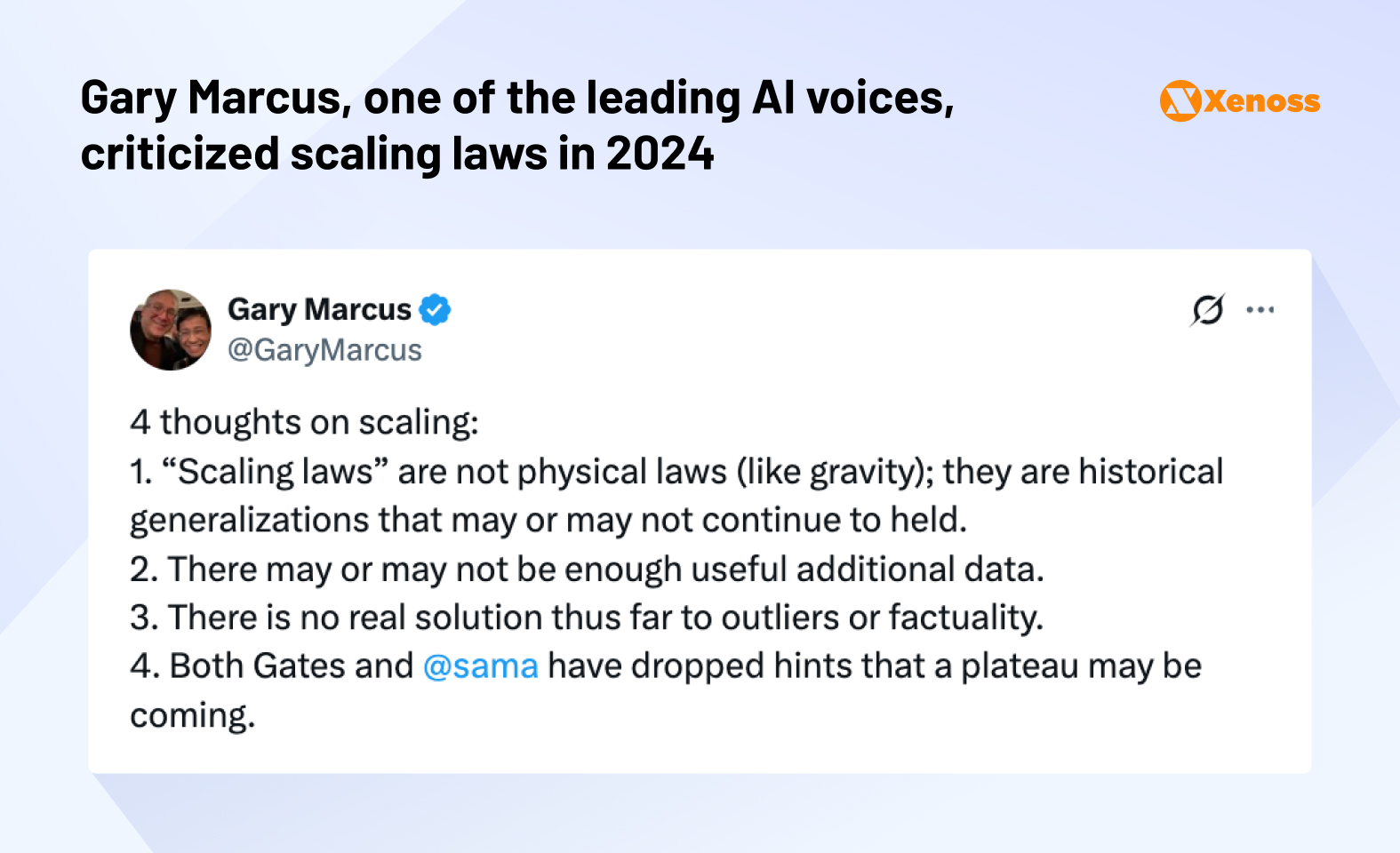

The current wave of progress in AI traces back to ‘scaling laws,’ a key idea presented in the paper ‘Scaling Laws for Neural Language Models,’ published by OpenAI in 2020. In simplified terms, the paper showed that a language model’s performance improves predictably as its size, training data, and compute grow.

OpenAI backed up its claims by releasing GPT-3, which significantly outperformed GPT-2.

The company then scaled up further and reached even better results with GPT-4. AGI seemed so near that Microsoft published a paper titled ‘Sparks of Artificial General Intelligence,’ but around that point progress slowed, and confidence in scaling laws began to wane.
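The paper expresses this relationship as a power law: test loss falls as a fixed power of dataset size (and, similarly, of model size and compute). The sketch below assumes the form L(D) = (D_c / D)^α with constants roughly of the order the paper reports for dataset size. Treat the numbers as illustrative, and note that `loss_from_data` and `improvement_per_10x` are hypothetical helpers written for this example.

```python
"""Illustrative sketch of a power-law scaling curve (assumed form and constants)."""

# Assumed constants, roughly of the order reported for dataset-size scaling in
# 'Scaling Laws for Neural Language Models' (2020); illustrative only.
ALPHA_D = 0.095  # scaling exponent for dataset size
D_C = 5.4e13     # normalizing constant, in tokens


def loss_from_data(tokens: float, alpha: float = ALPHA_D, d_c: float = D_C) -> float:
    """Predicted test loss L(D) = (D_c / D) ** alpha for a dataset of `tokens` tokens."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return (d_c / tokens) ** alpha


def improvement_per_10x(alpha: float = ALPHA_D) -> float:
    """Fractional loss reduction from multiplying the dataset size by 10.

    Under a pure power law this is identical at every scale: 1 - 10 ** (-alpha).
    """
    return 1 - 10 ** (-alpha)


if __name__ == "__main__":
    for tokens in (1e9, 1e10, 1e11, 1e12):
        print(f"{tokens:.0e} tokens -> predicted loss {loss_from_data(tokens):.3f}")
    print(f"Each 10x more data cuts loss by about {improvement_per_10x():.1%}")
```

Under these assumptions, every tenfold increase in data buys roughly the same one-fifth relative reduction in loss. Each new step therefore costs ten times more than the last for a shrinking absolute gain, which is one way to read the diminishing returns discussed below.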

To continue pushing the envelope and create smarter models, AI labs began searching for alternatives.

The most promising one to date is post-training. Once a model has been trained, its performance can still be improved with reinforcement learning techniques, by allocating more compute to answering challenging questions, or with a dedicated round of training on a smaller subset of tasks.

Post-training took off and gave rise to a new generation of models: o3 for OpenAI, Claude 3.7 Sonnet for Anthropic, and Grok 3 for xAI. All the recent models, including GPT-5, lean heavily on post-training.

But despite being heralded as the ‘new scaling laws’, post-training techniques have never delivered a leap in quality as large as the one the AI community experienced when GPT-4 shipped.

Now that scaling laws no longer deliver groundbreaking performance and no revolutionary alternative is in sight, is it safe to say that AI has hit a ceiling?

That would be a premature conclusion, but what machine learning certainly needs now is time. For most of 2025, the AI landscape has felt like a constant race, with better, bigger, and cheaper models deployed every other week.

Amid that race, it’s easy to lose track of the field’s foundational problems: memory, context, reliability, governance, multi-modality, and true agentic autonomy.
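One simple way to picture ‘allocating more compute to answering challenging questions’ is majority voting: sample several answers and keep the most common one. The toy model below is a simplified sketch, not how any particular lab implements it. It assumes each sample is independently right with a fixed probability and treats the vote as binary (right vs. wrong). `majority_vote_accuracy` is a hypothetical helper.

```python
"""Toy model of spending extra inference compute on a hard question via majority voting."""
from math import comb


def majority_vote_accuracy(p_correct: float, n_samples: int) -> float:
    """Probability that a strict majority of `n_samples` independent answers is correct.

    Simplifying assumptions: each sample is independently correct with
    probability `p_correct`, and every wrong sample votes against the right
    answer. `n_samples` must be odd so there are no ties.
    """
    if not 0.0 <= p_correct <= 1.0:
        raise ValueError("p_correct must be in [0, 1]")
    if n_samples < 1 or n_samples % 2 == 0:
        raise ValueError("n_samples must be a positive odd integer")
    needed = n_samples // 2 + 1  # smallest strict majority
    # Sum the binomial probabilities of getting at least `needed` correct samples.
    return sum(
        comb(n_samples, k) * p_correct**k * (1 - p_correct) ** (n_samples - k)
        for k in range(needed, n_samples + 1)
    )


if __name__ == "__main__":
    for p in (0.6, 0.4):
        row = ", ".join(
            f"n={n}: {majority_vote_accuracy(p, n):.2f}" for n in (1, 5, 25)
        )
        print(f"single-sample accuracy {p:.1f} -> {row}")
```

In this toy model, extra samples raise accuracy only when a single answer is already right more often than not. When it is not, voting entrenches the error, so spending more compute refines what the model already knows rather than adding new capability.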

Economic reality check: AI productivity claims vs. actual results

Sensational statements such as Dario Amodei’s prediction that AI could wipe out half of all entry-level white-collar jobs within the next five years suggest that the hype train is indeed moving too fast. And even if Morgan Stanley’s multi-trillion-dollar forecast holds, there is no reason to assume its benefits will reach everyone in one fell swoop.

Implementing AI inside corporations is also significantly harder than deploying general-purpose models, because internal data is highly specialized and confidential.

Reaching the point where every major enterprise has a reliable, fully integrated AI ecosystem is itself a few years away. A stage where AI tools alone execute complex workflows, with no human supervision, may never arrive.

For now, a realistic take on AI’s impact on the economy seems to be two-fold. On the one hand, yes, it will be a disruptive technology that can increase productivity and become a helpful assistant to white-collar workers.

On the other hand, hopes for fully reliable superintelligence or 100% autonomous AI agents that need no oversight are most likely far-fetched.

Economists’ concerns that the rapid pace of AI funding could disrupt the market, however, do stand on solid ground.

The ambitious deals tech companies are reporting are heavily dependent on debt financing.

JPMorgan Chase and Mitsubishi UFJ are leading the sale of a $22 billion loan that will fund AI infrastructure. Meta’s data center campus in Louisiana is also funded by a loan backed by Pacific Investment Management and Blue Owl Capital.

This eerily mirrors the early 2000s, when tech and telecom companies poured borrowed money into projects with unproven returns and were caught unprepared when the market corrected.

It’s natural for credit investors to think back to the early 2000s when telecom companies arguably overbuilt and overborrowed, and we saw some significant writedowns on those assets. So, the AI boom certainly raises questions in the medium term around sustainability.

Daniel Sorid, head of U.S. investment-grade credit strategy at Citigroup, in a Fortune article

The remedy for this risk comes straight out of Finance 101: asset diversification.

Alongside bets on AI that will likely generate revenue in the long term but won’t drive immediate gains, companies should keep financing tried-and-true ‘cash cows’ such as cloud services and enterprise SaaS licensing.

Bottom line: Are we entering the AI bubble?

The warning signs are there. The AI hype wave fuels unrealistic expectations, which push investors to funnel millions into projects that may lack a durable moat or groundbreaking technology. When over-the-top promises inevitably fall short, the market is bound to course-correct through some stock turbulence.

On the other hand, the power of AI as a technology is not a figment of Silicon Valley’s imagination. The machine learning landscape evolves at breakneck speed, and this ‘golden age’ of AI will help the field attract more talent, solve its deepest problems, and pioneer impactful use cases in finance, healthcare, engineering, and other mission-critical industries.

Superintelligence straight out of the sci-fi playbook may remain out of reach, but AI will likely help eliminate most repetitive tasks involving data gathering, processing, and organization. That disruption can be powerful, too, and it is worth being excited about.